In the previous article we established the core distinction between Skill and Subagent: Skill manages knowledge loading, Subagent manages Context isolation. But we left one dilemma unresolved:

High token volume and interactive — what do you do?

The Official Recommendation: Explore First, Then Plan, Then Execute

Anthropic’s Claude Code Best Practices [1] actually describes a pattern very close to this:

Explore first, then plan, then code. Separate research and planning from implementation to avoid solving the wrong problem.

And the official docs explicitly recommend using Subagents for the exploration phase [1]:

Use subagents for investigation… When Claude researches a codebase it reads lots of files, all of which consume your context. Subagents run in separate context windows and report back summaries.

So the official guidance is: separate exploration from decision-making — use a Subagent to isolate the exploration, keep decision-making in the main conversation. Building on that idea, if we bring in Skill’s knowledge loading, a practical workflow looks like this:

Subagent"] D["Decide (low token volume, interaction needed)

Main conversation + Skill"] X["Execute (high token volume, no interaction needed)

Subagent + preloaded Skill"] E -- "returns summary" --> D D -- "decision confirmed" --> X

Exploration is the heavyweight phase — hand it to a Subagent to run in an isolated environment and return a concise summary. Decision-making is lightweight but interactive — keep it in the main conversation, using the Skill’s knowledge framework to reason through the choice. Once the decision is made, dispatch a Subagent carrying that knowledge to handle execution.

A concrete example — “redesign the database schema”:

| Phase | Approach | Why |

|---|---|---|

| Investigate the current schema | Subagent | Requires reading many files; high output volume; no interaction needed |

| Discuss the design direction | Main conversation + Skill (design standards) | Requires back-and-forth, but only the summary is present, so token count is small |

| Run the migration | Subagent + preloaded Skill | Heavy execution, but needs to carry the design knowledge along |

No phase simultaneously hits both “high token volume” and “needs interaction” at once.

Two Combination Directions

Beyond splitting phases, Skill and Subagent can be combined in two more direct ways. Both directions are explicitly documented in the Claude Code official docs [2][3]:

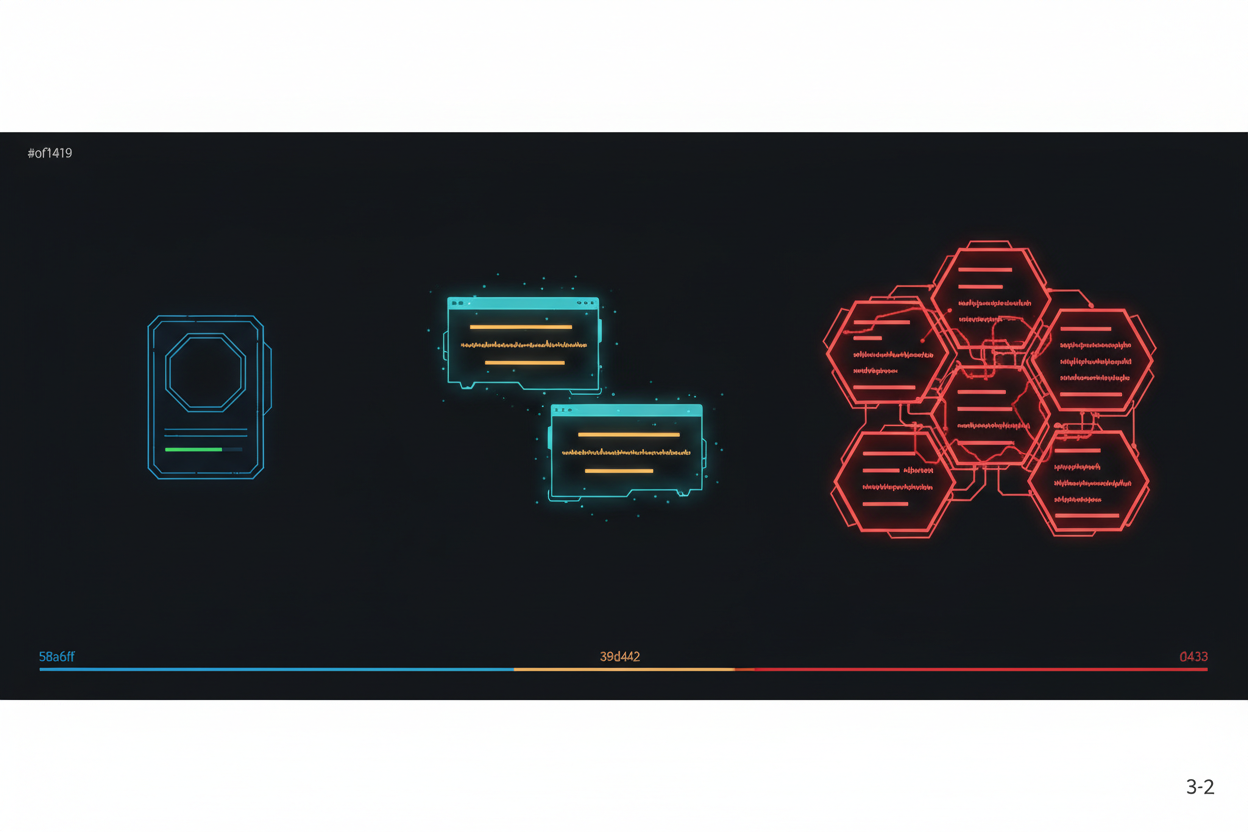

Direction 1: Skill runs inside a Subagent (context: fork)

When executing a Skill would generate a large number of tokens, run the Skill inside an isolated environment. Knowledge comes from the Skill; isolation comes from the Subagent — you get the advantages of both. The Skill defines the task content, and you choose an agent type to execute it [2].

Direction 2: Subagent preloads a Skill (skills field)

Going the other way: when a Subagent needs specialized knowledge, preload the Skill at startup. The Subagent controls the system prompt, and the Skill content is fully injected when the Subagent launches [3].

The difference between the two directions:

context: fork → Skill defines the task (static), agent type executes → high predictability

skills field → Subagent defines the task (dynamic), Skill provides knowledge → high flexibility

One important detail: Subagents do not automatically inherit Skills from the parent conversation — you must declare them explicitly. This behavior is hardwired in the official docs [3]. It’s actually good design — going back to the principle from Article 3, anything you don’t need shouldn’t be loaded at all.

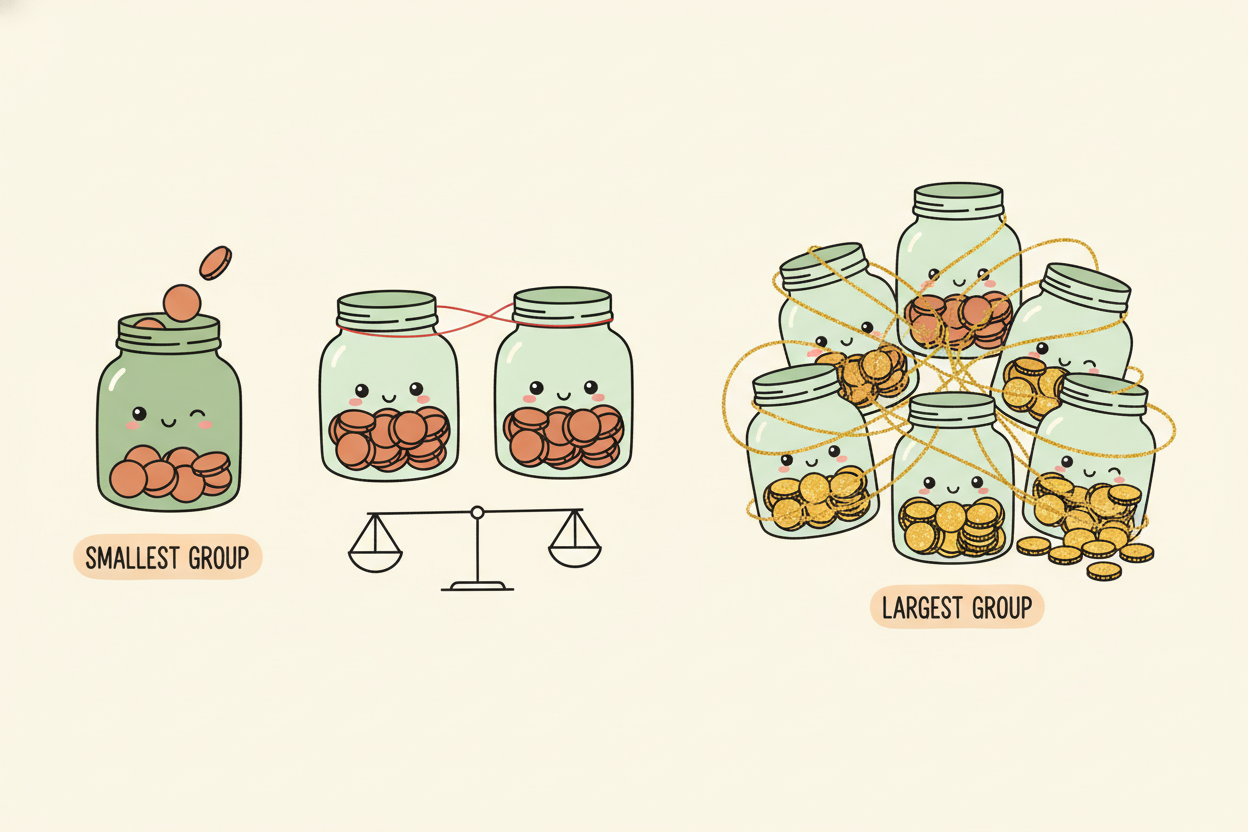

Cost Is Also a Decision Factor

Up to this point we’ve been talking about Context — what to load, what to isolate. But in practical use in 2026, cost has become just as important a consideration as Context.

Every additional Subagent you spin up means opening another Context Window. Anthropic’s research data shows [4]:

- Agent mode consumes roughly 4x the tokens of a regular conversation

- Multi-Agent systems consume roughly 15x the tokens of a regular conversation

Subagents aren’t free. They solve the Context isolation problem, but the price is significantly higher token consumption. Conversely, running a Skill inline — while it does occupy the main Context — doesn’t require opening a new Context Window, which keeps costs lower.

This means the decision model needs one more dimension:

lowest cost"] Q2 -- "Very large" --> A2["Split into phases

Explore → Decide → Execute"] Q3 -- "No" --> A3["Subagent

medium cost"] Q3 -- "Yes" --> A4["Subagent + preloaded Skill"]

Context Engineering isn’t just about managing where tokens flow — it’s also about managing what tokens cost. Isolation isn’t free; every act of isolation is a token expenditure. So the best approach isn’t “isolate everything” — it’s isolating only when it’s worth it.

Back to the Framework

Placing this discussion back into the five-layer architecture from Article 2:

┌───────────────────────────────────────────────┐

│ Command (entry point) │

├───────────────────────────────────────────────┤

│ Agent (orchestrator) │

│ ├── decides whether to use Skill or Subagent │

│ ├── decides when to load, when to isolate │

│ └── this is Context Engineering │

├───────────────────────────────────────────────┤

│ Tool (execution) Skill (knowledge) │

│ ↕ can be preloaded by │

│ Subagent │

│ Subagent (isolated execution) │

│ └── has its own Tool + Skill + Context │

├───────────────────────────────────────────────┤

│ Context (domain knowledge) │

└───────────────────────────────────────────────┘Skill lets knowledge load on demand; Subagent lets execution run in isolation. Together they provide precise control over Context along two separate dimensions. It’s not “use Skill for simple tasks, Subagent for complex ones” — it’s: keep what should stay, isolate what should be isolated, combine what should be combined.

References

[1] Anthropic: “Best Practices for Claude Code” — “Explore first, then plan, then code”; use Subagents for investigation to protect the main Context https://code.claude.com/docs/en/best-practices

[2] Anthropic: “Extend Claude with Skills” — Two combination approaches: use context: fork when the Skill defines the task; use the skills field when a Subagent needs to preload a Skill

https://code.claude.com/docs/en/skills

[3] Anthropic: “Create Custom Subagents” — “Use the skills field to inject skill content into a subagent’s context at startup”; “Subagents don’t inherit skills from the parent conversation; you must list them explicitly”

https://code.claude.com/docs/en/sub-agents

[4] Anthropic: “How We Built Our Multi-Agent Research System” — “agents typically use about 4x more tokens than chat interactions, and multi-agent systems use about 15x more tokens” https://www.anthropic.com/engineering/multi-agent-research-system

[5] Zenn: “Skill context:fork vs Sub-agent skills Field” — context: fork offers fixed, predictable task definition; skills field offers dynamic flexibility

https://zenn.dev/trust_delta/articles/claude-code-skills-subagents-approaches

But there’s a scenario where Subagents aren’t enough either

Up to this point, Subagents have followed one pattern: the main Agent dispatches them, they complete the work, they come back. One-to-one, hub-and-spoke.

But what happens when the task looks like this?

You need 3 Subagents to simultaneously research different directions — and their results need to reference each other.

Subagent A’s findings might redirect Subagent B’s investigation. B’s conclusions might overturn C’s assumptions. “Dispatch, complete, return” can’t handle this — you need collaboration.

But Subagents don’t share a Context, and they can’t message each other. The official docs are explicit: Subagents can only report back to the parent; they cannot communicate with other Subagents.

And there’s an even more practical concern: if a Multi-Agent system consumes 15x the tokens of a regular conversation, then when you need multiple Agents working together, cost management becomes just as critical a problem as Context management.

That’s exactly what Agent Team is designed to solve — but that’s the next article.

Support This Series

If these articles have been helpful, consider buying me a coffee