Contents

- The Subagent Ceiling: Hub-and-Spoke

- Agent Team: From Hub-and-Spoke to Mesh

- Understanding the Three Patterns Through Context Flow

- Skill — Context Coexistence

- Subagent — Context Isolation, One-Way Reporting

- Agent Team — Context Isolation, With Communication Channels

- But Agent Team Is Expensive

- The Full Decision Spectrum

- From Article 1 to Article 7

- A Final Thought

- References

Over the first six articles we traveled from Model ≠ Runtime all the way to the Skill + Subagent combination pattern. Along the way, something significant has been happening: Skills no longer belong to any single vendor.

In late 2025, Anthropic opened the Agent Skills specification as an open standard [4]. OpenAI’s Codex CLI adopted the same format. At the same time, Anthropic donated MCP to the Linux Foundation’s Agentic AI Foundation, with OpenAI and Block joining as co-founders.

Looking back at the prediction in Article 4: the Skill ecosystem competition has already begun. But the direction isn’t fragmentation — it’s standardization. The Tool layer has MCP; the Skill layer is starting to have a shared format. This means what Article 4 described — “whoever builds the Skill ecosystem first wins” — is playing out in real life, except not through each vendor going their own way, but through everyone racing to define the standard.

That said, standardization solves the portability of knowledge. When a task requires not just knowledge but collaboration between multiple Agents, we run into a new architectural problem.

The Subagent Ceiling: Hub-and-Spoke

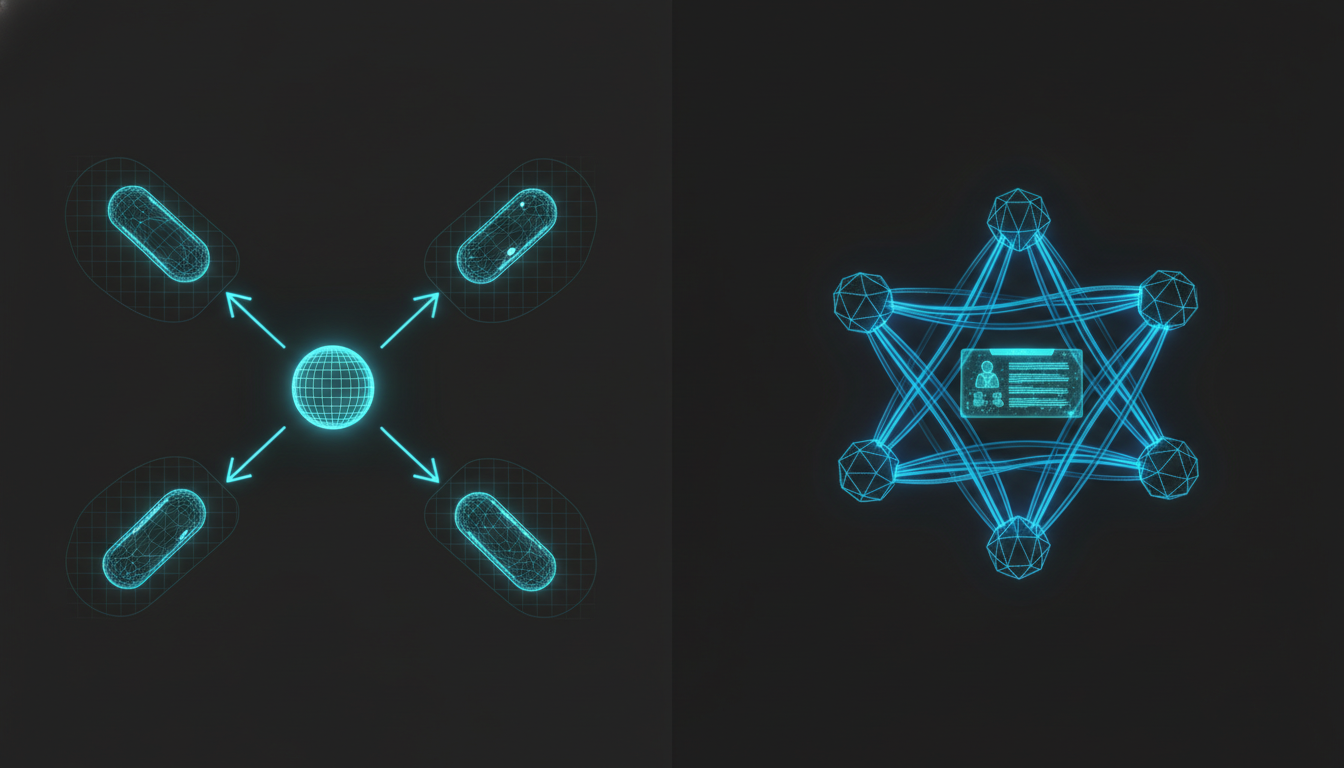

At the end of Article 6 we noted that Subagents follow a one-to-one pattern: the main Agent dispatches them, the Subagent finishes, and it reports back. All communication goes through the parent [5].

This pattern is sufficient in most situations. But there is one scenario it cannot handle:

Sub A discovers a critical piece of information that would change the direction of Sub B’s research.

In the hub-and-spoke model, A must first report to Main, and Main must then decide whether to notify B. But Main’s Context Window is already busy processing reports from all three Subagents [6] — it may not even notice that A’s discovery has implications for B.

The official docs are explicit about this [1]:

Subagents cannot spawn other subagents.

There is no communication channel between Subagents. They cannot message each other; each one runs in complete isolation. When what you need is not “send someone to do a job” but “have people work together,” Subagents hit their limit.
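The relay problem can be made concrete with a small sketch. Everything here is hypothetical (the `MainAgent` and `Subagent` classes, the `relay` method, the sample finding); it is not Claude Code's API, only a toy model of the hub-and-spoke shape described above, where a finding from A reaches B only if the hub decides to forward it.

```python
from dataclasses import dataclass, field


@dataclass
class Subagent:
    """Hypothetical worker: holds no reference to its siblings."""
    name: str
    inbox: list[str] = field(default_factory=list)

    def report(self, finding: str) -> str:
        # The only outbound path is a report addressed to the parent.
        return f"[{self.name}] {finding}"


@dataclass
class MainAgent:
    """Hypothetical hub: every report lands in its own context first."""
    context: list[str] = field(default_factory=list)

    def receive(self, report: str) -> None:
        self.context.append(report)

    def relay(self, report: str, target: Subagent) -> None:
        # Forwarding is an explicit decision by the hub; nothing happens by default.
        target.inbox.append(report)


main = MainAgent()
sub_a, sub_b = Subagent("A"), Subagent("B")

main.receive(sub_a.report("the API under study was deprecated"))
inbox_before_relay = list(sub_b.inbox)   # still empty: B has heard nothing

main.relay(main.context[0], sub_b)       # happens only if Main notices the link
```

If Main never calls `relay`, B keeps working from stale assumptions, which is exactly the failure mode the scenario describes.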

Agent Team: From Hub-and-Spoke to Mesh

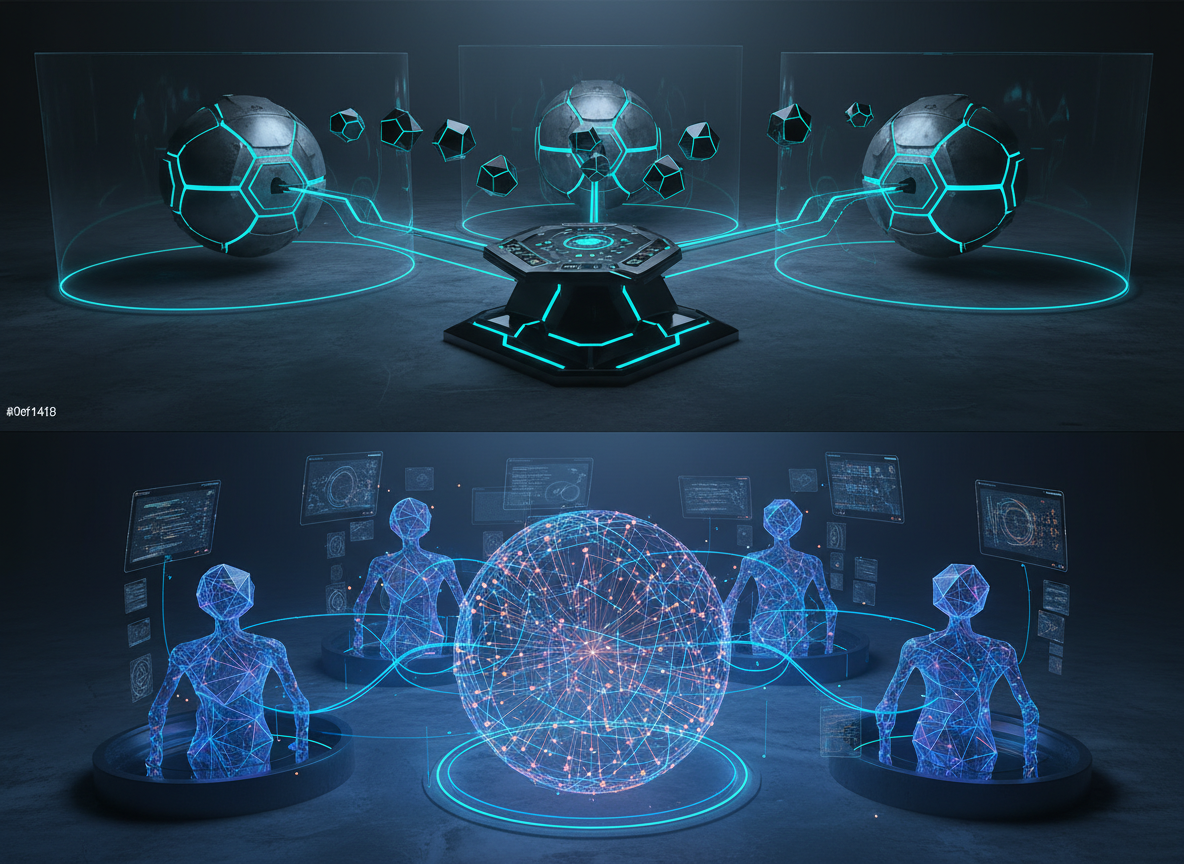

In February 2026, Anthropic launched Agent Teams [1] (currently an experimental feature). It solves exactly the problem of Subagents being unable to collaborate with one another.

By analogy: Subagents are contractors you send out. You give them a task; they finish it and deliver a report. An Agent Team is your project team: members hold meetings, update each other on progress, and adjust their direction based on each other’s findings.

One important thing to understand though: Agent Team does not mean “sharing a Context Window.”

Understanding the Three Patterns Through Context Flow

In Article 5 we used Context flow to distinguish Skill from Subagent. The same framework applies directly to Agent Team. Let’s put all three side by side:

Skill — Context Coexistence

One Context Window, with knowledge living inside it. The benefit is immediate interaction; the cost is a Context that keeps getting more crowded.

Subagent — Context Isolation, One-Way Reporting

Two Context Windows, completely isolated. When the Subagent finishes, it reports a summary back to the parent in one direction. The parent never sees the intermediate steps, and Subagents cannot see each other.
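As a rough illustration of the difference in Context flow between the first two patterns, the sketch below models a Context Window as a plain list of strings. The function names, the sample task, and the placeholder skill text are all assumptions made for the example.

```python
def use_skill(context: list[str], skill_text: str) -> list[str]:
    """Skill: the knowledge is loaded into the caller's own context."""
    return context + [skill_text]


def run_subagent(task: str) -> str:
    """Subagent: works in a fresh context and returns only a summary."""
    private_context = [task]
    private_context.append("step 1: read 40 files")    # intermediate work
    private_context.append("step 2: compare results")  # stays in this function
    steps = len(private_context) - 1
    return f"summary of {task!r} ({steps} steps taken)"


parent = ["user request"]

# Skill: the full skill body now lives alongside the user request.
parent_with_skill = use_skill(parent, "full body of a skill file")

# Subagent: only the one-line summary crosses back to the parent.
parent_with_report = parent + [run_subagent("audit the logging module")]
```

The intermediate steps never appear in `parent_with_report`; the parent sees the summary and nothing else.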

Agent Team — Context Isolation, With Communication Channels

[Diagram: teammates A and B exchange concise messages through a mailbox; both read and write the Shared Task List (shared state, not shared Context).]

Each teammate still has its own independent Context Window — the same as with Subagents, Context remains isolated. But the difference is two additions:

Mailbox (bidirectional message channel): teammates can send messages directly to each other without going through the parent. Note that this is not dumping an entire Context onto the other agent — it sends concise, structured messages. The underlying idea is the same as the Subagent Return Contract from Article 6 (explore deep, return shallow), except the direction changes from one-way to two-way.

Shared Task List (shared state): all teammates can see the same task list — who is doing what, what is complete, what is still unclaimed. This is not Context sharing, it’s state sharing. Each agent’s reasoning still lives in its own Context Window, but through the task list, everyone knows the overall progress.

So the Context architecture of Agent Team can be understood like this:

Isolated reasoning, connected communication, shared state.

Compared to Subagents, Agent Team doesn’t “break down isolation” — it builds communication channels and a coordination layer on top of isolation. The Context doesn’t get bigger; what changes is how information flows.
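One way to picture “isolated reasoning, connected communication, shared state” is the minimal sketch below. The `Teammate` and `SharedTaskList` classes and the task names are invented for illustration and say nothing about how Agent Teams is implemented internally; the point is only that private context, mailbox, and task list are three separate things.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SharedTaskList:
    """Shared state: task name -> owner (None means unclaimed)."""
    owners: dict[str, Optional[str]] = field(default_factory=dict)

    def claim(self, task: str, who: str) -> bool:
        if task in self.owners and self.owners[task] is None:
            self.owners[task] = who
            return True
        return False

    def unclaimed(self) -> list[str]:
        return [t for t, owner in self.owners.items() if owner is None]


@dataclass
class Teammate:
    name: str
    context: list[str] = field(default_factory=list)  # private reasoning
    mailbox: list[str] = field(default_factory=list)  # incoming messages

    def think(self, thought: str) -> None:
        self.context.append(thought)

    def send(self, other: "Teammate", message: str) -> None:
        # Only the concise message crosses over, never self.context.
        other.mailbox.append(f"{self.name}: {message}")


tasks = SharedTaskList(owners={"parser": None, "codegen": None})
a, b = Teammate("A"), Teammate("B")

a_claimed = tasks.claim("parser", a.name)   # A takes the parser task
b_claimed = tasks.claim("parser", b.name)   # B sees it is already taken

a.think("long chain of reasoning about the grammar")
a.send(b, "grammar is ambiguous; codegen should wait for my fix")
```

B learns what it needs from one short message and from the task list, while A's reasoning stays in A's own context.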

But Agent Team Is Expensive

Communication channels and a coordination layer are not free. Every mailbox message, every task list update, costs tokens.

Anthropic’s research data [2]:

- Agent mode consumes roughly 4x the tokens of a regular conversation

- Multi-Agent systems consume roughly 15x the tokens of a regular conversation

- Real-world example [3]: 16 agents collaborating to build a C compiler, at $20,000 in API costs

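15x is not a small number, and note the baseline: it is measured against a regular chat conversation. If a chat exchange costs $0.10 in tokens, comparable multi-agent work might cost around $1.50, which by the same figures is roughly four times what a single Agent would spend. This isn’t to say Agent Team is bad; it’s that you have to ask yourself: does this task actually require Agents to communicate with each other?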

Most tasks don’t. Most tasks are fine with a single Agent and a good Skill (Anthropic’s recommendation from Article 2). When isolation is needed, use Subagent. Only when a task genuinely requires multiple Agents to collaborate with results that depend on each other is it worth spinning up an Agent Team.
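The arithmetic behind these multipliers is easy to check. The sketch below treats the 4x and 15x figures from [2] as rough averages relative to a plain chat; the 10,000-token baseline and the function name are made up for the example.

```python
# Rough multipliers relative to a regular chat conversation, as cited in [2].
CHAT_MULTIPLIER = {"chat": 1, "single_agent": 4, "multi_agent": 15}


def estimated_tokens(mode: str, chat_baseline_tokens: int) -> int:
    """Scale a chat-sized token budget by the multiplier for the given mode."""
    return CHAT_MULTIPLIER[mode] * chat_baseline_tokens


baseline = 10_000  # hypothetical token count for a plain chat exchange

single = estimated_tokens("single_agent", baseline)
multi = estimated_tokens("multi_agent", baseline)

# How much more a multi-agent run costs than a single agent, not than a chat.
multi_vs_single = multi / single
```

With these numbers a single Agent lands at 40,000 tokens and a multi-agent run at 150,000, so the step from one Agent to many is about 3.75x, while the full 15x applies only when comparing against a plain chat.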

The Full Decision Spectrum

Putting all the tools from seven articles together:

| | Skill (inline) | Subagent | Agent Team |
|---|---|---|---|
| Cost | low | medium | high (15x) |
| Context | coexists | isolated | isolated + communication channels |
| Interaction | immediate | one-way report | bidirectional messages |
| Coordination | none | parent-managed | shared task list |
| Best for | knowledge loading | isolated tasks | complex collaborative tasks |

This is not a spectrum where “further right is always better.” Each position has the scenario it fits best. Choosing the wrong position doesn’t just mean “not good enough”; it means paying for tokens you shouldn’t be spending or stuffing a Context that shouldn’t be stuffed.
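Read as a decision rule, the spectrum might be sketched like this. Reducing the choice to two yes/no questions is a deliberate simplification, and the function and parameter names are hypothetical; real decisions also weigh token budget and task size.

```python
def choose_pattern(needs_isolation: bool, agents_must_talk: bool) -> str:
    """Pick the cheapest pattern that satisfies the task's requirements."""
    if agents_must_talk:
        # Interdependent results: worth paying for mailboxes and a task list.
        return "Agent Team"
    if needs_isolation:
        # Heavy exploration that should not crowd the main Context.
        return "Subagent"
    # Default: one Agent with the right knowledge loaded inline.
    return "Skill"


default_choice = choose_pattern(needs_isolation=False, agents_must_talk=False)
isolated_choice = choose_pattern(needs_isolation=True, agents_must_talk=False)
team_choice = choose_pattern(needs_isolation=True, agents_must_talk=True)
```

The order of the checks encodes the cost argument: the function only moves right on the spectrum when a requirement forces it to.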

From Article 1 to Article 7

Let’s take stock of the road we’ve traveled:

- Model ≠ Runtime — Skills live in the Runtime, not the Model

- Five-layer architecture — Command → Agent → Tool + Skill → Context

- Context Engineering — JIT, Token budgets, Progressive Disclosure

- Skill ecosystem — competition is shifting from Model to Skill, and it’s standardizing

- Skill vs Subagent — the core distinction is Context flow

- Combination patterns — phase splitting, fork, preload, plus cost considerations

- Agent Team — isolated reasoning, connected communication, shared state

The single thread running through all seven articles is this: every decision ultimately comes back to Context management and cost control.

A Final Thought

By this point you might feel like there’s a “right answer” — use Skill for this scenario, Subagent for that one, Agent Team for another. But in practice there is no standard answer.

We are always pulling between two things:

Cost and Performance.

The value-for-money mindset that engineers everywhere care about applies just as much in the world of AI Agents. Isolating a Subagent protects the Context, but costs more tokens. Opening an Agent Team enables collaboration, but multi-agent work runs at roughly 15x the tokens of a regular conversation [2]. Behind every architectural decision is a cost-performance calculation.

At the same time we are navigating between Theory and Practice. These seven articles have established a lot of principles — Context flow, Progressive Disclosure, Subagent Return Contract. These principles are right most of the time, but they are not dogma.

Skill, Subagent, Agent Team — none of them is absolutely right or absolutely wrong. When you are actually solving a problem, theory is in service of the practical. Principles can be broken, but when you break them, you should know clearly what Cost/Performance ratio you are willing to accept.

So when you start building your AI team, don’t ask “which architecture is most correct” — ask instead:

Given my constraints, does this choice deliver enough value?

That is the essence of AI Agent architecture — just like all engineering: making the best tradeoffs within your constraints.

References

[1] Anthropic: “Orchestrate Teams of Claude Code Sessions” — The official comparison: Subagents only report back to the main Agent; Agent Team members can send messages directly to each other https://code.claude.com/docs/en/agent-teams

[2] Anthropic: “How We Built Our Multi-Agent Research System” — “multi-agent systems use about 15x more tokens than chats”; lead agent cannot guide subagents; subagents cannot coordinate with each other https://www.anthropic.com/engineering/multi-agent-research-system

[3] Anthropic Engineering: “Building a C Compiler with Parallel Claudes” — Real-world case study and cost data from 16 agents collaborating to build a C compiler https://www.anthropic.com/engineering/building-c-compiler

[4] SiliconANGLE: “Anthropic Makes Agent Skills an Open Standard” — Coverage of Anthropic opening the Agent Skills specification https://siliconangle.com/2025/12/18/anthropic-makes-agent-skills-open-standard/

[5] LangChain: “Choosing the Right Multi-Agent Architecture” — In the Supervisor pattern (equivalent to Subagent), all communication must pass through the supervisor https://blog.langchain.com/choosing-the-right-multi-agent-architecture/

[6] Factory.ai: “The Context Window Problem” — Enterprise monorepos still exceed the 1M token window; naive “stuff everything” approaches are unreliable and prohibitively expensive https://factory.ai/news/context-window-problem
