Contents

- The Complete AI Agent Stack

- MCP Solves Half the Problem

- Skill Portability: The Hard Part

- 1. Where to find the Skill

- 2. How to load the Skill

- 3. How to coordinate with Tools

- This Looks a Lot Like Early Mobile Apps

- Three Levels of Competition

- Model Layer: Competing on Reasoning Capability

- Runtime Layer: Competing on Developer Experience

- Skill Layer: Competing on Knowledge Ecosystem

- Where Things Stand Today

- So What Should You Be Watching?

- Further Reading

The first three articles broke down the core structure of AI Agent systems:

- Model ≠ Runtime: Skills live in the Runtime, not the Model

- Five-layer architecture: Command → Agent → Tool + Skill → Context

- Context Engineering is the foundation: JIT loading, Token Budgets, Progressive Disclosure

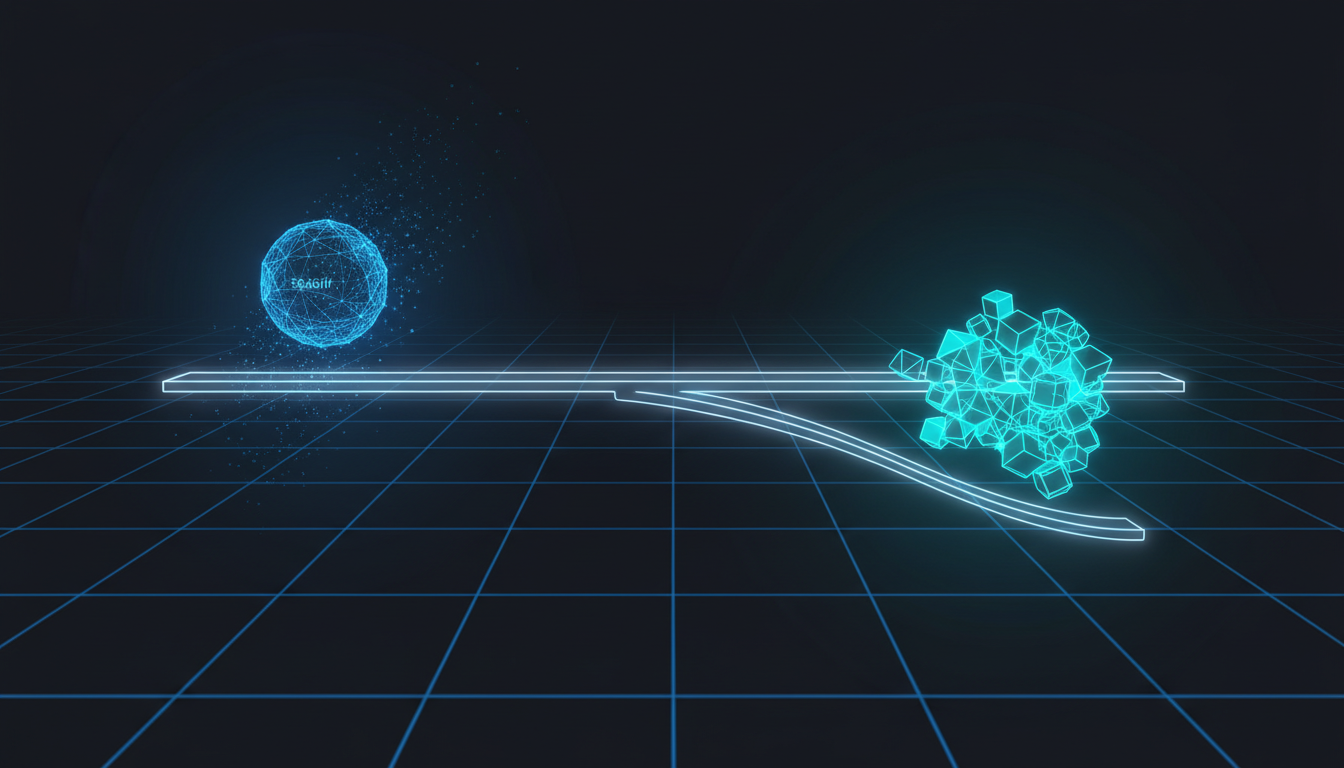

Put all of that together and you get a complete AI Agent Stack — and the shape of that Stack reveals exactly where the competitive battle in AI is heading.

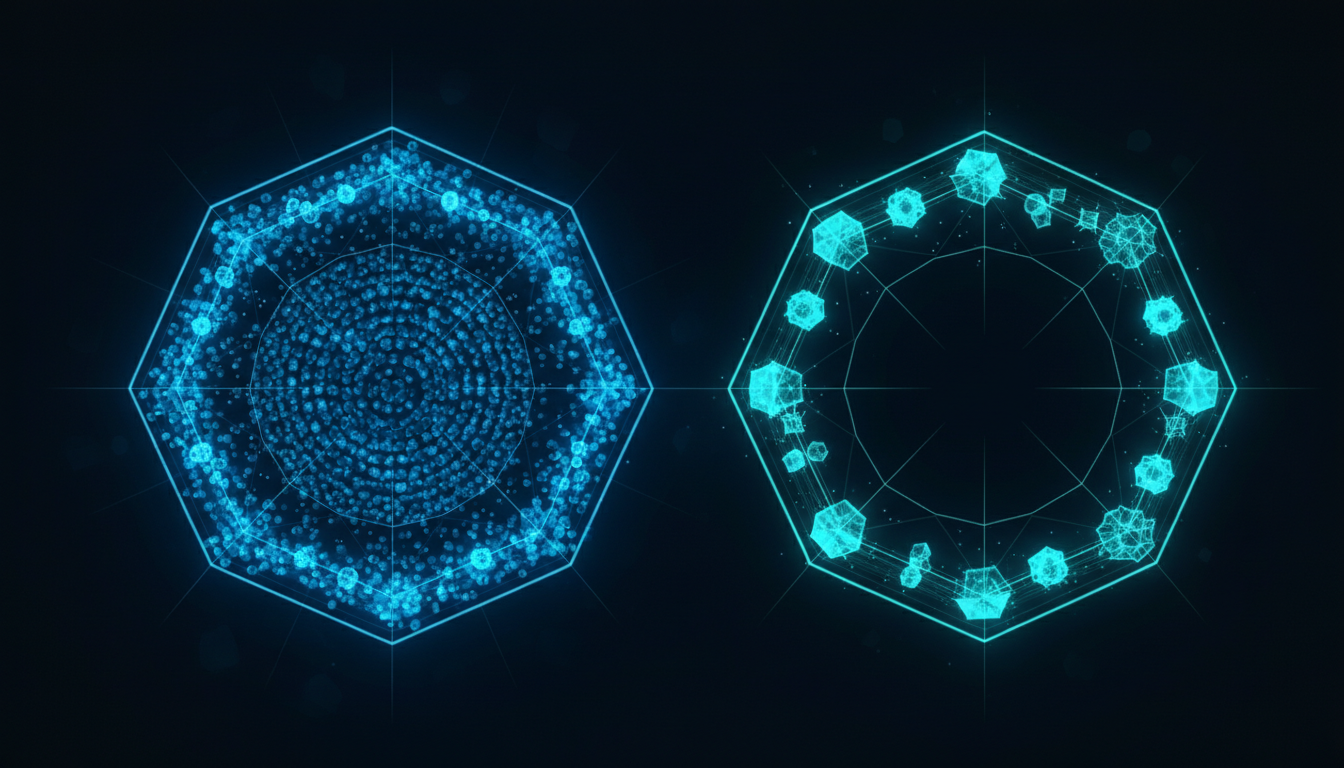

The Complete AI Agent Stack

Combine the “three-tier tech stack” from article one with the “five-layer internal architecture” from article two:

Diagram: the complete AI Agent Stack, from top to bottom:

- APP: Claude Code · Cursor · Windsurf

- Agent Runtime Layer (skill loader · context injection · workflow): Command → Agent; Agent → Tool and Agent → Skill; Tool and Skill → Context (managed by Context Engineering)

- Model Layer: Claude · GPT · Gemini (pure reasoning engines)

Three things are worth noting:

First, all five layers live inside the Runtime. Command, Agent, Tool, Skill, Context — these are all internal structures of the Runtime. The Model has no awareness of any of them. It only ever sees the final assembled prompt that gets injected into its context.
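The first point can be made concrete with a minimal Python sketch. It assumes a toy `Runtime` class and an `echo_model` function that stands in for a real Model API. Both are hypothetical and are not any product's actual code. The Runtime resolves the Command, injects the Skill instructions and the Tool list, and then hands the Model nothing but the assembled string.

```python
from dataclasses import dataclass, field
from typing import Callable

# In this sketch a Model is only a function from prompt text to completion text.
# It has no notion of Commands, Skills, Tools, or Context.
Model = Callable[[str], str]


@dataclass
class Runtime:
    """Hypothetical Runtime: it owns every layer the Model never sees."""
    skills: dict[str, str] = field(default_factory=dict)  # command -> Skill instructions
    tools: list[str] = field(default_factory=list)        # names of available Tools

    def assemble_prompt(self, command: str, user_input: str) -> str:
        # The Command picks which Skill's instructions get injected.
        skill_text = self.skills.get(command, "(no skill loaded)")
        tool_list = ", ".join(self.tools) or "(none)"
        # Everything is flattened into a single string before it reaches the Model.
        return (
            f"## Skill\n{skill_text}\n\n"
            f"## Available tools\n{tool_list}\n\n"
            f"## Task\n{user_input}"
        )

    def run(self, model: Model, command: str, user_input: str) -> str:
        return model(self.assemble_prompt(command, user_input))


def echo_model(prompt: str) -> str:
    # Stand-in for a real Model API call: all it ever receives is text.
    return f"received {len(prompt)} characters"


runtime = Runtime(skills={"review": "Flag string-concatenated SQL."}, tools=["Bash", "Read"])
prompt = runtime.assemble_prompt("review", "Review db.py")
reply = runtime.run(echo_model, "review", "Review db.py")
```

Swap `echo_model` for any other function with the same signature and nothing in the Runtime changes. That is the sense in which the Model is unaware of the layers above it.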

March 31, 2026 Update: The Command layer is being merged into Skills. Conceptually it’s still a “user-triggered entry point,” but it’s no longer a separate mechanism. See this news coverage [1] for details.

Second, Context Engineering is the Runtime’s core job. The JIT loading, Token Budgets, and Progressive Disclosure from article three are all executed by the Runtime. The Model has no opinion about how you manage Context — it just processes the tokens it receives.
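The sketch below shows one Context Engineering decision a Runtime might make on every turn: fitting candidate context under a Token Budget. The `ContextItem` type, the priority scheme, and the four-characters-per-token estimate are all assumptions made for illustration. A real Runtime would count tokens with the Model's own tokenizer.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    name: str
    text: str
    priority: int  # lower number = more important (an assumption of this sketch)


def estimate_tokens(text: str) -> int:
    # Crude heuristic: roughly 4 characters per token, at least 1 token.
    return max(1, len(text) // 4)


def pack_context(items: list[ContextItem], budget: int) -> list[str]:
    """Greedily keep the most important items that still fit in the budget."""
    chosen: list[str] = []
    used = 0
    for item in sorted(items, key=lambda i: i.priority):
        cost = estimate_tokens(item.text)
        if used + cost > budget:
            continue  # too big for what is left; a smaller item later may still fit
        chosen.append(item.name)
        used += cost
    return chosen


items = [
    ContextItem("system_rules", "r" * 40, priority=0),       # ~10 tokens
    ContextItem("skill_summary", "s" * 80, priority=1),      # ~20 tokens
    ContextItem("full_skill_body", "b" * 4000, priority=2),  # ~1000 tokens
    ContextItem("recent_diff", "d" * 200, priority=3),       # ~50 tokens
]
selected = pack_context(items, budget=100)
# selected == ["system_rules", "skill_summary", "recent_diff"]
```

The large `full_skill_body` is skipped while its short summary fits. This is Progressive Disclosure expressed as a budget decision, and the Model never learns that anything was left out.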

Third, the same Model can run on completely different Runtimes. Claude can run inside Claude Code, and it can run inside Cursor — but those two Runtimes have entirely different Skill Loaders, Context Injection logic, and Tool Systems.

This is exactly why the question from article one matters so much: whether a Skill can travel across platforms depends on the Runtime, not the Model.

MCP Solves Half the Problem

Within this Stack, the cross-platform problem for Tools already has an answer: MCP. MCP lets different Runtimes connect to external systems using a shared protocol. Whether you’re using Claude Code or Cursor, as long as it supports MCP, you can use the same Slack connector or the same GitHub connector. As we said in article two, MCP is essentially a subset of Tool, but what it solves is very specific: Tool Portability.

Tool portability → MCP (standard exists)

Skill portability → ??? (no standard yet)

Tools are about “how to execute” (calling an API, reading or writing files, querying a database). These actions can be standardized because their interfaces are explicit: input → action → output. But Skills are about “how to think.” How do you standardize that?
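The contrast fits in a few lines of Python. The tool definition below follows the `name` / `description` / `inputSchema` shape that MCP uses to describe tools. However, the `query_database` tool itself and the `validate_call` helper are hypothetical, and the check is a hand-rolled subset of JSON Schema validation rather than a full validator. The Skill, by contrast, is just Markdown text, with no equivalent contract to check it against.

```python
# Tool: an explicit contract. The shape mirrors the name / description /
# inputSchema fields MCP uses to describe tools; the tool itself is hypothetical.
query_tool = {
    "name": "query_database",
    "description": "Run a read-only SQL query and return rows.",
    "inputSchema": {
        "type": "object",
        "properties": {"sql": {"type": "string"}},
        "required": ["sql"],
    },
}


def validate_call(tool: dict, args: dict) -> list[str]:
    """Mechanically check a call's argument names against the declared inputs."""
    schema = tool["inputSchema"]
    errors = [f"missing '{k}'" for k in schema.get("required", []) if k not in args]
    errors += [f"unknown '{k}'" for k in args if k not in schema["properties"]]
    return errors


# Skill: guidance in prose. Nothing here can be validated the same way;
# whether it "works" depends on how a Runtime loads it and how the Model reads it.
review_skill = """\
---
name: sql-review
description: How to review SQL changes for safety.
---
Prefer parameterized queries. Flag any string-concatenated SQL.
"""

ok = validate_call(query_tool, {"sql": "SELECT 1"})       # []
bad = validate_call(query_tool, {"query": "SELECT 1"})    # ["missing 'sql'", "unknown 'query'"]
```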

Skill Portability: The Hard Part

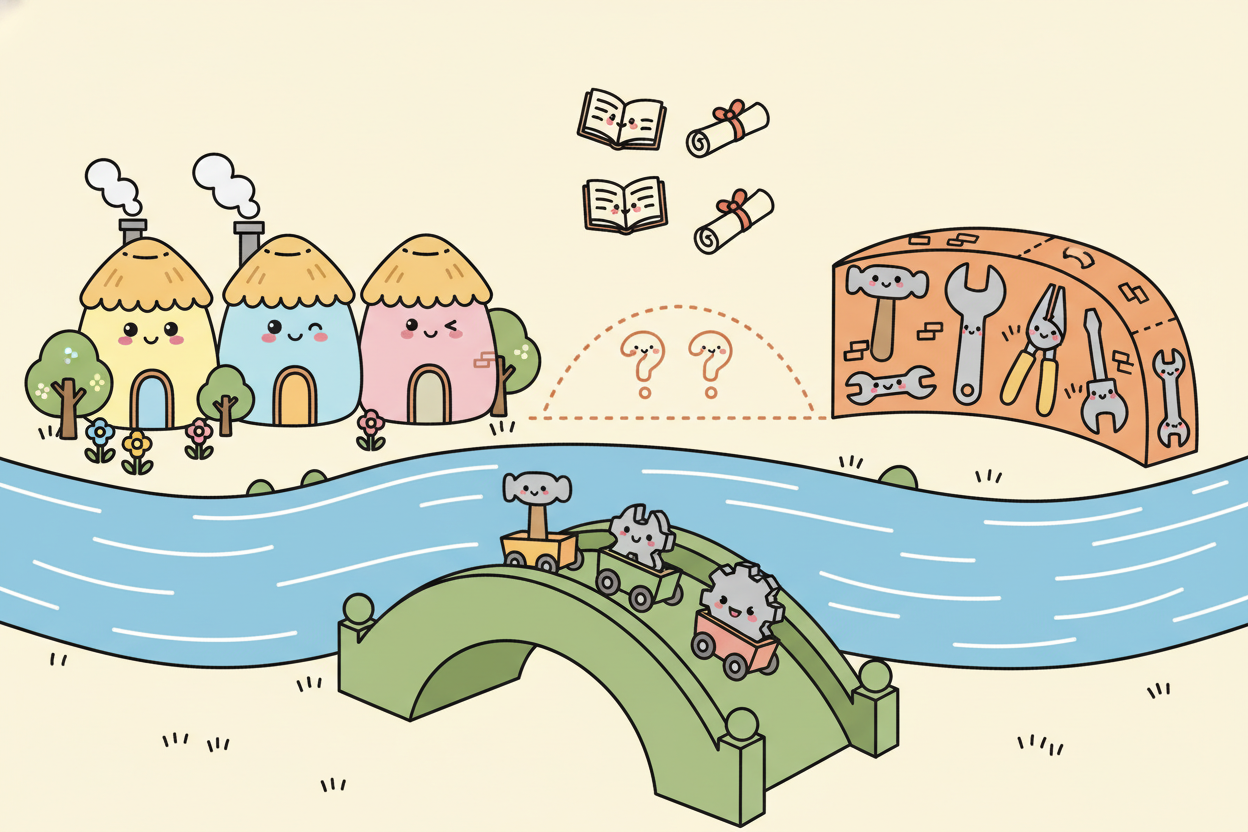

For a Skill to work across platforms, a Runtime needs to understand at least three things:

1. Where to find the Skill

- Where does a Skill live? In Claude Code it’s the `.claude/skills/` directory. But Cursor has its own structure, and so does Windsurf. Even this most basic question has a different answer in every Runtime.
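Here is a minimal discovery sketch. Only the `.claude/skills/` path comes from the text above. The `runtime-b` and `runtime-c` entries are hypothetical placeholders and not the real Cursor or Windsurf layouts. The sketch also assumes that a Skill is any folder containing a `SKILL.md` file.

```python
import tempfile
from pathlib import Path

# Where each Runtime looks for Skills. Only the Claude Code path comes from the
# article; the other two entries are hypothetical placeholders, NOT the real
# Cursor or Windsurf layouts.
SKILL_ROOTS = {
    "claude-code": Path(".claude/skills"),
    "runtime-b": Path(".runtime-b/knowledge"),  # hypothetical
    "runtime-c": Path(".runtime-c/rules"),      # hypothetical
}


def find_skills(project: Path, runtime: str) -> list[str]:
    """Names of Skill folders (folders containing SKILL.md) visible to one Runtime."""
    root = project / SKILL_ROOTS[runtime]
    if not root.is_dir():
        return []
    return sorted(p.parent.name for p in root.glob("*/SKILL.md"))


# Usage: one Skill on disk, discovered by one Runtime and invisible to another.
with tempfile.TemporaryDirectory() as tmp:
    project = Path(tmp)
    skill_dir = project / ".claude" / "skills" / "sql-review"
    skill_dir.mkdir(parents=True)
    (skill_dir / "SKILL.md").write_text("---\nname: sql-review\n---\n", encoding="utf-8")
    found_by_claude_code = find_skills(project, "claude-code")  # ["sql-review"]
    found_by_runtime_b = find_skills(project, "runtime-b")      # []
```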

2. How to load the Skill

- Article three discussed Progressive Disclosure: load the `SKILL.md` summary first, then load subdirectory details only when needed. That loading logic is implemented by the Runtime. Different Runtimes have different Skill Loaders, so the same Skill might be loaded in completely different ways, or might not be loaded at all.
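A minimal two-stage loader sketch follows. It assumes a Claude-style `SKILL.md` that opens with a `---`-delimited block of `name:` and `description:` lines, followed by the instructions. The parsing is a deliberate simplification that handles plain `key: value` lines rather than full YAML. The names `read_summary` and `read_body` are hypothetical. Loading subdirectory files would be a third stage, which is omitted here.

```python
import tempfile
from pathlib import Path


def read_summary(skill_md: Path) -> dict[str, str]:
    """Stage 1: read only the frontmatter block at the top of SKILL.md."""
    summary: dict[str, str] = {}
    with skill_md.open(encoding="utf-8") as f:
        if f.readline().strip() != "---":
            return summary  # no frontmatter: nothing for this loader to index
        for line in f:
            if line.strip() == "---":
                break  # stop at the closing delimiter; body lines are never parsed
            key, sep, value = line.partition(":")
            if sep:
                summary[key.strip()] = value.strip()
    return summary


def read_body(skill_md: Path) -> str:
    """Stage 2: load the full instructions only after the Skill is selected."""
    text = skill_md.read_text(encoding="utf-8")
    parts = text.split("---", 2)
    if text.startswith("---") and len(parts) == 3:
        return parts[2].strip()
    return text.strip()


with tempfile.TemporaryDirectory() as tmp:
    skill_md = Path(tmp) / "SKILL.md"
    skill_md.write_text(
        "---\n"
        "name: sql-review\n"
        "description: Review SQL changes for injection risks\n"
        "---\n"
        "Prefer parameterized queries. Flag string-concatenated SQL.\n",
        encoding="utf-8",
    )
    summary = read_summary(skill_md)  # what the Runtime indexes up front
    body = read_body(skill_md)        # what it loads on demand
```

If a Runtime's loader expects a different layout, `read_summary` returns an empty dict for that same file, and the Skill effectively does not exist for that Runtime.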

3. How to coordinate with Tools

- A Skill doesn’t execute on its own. It provides knowledge that helps the Agent decide which Tools to call. But each Runtime has a different set of available Tools. A Skill that assumes the Agent can use `Bash()` and `WebSearch()` will silently fail if the target Runtime doesn’t have those Tools.
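A Runtime could turn that silent failure into a loud one by checking a Skill's Tool assumptions at load time. The sketch below assumes that each Skill declares the Tools it relies on, through a hypothetical `required_tools` set that the article does not describe. It also assumes that each Runtime publishes its available Tools, through `RUNTIME_TOOLS`, which is likewise hypothetical.

```python
# Tools each Runtime exposes. These sets are hypothetical examples, not the
# actual tool lists of any product.
RUNTIME_TOOLS = {
    "runtime-a": {"Bash", "WebSearch", "Read", "Edit"},
    "runtime-b": {"Read", "Edit"},
}


def check_skill(skill_name: str, required_tools: set[str], runtime: str) -> None:
    """Fail loudly at load time instead of letting the Skill fail silently later."""
    missing = required_tools - RUNTIME_TOOLS[runtime]
    if missing:
        raise RuntimeError(
            f"{skill_name!r} needs tools {sorted(missing)} that {runtime!r} does not provide"
        )


# A Skill whose instructions assume Bash() and WebSearch() are callable
# (declared through the hypothetical required_tools set).
research_needs = {"Bash", "WebSearch"}
check_skill("research", research_needs, "runtime-a")  # passes without error

try:
    check_skill("research", research_needs, "runtime-b")
    error_message = ""
except RuntimeError as err:
    error_message = str(err)
```

For this check to work across platforms, Runtimes would have to agree on both the declaration format and the Tool names. That agreement is exactly the portability gap this section describes.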

Put those three problems together and you understand why a Skill you write in Claude Code does absolutely nothing when you move it to Cursor. It’s not that Claude doesn’t know how to use the Skill — it’s that Cursor’s Runtime doesn’t know how to process it.

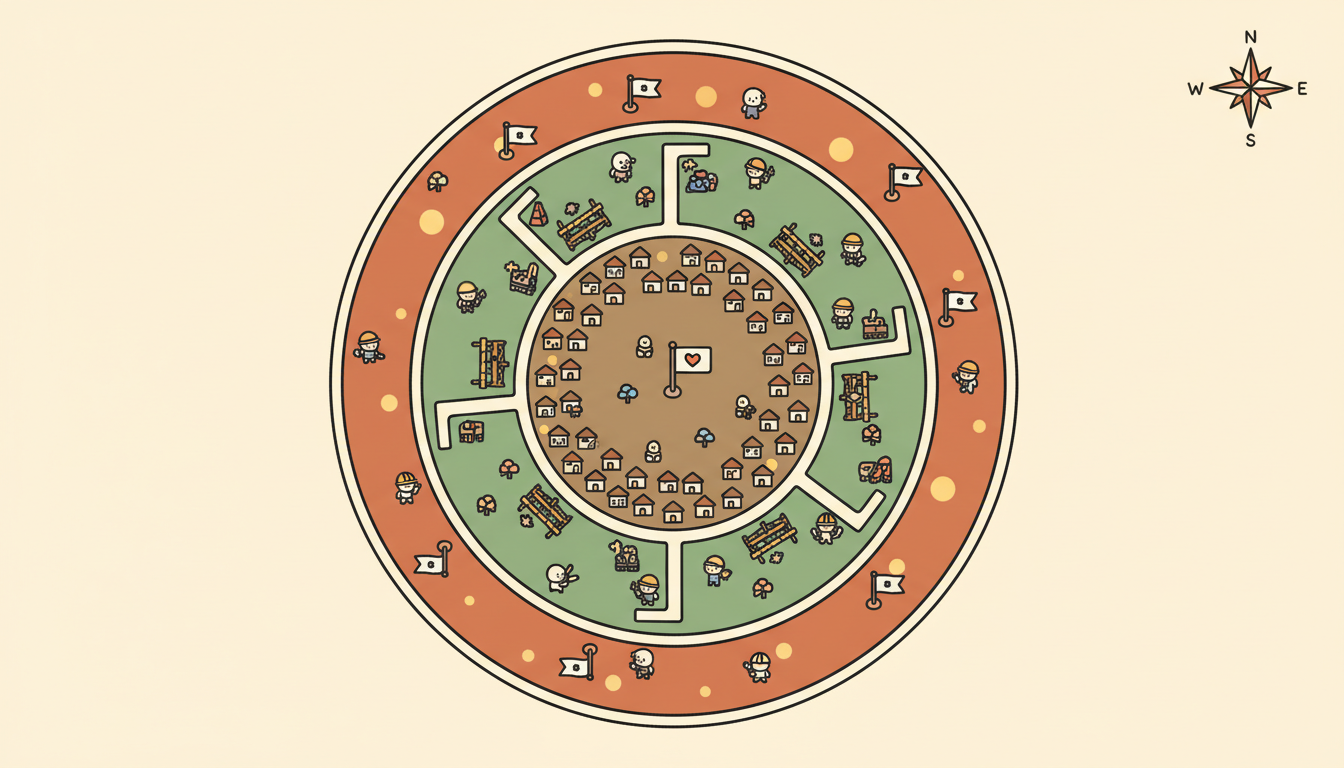

This Looks a Lot Like Early Mobile Apps

If you lived through the early smartphone era, this situation will feel familiar. In 2008, Apple launched the App Store for the iPhone and Android launched Android Market (later renamed Google Play). Windows Phone arrived in 2010 with its own store.

The same app concept — say, a to-do list — had to be rewritten from scratch for every platform. Not because the logic was different, but because every platform had a different runtime, different APIs, and a different UI framework. We all know how that played out: the platform with the richest ecosystem won. Not because its hardware was best, but because the developers were there, the users were there, and the apps were there. AI Agents are walking the same road.

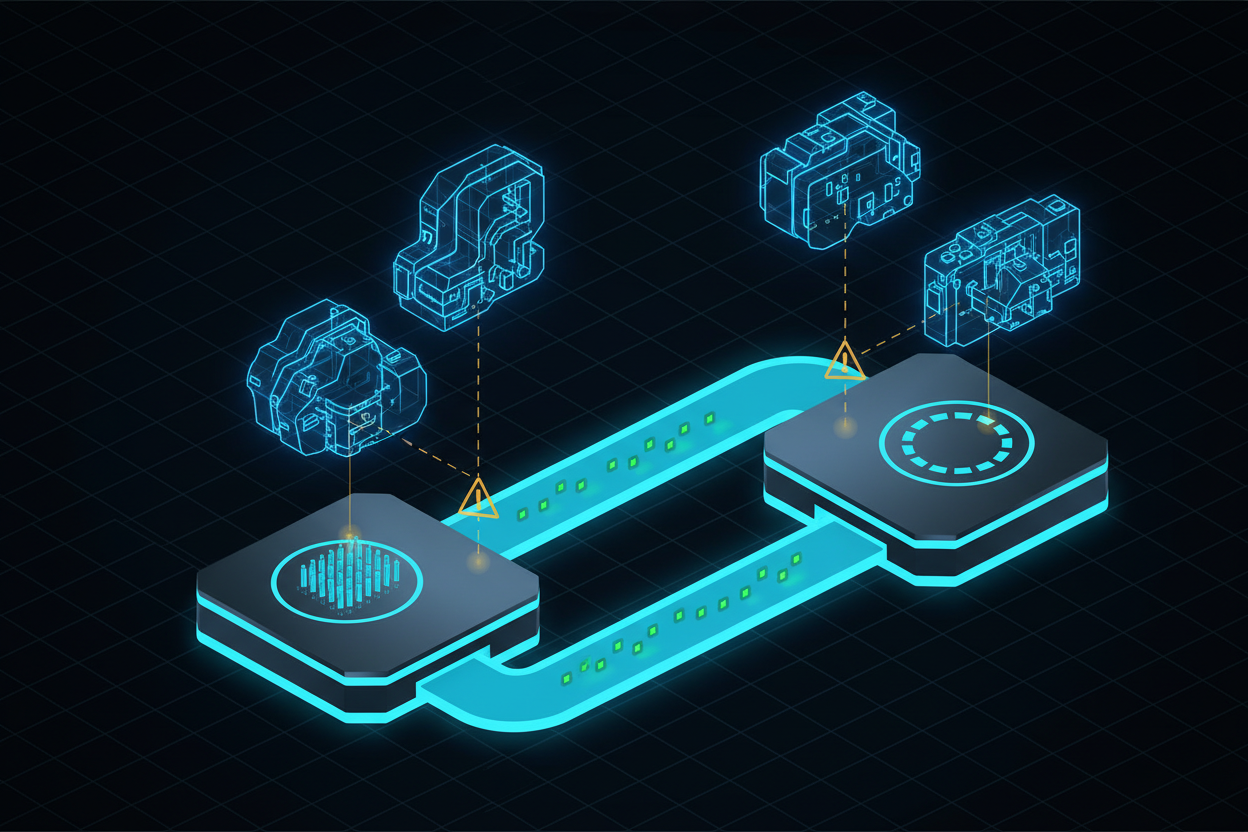

Three Levels of Competition

Broken down into its competitive layers, the AI Agent Stack has three levels:

Model Layer: Competing on Reasoning Capability

Claude vs GPT vs Gemini vs Llama. This is the layer getting the most attention right now. But if a Model is just a reasoning engine, the way a CPU is just a compute engine, then in the long run Models will increasingly become commodities. Almost nobody picks a smartphone by CPU brand today (well… maybe a few very hardware-obsessed engineers).

Runtime Layer: Competing on Developer Experience

Claude Code vs Cursor vs Copilot. The Runtime determines what developers experience day to day: how good the Tool System is, how well Context Management is handled, how flexible the Skill Loader is. Right now, the differences between Runtimes are far larger than the differences between Models — just like people use Gemini for images and video, use Claude for code, and use GPT for… its remarkably confident delivery?

Skill Layer: Competing on Knowledge Ecosystem

This layer is at the earliest stage of development, but it may have the deepest impact. Once Skills can be packaged, shared, and installed, the platform with the most high-quality Skills becomes the most valuable platform. That will not be because its Model is strongest. It will be because its Agent best understands what users actually need. A platform with comprehensive Legal Skills, Security Skills, and Finance Skills is more useful than one with a slightly better Model, because specialized knowledge is scarcer than general-purpose reasoning. Claude’s recently launched Cowork is doing exactly this.

Where Things Stand Today

| Layer | Standardization | Competition |
| --- | --- | --- |
| Model | High (APIs converging) | Intense, but trending toward commodity |
| Tool | Medium (MCP is gaining traction) | Gradually consolidating |
| Skill | Low (no cross-platform standard) | Early; everyone is doing their own thing |
| Context Engineering | Very low | Almost none now, but the open-source community is actively working on it |

The Context Engineering principles from article three — JIT loading, Token Budgets, Progressive Disclosure — aren’t yet standard practice. Most teams are still competing on who has the longest prompt, whose RAG is most accurate, whose Model is newest. But what actually determines the quality of an Agent system isn’t how powerful the Model is — it’s how precisely the Context is managed.

So What Should You Be Watching?

If you’re building AI Agent systems, the conclusion across these four articles boils down to four statements:

1. The Model is the engine, not the system. Don’t put all your attention on the Model. Whether your Agent is actually useful depends 80% on the Runtime and Context Engineering — not the Model.

2. Architecture should be layered, but Context is the foundation. The five-layer architecture (Command → Agent → Tool + Skill → Context) gives you a clear separation of concerns. But if Context Engineering isn’t solid, every other layer degrades.

3. Skill is the next competitive battleground. Once MCP solves Tool portability, the next problem is Skill portability. Whoever builds the Skill ecosystem first holds the strategic high ground in the next round.

4. Build one good Agent before thinking about ecosystems. Anthropic put it well: start with a single Agent and good Context, get Context Engineering right — that’s more valuable than jumping straight to multi-Agent architectures.

The competition in AI is shifting from “whose Model is smartest” to “whose Skill ecosystem is richest.” Models will get stronger, cheaper, and more commoditized. But specialized knowledge won’t become a commodity — it will become a Skill, packaged, shared, and traded. So the most valuable AI platform of the future won’t necessarily be the one with the strongest Model. It will be the one that turns the most specialized knowledge into Skills, and gets those Skills into the hands of the most people. That’s the next chapter of AI Agents as I see it — and it may already be right around the corner.

Further Reading

- Simon Willison: “Claude Skills are awesome, maybe a bigger deal than MCP” [2]. Willison argues that Skills may matter more than MCP: “A skill is a Markdown file telling the model how to do something”

- Communications of the ACM: “The Commoditization of LLMs” [3]. “Low switching costs are a key factor supporting the commoditization of Large Language Models”

- Microsoft: “LLMs Are Becoming a Commodity — Now What?” [4]. Microsoft’s perspective on model commoditization and the shift in competitive dynamics.

- Anthropic: “Effective Context Engineering for AI Agents” [5]. “The quality of every response is determined primarily by what the model is allowed to see in its context window”

- Skillport by gotalab (GitHub) [6]. “Bring Agent Skills to Any AI Agent and Coding Agent — via CLI or MCP. Manage once, serve anywhere.” The existence of this tool confirms the portability gap.

- UC Berkeley (Gorilla): “Agent Marketplace” [7]. An academic perspective on agent marketplace dynamics and ecosystem competition.

References:

[1] News coverage: Claude Code merging Commands into Skills /en/news/claude-code-deprecate-command

[2] Simon Willison: “Claude Skills are awesome, maybe a bigger deal than MCP” https://simonwillison.net/2025/Oct/16/claude-skills/

[3] Communications of the ACM: “The Commoditization of LLMs” https://cacm.acm.org/blogcacm/the-commoditization-of-llms/

[4] Microsoft: “LLMs Are Becoming a Commodity — Now What?” https://www.microsoft.com/en-us/worklab/llms-are-becoming-a-commodity-now-what

[5] Anthropic: “Effective Context Engineering for AI Agents” https://www.anthropic.com/engineering/effective-context-engineering-for-ai-agents

[6] Skillport — gotalab (GitHub): Bring Agent Skills to Any AI Agent https://github.com/gotalab/skillport

[7] UC Berkeley (Gorilla): “Agent Marketplace” https://gorilla.cs.berkeley.edu/blogs/11_agent_marketplace.html
