In one line: I did not build A7 directly because Role 3 needed more than a better monitor prompt. It needed a persistent auditing identity.

A7sus4: I Did Not Build A7 Directly

Once A7’s spec was clear, did I just build it? Not quite.

I built A7sus4 first.

In music, A7sus4 is a dominant seventh chord with a suspended fourth: the fourth stands in for the third, so the chord sounds unresolved and is waiting to return to A7. So A7sus4 is not a throwaway prototype or a toy. It is a production precursor. It takes on one part of A7’s production duty first, then retires only after A7 1.x can handle that duty and has passed a real audit cycle.

Why do this?

Because Role 3, the dispatched event monitor, already had clear demand and data. But the full A7 Role 1 daemon was still blocked by lower-level questions: long-running crash policy, rate-limit checkpoints, heartbeat, watchdog. If I waited until all of A7 was fully designed, Role 3 would remain on paper even though the problem was already happening in production.

The more interesting problem was that the first Role 3 prototype used ephemeral Agent-tool spawns. I would temporarily call a monitor subagent and ask it to watch a piece of work. Several runs produced the same failure pattern: the monitor started understanding itself as “the one who waits.”

It saw sleep N && stat in the prompt. It saw no obvious filesystem signal for a short period. Then it entered wait-mode. Its replies started using passive idioms like “wait,” “let me do nothing and wait,” and “watcher running, waiting for completion notification.” Sometimes it simply spawned and exited, as if it had understood supervision as “I wait until you are done, then I look.”

Come on, that is not the job.

We wanted an active auditor, but the shape of the prompt turned it into a waiter. This is not fixed by writing “please do not wait” in the prompt, because the root cause is not wording. It is identity anchoring.

An ephemeral subagent has no working directory of its own, no CLAUDE.md, no long-term state, and no persistent lifecycle. It is just a temporary role inside a prompt. When task signals are sparse, its most stable mental model is: wait.

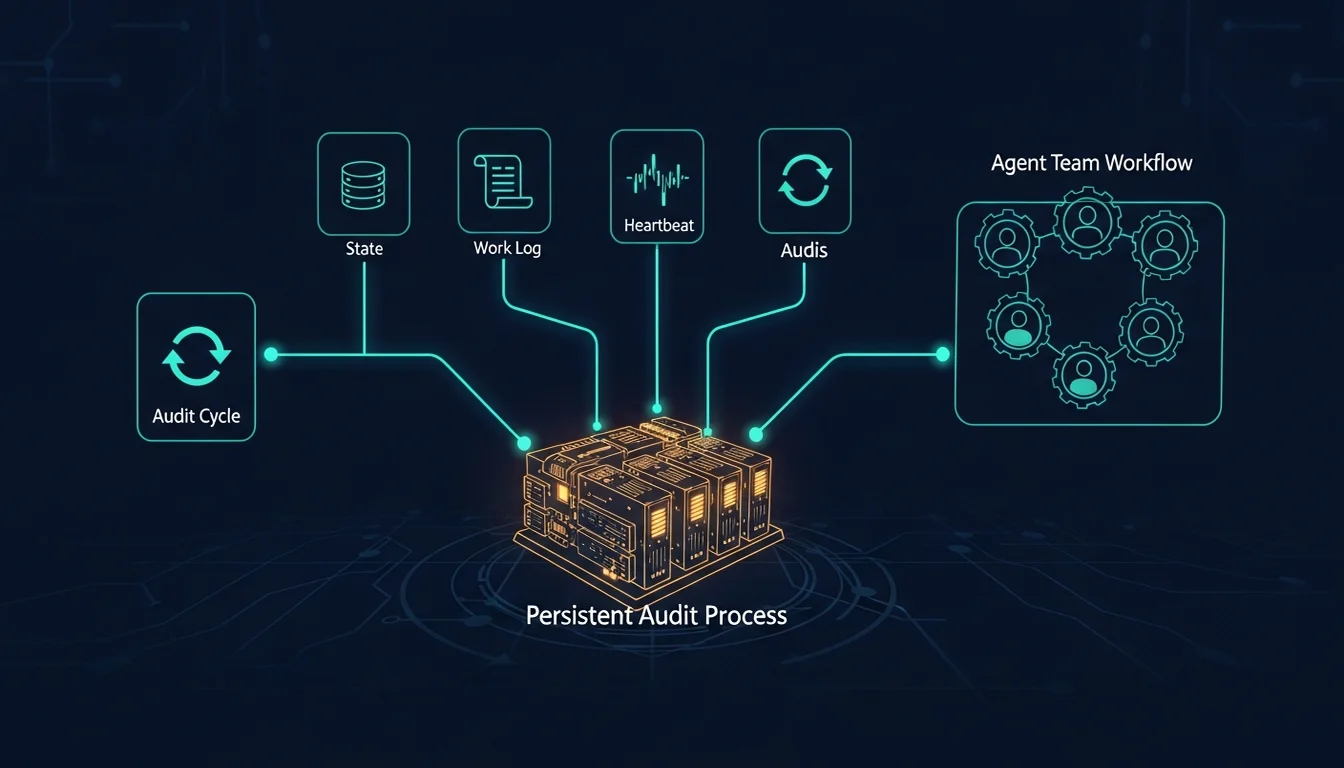

A7sus4’s core fix was to make the role a persistent process inside Overmind. The process itself becomes the Agent. It has its own agent-A7sus4/, its own CLAUDE.md, its own audit-cycle skill, its own state, and its own work log. In other words, A7sus4 did not solve “how do I write a better monitor prompt?” It solved “how do I create and test a persistent identity?”

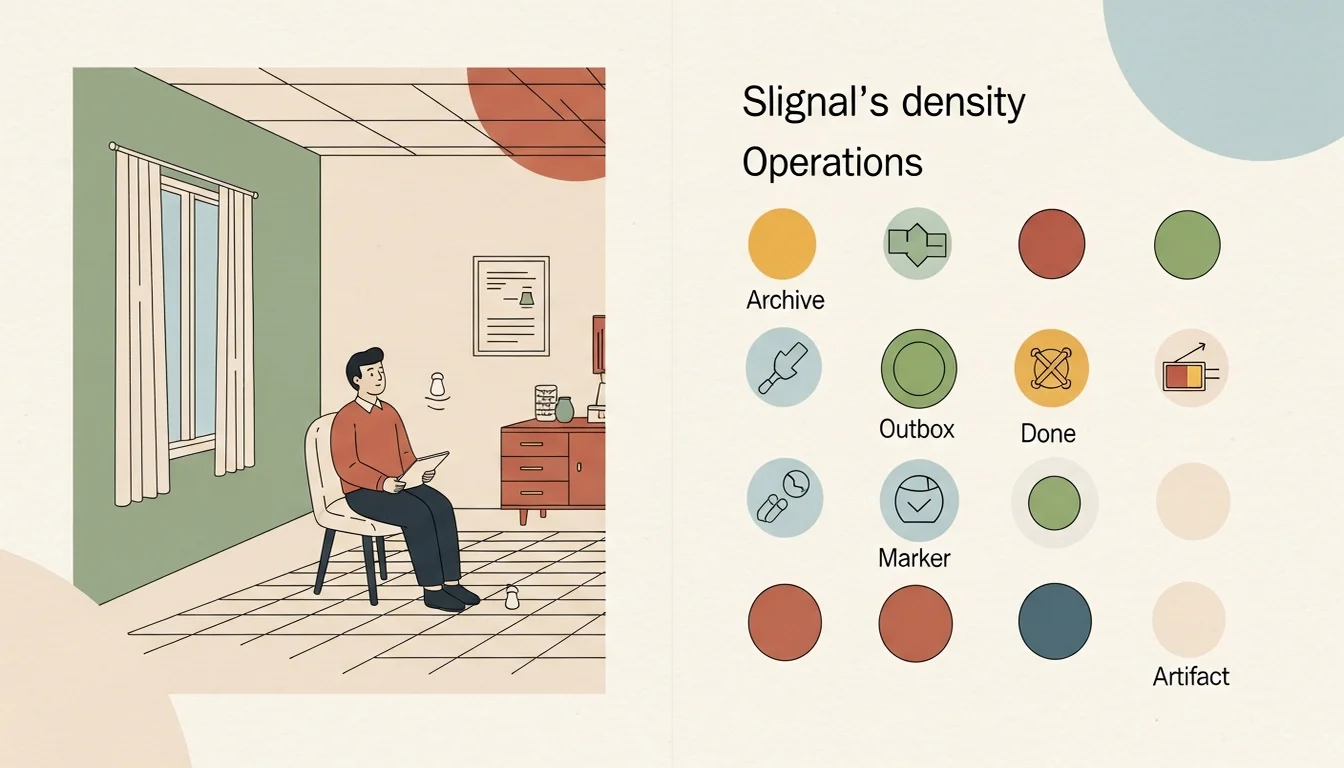
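To make the difference between an ephemeral spawn and a persistent identity concrete, here is a minimal sketch in Python. It is not A7sus4's actual implementation. The file names `state.json` and `work-log.md` and the function `run_cycle` are hypothetical; only the `agent-A7sus4/` directory name comes from the description above. The point is simply that each cycle reads what the previous cycle left behind, so the Agent has a history to anchor on.

```python
import json
import tempfile
from pathlib import Path


def run_cycle(agent_dir: Path) -> int:
    """Run one audit cycle and return the running cycle count."""
    state_path = agent_dir / "state.json"  # hypothetical file name
    log_path = agent_dir / "work-log.md"   # hypothetical file name

    # Load state left by earlier cycles; an ephemeral spawn has nothing to load.
    if state_path.exists():
        state = json.loads(state_path.read_text())
    else:
        state = {"cycles": 0}

    state["cycles"] += 1
    state_path.write_text(json.dumps(state))

    # Append to the work log so the history survives the process.
    with log_path.open("a") as log:
        log.write(f"cycle {state['cycles']}: audit completed\n")
    return state["cycles"]


with tempfile.TemporaryDirectory() as tmp:
    agent_dir = Path(tmp) / "agent-A7sus4"
    agent_dir.mkdir()
    first = run_cycle(agent_dir)
    second = run_cycle(agent_dir)  # picks up where the first cycle stopped
    log_lines = (agent_dir / "work-log.md").read_text().splitlines()
    print(first, second, len(log_lines))
```

The second call continues from the first call's state instead of starting from zero, which is the property a temporary role inside a prompt cannot have.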

Signal Density: Not Every Task Deserves a Live Monitor

After A7sus4 existed, another lesson appeared: the signal-density precondition. At first, I wanted A7sus4 to observe every task. That did not work.

If the target task is just a GM-direct single-file edit, it may only produce one file delta. No dispatch archive, no outbox, no done JSON. Sending that to a live monitor is like asking someone to sit in an empty room, listen to the sea, and record a large wave when it arrives. Of course the monitor starts doubting what it is supposed to do.

So A7 Role 3 needs a preflight. The work unit being observed should have at least two expected filesystem signals inside the audit window: task archive, outbox, done JSON, primary artifact, and so on. If it is below that signal floor, A7 should refuse to spawn or mark degraded confidence. That is better than forcing the mode.
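A minimal sketch of that preflight, assuming the work unit declares its expected signals up front. The names `WorkUnit`, `PreflightDecision`, and `preflight` are illustrative, not part of A7. The floor of two comes from the rule above.

```python
from dataclasses import dataclass
from enum import Enum


class PreflightDecision(Enum):
    SPAWN = "spawn"
    DEGRADED = "degraded-confidence"
    REFUSE = "refuse"


# Signal floor from the text: at least two expected filesystem signals.
SIGNAL_FLOOR = 2


@dataclass(frozen=True)
class WorkUnit:
    name: str
    expected_signals: tuple[str, ...]  # e.g. ("task-archive", "outbox")


def preflight(unit: WorkUnit, allow_degraded: bool = False) -> PreflightDecision:
    """Decide whether a live monitor should be spawned for this work unit."""
    distinct = set(unit.expected_signals)  # duplicates do not add density
    if len(distinct) >= SIGNAL_FLOOR:
        return PreflightDecision.SPAWN
    # Below the floor: refuse, or run with explicitly degraded confidence.
    if allow_degraded:
        return PreflightDecision.DEGRADED
    return PreflightDecision.REFUSE


dispatched = WorkUnit("dispatched-task", ("task-archive", "outbox", "done-json"))
single_edit = WorkUnit("gm-direct-edit", ("primary-artifact",))

print(preflight(dispatched).value)
print(preflight(single_edit).value)
print(preflight(single_edit, allow_degraded=True).value)
```

The check runs before any monitor is spawned, so a sparse task is turned away by a rule rather than by a confused monitor.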

This looks small, but it was a methodological turn.

It admits that not every work unit should be observed through the same supervision mode. Some work is suitable for live monitoring. Some is better as post-hoc static audit. Some only needs GM inspection. Some just needs the human to take a look.

This is similar to dispatch mode selection. You do not choose one strongest mode for everything. You create a judgment standard so the system knows which mode fits which situation.

The First Time A7sus4 Actually Caught Something

After A7sus4 was scaffolded and running, it quickly became more than “looks like it works.”

The first live audit run was a PASS: three cycles, no self-alert. That validated the basic persistent monitor frame. Later audit runs produced more detailed signals: PASS-with-deviation, where output length was below or above spec; PASS-with-anomaly, where the spec was incomplete but execution satisfied the listed requirements; and FAIL-silent-completion, where the stop-hook fired but the primary artifact never appeared.

These are exactly the problems A7 should catch: the work looks complete, but the real result is missing or distorted. A7sus4 also started classifying deviations instead of only saying pass or fail.

If a task produces no artifact, that is execution failure. If the artifact exists but exceeds the word cap, that is deviation. If the spec says “six sections” but lists seven items, and the Agent satisfies all seven listed items, the problem is not that the Agent failed; the spec is inconsistent. If one wave keeps drifting in length and later gets corrected, A7sus4 can see the streak breaking.
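The classification rules above can be sketched as a small decision function. This is an illustration under assumptions, not A7sus4's real logic. The `Observation` fields, the plain `FAIL` label, and the order in which anomaly is checked before deviation are all my own choices for the sketch. The three named verdicts come from the audit runs described earlier.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Observation:
    artifact_exists: bool
    word_count: Optional[int] = None           # None when nothing was measured
    word_min: Optional[int] = None
    word_max: Optional[int] = None
    spec_declared_items: Optional[int] = None  # e.g. spec says "six sections"
    spec_listed_items: Optional[int] = None    # e.g. spec actually lists seven
    listed_items_satisfied: bool = True


def classify(obs: Observation) -> str:
    """Map one completed work unit to an audit verdict."""
    # Completion was reported but the primary artifact never appeared.
    if not obs.artifact_exists:
        return "FAIL-silent-completion"
    # Hypothetical label: the artifact exists but misses listed requirements.
    if not obs.listed_items_satisfied:
        return "FAIL"
    # The spec contradicts itself; the Agent did what was listed.
    if (
        obs.spec_declared_items is not None
        and obs.spec_listed_items is not None
        and obs.spec_declared_items != obs.spec_listed_items
    ):
        return "PASS-with-anomaly"
    # The artifact is fine in substance but outside the length bounds.
    if obs.word_count is not None:
        too_short = obs.word_min is not None and obs.word_count < obs.word_min
        too_long = obs.word_max is not None and obs.word_count > obs.word_max
        if too_short or too_long:
            return "PASS-with-deviation"
    return "PASS"


print(classify(Observation(artifact_exists=False)))
print(classify(Observation(True, word_count=1500, word_max=1200)))
print(classify(Observation(True, spec_declared_items=6, spec_listed_items=7)))
```

Each branch points at a different owner: the executing Agent, the spec author, or nobody at all.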

This is not what a normal monitor does. A normal monitor reports red, yellow, or green. A7 reports where the red is: execution error, incomplete spec, fuzzy task boundary, or systemic trend. It asks whether the issue needs immediate repair or belongs in team-review as a methodology correction.

That is why I call A7 an auditor, not merely a monitor.

The Methodology That Grew Out of A7

A7’s evolution can be condensed into a methodology for building auditing Agents.

First, do not start from the role name. Start from failure data.

If you start by saying “I need an auditing Agent,” you will probably write an abstract spec: monitor things, validate things, report things. None of that is wrong, but it is not grounded. A7 became clear because it accumulated real cases: silent failure, self-validation, SAA wait-mode, insufficient completion reports, skill drift, external-service footguns. Each case added a boundary, a judgment, and one more thing the Agent should not do.

Second, separate capability, lifecycle, and trigger/output.

Log Audit, Cross-Agent Validation, and Health Monitoring are capabilities. Daemon and periodic auditor are lifecycles. Role 1, Role 2, and Role 3 are operational modes, each defined by its trigger and its output. Once these axes separate, the design stops being “one huge Agent” and becomes a system that can be validated and launched in stages.

Third, the auditor must remain observe-only.

Once A7 directly repairs the Agent it audits, it loses independence. Then you would need A9 to audit A7, and A13 to audit A9, and you quickly get an Agent stack that looks impressive but solves the wrong problem. A7’s boundary must be clear: observe, report, recommend. GM and Dm handle repairs.

Fourth, a live monitor requires a signal-density preflight.

Not every task deserves live supervision. When signals are too sparse, the monitor becomes a waiter. The waiter starts inventing stories or exits early. Prompt engineering cannot fully solve this. The scenario is simply not suitable for that monitoring mode.

Fifth, you can build a production precursor instead of pretending the prototype has no responsibility.

The best part of A7sus4 is not that it implemented Role 3 first. It is that it honestly admits it is not a throwaway prototype. It performs real audit duty, accumulates real lessons, and has a retirement clause. It retires only after A7 1.x can take over and has passed a production audit cycle. That is more honest than saying “let’s just prototype,” because many prototypes end up living for a long time.

What Is A7 Trying to Solve?

In more practical language, A7 is trying to solve five things.

First, turn silent failure into visible failure.

Hooks that do not fire, inbox messages that do not appear, chains that do not continue, primary artifacts that never get created: A7 turns “the thing that did not happen” into an observable signal. Seeing an obviously wrong result is comparatively easy. Seeing the thing that should have appeared, but did not, is harder.
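One way to make an absence observable is to write down what should exist and then diff that list against the filesystem. The sketch below assumes such a list of expected relative paths is available. The function `missing_signals` and the example paths are hypothetical.

```python
import tempfile
from pathlib import Path


def missing_signals(root: Path, expected: list[str]) -> list[str]:
    """Return the expected relative paths that do not exist under root."""
    return [rel for rel in expected if not (root / rel).exists()]


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    # Simulate a task that reported completion but never wrote its artifact.
    (root / "outbox").mkdir()
    (root / "outbox" / "done.json").write_text("{}")

    expected = ["outbox/done.json", "artifacts/report.md"]  # hypothetical paths
    absent = missing_signals(root, expected)
    print(absent)
```

The result is a list of things that did not happen, which can be reported like any other finding.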

Second, turn self-validation into cross-context validation.

Dm should not always be validated by Dm. C7 should not always be validated by C7. Em should not lint only from Em’s own view. At least for key methodology, Agent scaffolds, skill contracts, and wiki consistency, another Agent with another context should look back.

Third, make team degradation measurable as trend data.

Single-task failures are visible. Slow decay is not. A7 watches the gradual changes: more corrections, thinner completions, more dispatch retries, older skill copies. These trends are signals the team should notice.
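As a rough sketch of what “measurable as trend data” could mean, the code below fits a least-squares slope to a per-cycle metric and flags it when the slope crosses a threshold. The metric series, the thresholds, and the function names are assumptions for illustration. A7's real baseline dataset is not described here.

```python
def slope(values: list[float]) -> float:
    """Least-squares slope of values against their index (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def is_degrading(
    values: list[float], threshold: float, higher_is_worse: bool = True
) -> bool:
    """Flag a metric whose trend moves in the bad direction faster than threshold."""
    s = slope(values)
    return (s if higher_is_worse else -s) > threshold


# Hypothetical per-cycle series.
corrections_per_cycle = [1, 1, 2, 2, 3, 4]        # more corrections is worse
completion_words = [900, 800, 650, 500]           # thinner completions are worse

print(is_degrading(corrections_per_cycle, threshold=0.25))
print(is_degrading(completion_words, threshold=50, higher_is_worse=False))
```

No single cycle in either series looks like a failure on its own, which is why the trend has to be computed rather than noticed.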

Fourth, keep GM from carrying all supervision inside its context.

GM remains the decision-maker, but GM should not be the only inspector. If GM has to converse, dispatch, read outputs, and audit trends, its context fills up and it misses things. A7 turns observation responsibility into artifacts so GM can judge instead of trying to remember everything.

Fifth, let methodology flow back from the operating floor.

Every PASS-with-deviation, every anomaly, and every design lesson recorded in A7sus4’s audit history can feed back into Em’s wiki, Dm’s plans, and A7’s baseline dataset. The team improves not by human memory alone, but by continuously turning operational problems into knowledge, then into mechanism.

Not Removing the Human from the Loop, but Helping the Human Look at the Right Place

The easiest misunderstanding of A7 is that it looks like a way to remove the human from the loop.

It is the opposite.

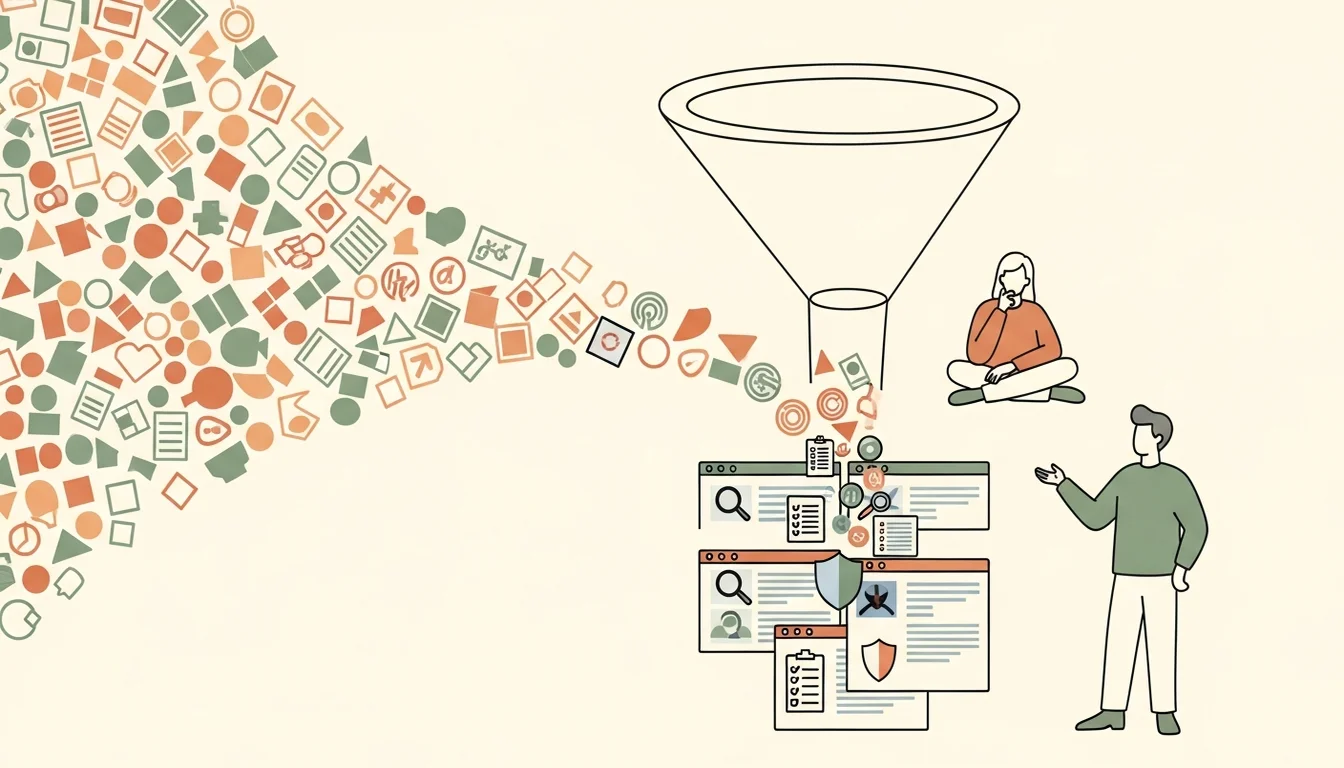

Without A7, the human has to look at everything. That can feel like control, but in practice it means being flooded by noise. A7’s goal is not to make the judgment for the human. It is to keep the human from searching for signal inside raw noise.

A7 turns low-level events into anomalies, anomalies into findings, and findings into the right channel: live bugs into team-status, methodology insights into Em’s wiki, observations for a specific dispatch into the work log. Then GM and the human do not see an ocean of logs or a flat pile of findings. They see classified, evidenced, source-linked entry points for judgment.
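The routing described above can be sketched as a lookup table plus one guard. The three channels come from the text. The type names, the channel identifiers, and the rule that a finding without evidence is rejected are my own assumptions for the sketch.

```python
from dataclasses import dataclass
from enum import Enum


class FindingKind(Enum):
    LIVE_BUG = "live-bug"
    METHODOLOGY_INSIGHT = "methodology-insight"
    DISPATCH_OBSERVATION = "dispatch-observation"


# Channel identifiers are hypothetical stand-ins for the real destinations.
ROUTES = {
    FindingKind.LIVE_BUG: "team-status",
    FindingKind.METHODOLOGY_INSIGHT: "em-wiki",
    FindingKind.DISPATCH_OBSERVATION: "work-log",
}


@dataclass(frozen=True)
class Finding:
    kind: FindingKind
    summary: str
    evidence: tuple[str, ...]  # source links, e.g. log paths or artifact paths


def route(finding: Finding) -> str:
    """Return the channel for a finding; unevidenced findings are rejected."""
    if not finding.evidence:
        raise ValueError("finding has no evidence; refuse to route it")
    return ROUTES[finding.kind]


bug = Finding(FindingKind.LIVE_BUG, "stop-hook fired, no artifact", ("logs/run-1.txt",))
print(route(bug))
```

Keeping the table explicit means the human can read, in one place, which kind of finding lands where.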

So A7 is not “supervision automation.” It is “supervision structuring.”

This returns to the earliest premise of the whole Agent Team series. I am not building a group of Agents that can simply do tasks automatically. I am building a work system where AI collaboration can be understood, handed off, inspected, and corrected.

A7 grows relatively late in that system because it needs the earlier pieces to exist first. You need Agent identity before you can have identity-loading failures. You need dispatch before you can have chain breaks. You need hooks before you can have silent failures. You need a wiki before you can have knowledge drift. You need Dm, C7, Em, and G7 each producing different kinds of outputs before cross-agent validation becomes necessary.

That is why A7 is not the starting point of the Agent Team, and it is not the ending either. It is the eye the team needs after crossing 1.0 and beginning to mature. A real AI team is not judged only by whether it can finish tasks. It is judged by whether, when something goes wrong, the system has a person or a role that can say: “this is strange, and I know where the strangeness is.”

I believe every major AI company will have its own answer for automation frameworks. OpenAI, for example, has been moving the Agents SDK toward production infrastructure such as handoffs, tracing, and sandbox execution. But if you can see the logic behind these frameworks and the gaps they leave, you will know how to adapt them to your own needs.

The maturity of an Agent Team is not that it stops making mistakes. It is that it starts being able to see its own mistakes.

And A7 is the place I created for that.

References

[1] OpenAI — The next evolution of the Agents SDK (2026-04-15; supports the SDK’s move toward production infrastructure such as harnesses, sandbox execution, and state rehydration)

[2] OpenAI Agents SDK — Handoffs (official docs; handoff context and guardrail boundaries, supporting the point that chains are not automatically safe just because handoffs exist)

[3] LangChain — AI Agent Observability: Tracing, Testing, and Improving Agents (2026; supports thread-level and multi-turn evaluation rather than single-trace inspection alone)

[4] arXiv — TraceSIR: A Multi-Agent Framework for Structured Analysis and Reporting of Agentic Execution Traces (2026-02-28; supports structured trace analysis and reporting rather than raw trace inspection alone)

[5] arXiv — AgentCollab: A Self-Evaluation-Driven Collaboration Paradigm for Efficient LLM Agents (2026-03-27; counterpoint: self-evaluation can be useful as an escalation signal, but does not replace cross-context audit)

[6] CrewAI Docs — Observability Overview (official docs; supports production monitoring, evaluation, debugging, cost tracking, and degradation tracking for agent workflows)
