In one line: A7 is not one more Agent. It is the role that lets an Agent Team start seeing its own mistakes.

The previous article ended with a question: if every Agent in the team is a heterogeneous specialist, and every output looks different, then a completion report cannot truly represent the result itself. So who decides whether the team is actually working?

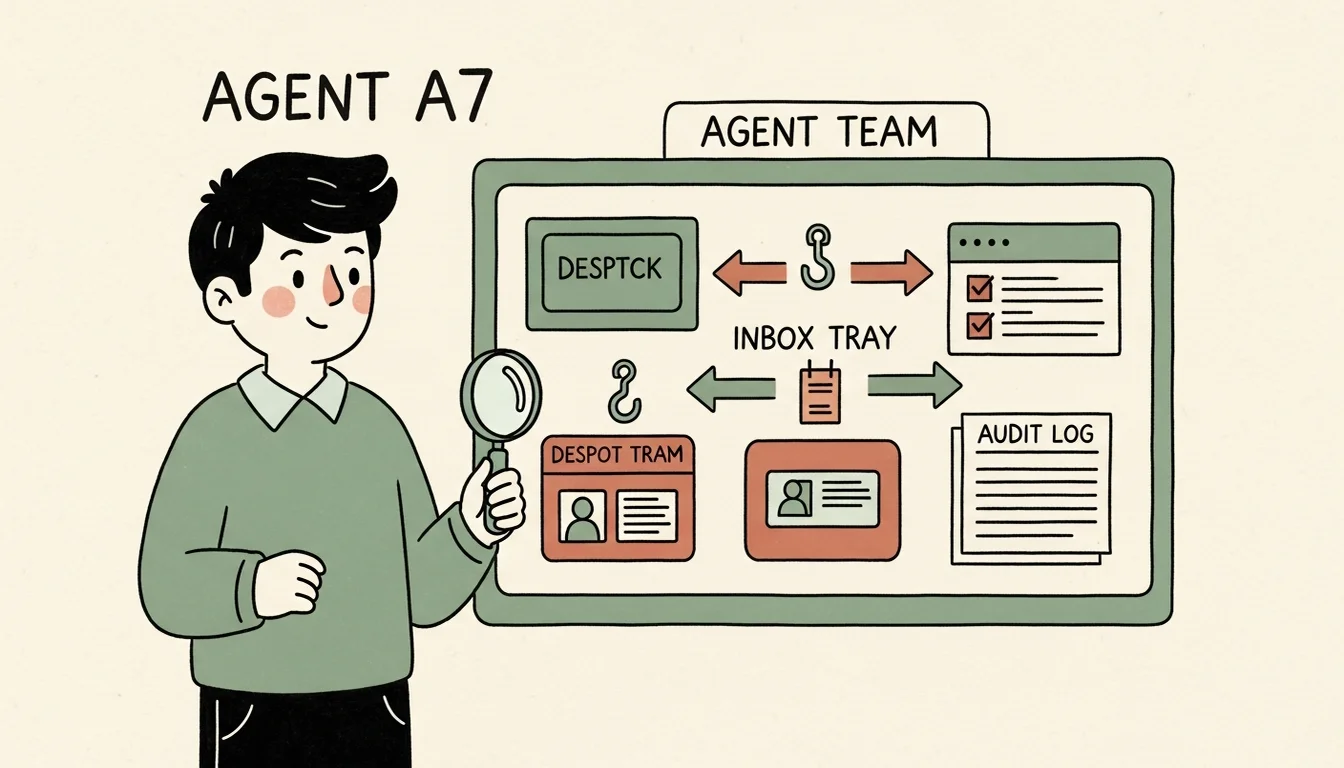

At first, I thought GM could do it. GM is the single human interface, the coordinator, and the center of the whole Agent Team. When Em finishes an ingest, GM can inspect the wiki. When Dm scaffolds a new Agent, GM can inspect the directory structure. When C7 provisions a skill, GM can inspect the catalog. That was the role I originally expected GM to play, and it matched my working style at the time: GM dispatches tasks, Agents execute in their own contexts, and GM comes back in a separate context to inspect the result. It fits the cross-audit principle nicely.

That principle says an Agent should ideally be audited by another Agent, but I kept wondering what an Auditing Agent for the Agent Team should look like. I had built Governance agents for ERP work and QA Agents for websites, and they each had very different shapes. So what should an Auditing Agent for an Agent Team be? I did not know yet. I used GM as the data collector first and watched how the team evolved.

After a while, patterns and pain points started appearing. GM can inspect a result, review a pipeline, and even do sense-making across several Agents. But GM cannot watch everything all the time. GM cannot automatically notice every silent hook failure the moment it happens. It cannot check the inbox five minutes after every dispatch. It cannot instantly tell whether a skill overwrite broke the catalog’s read-only contract. And it cannot constantly ask, as corrections accumulate across the team: is one of these Agents starting to degrade?

That looked like the moment an Auditing Agent should enter. But the problem was not just monitoring. It was this: when an Agent Team starts behaving like a team, which responsibilities should become independent instead of relying on GM and the human to notice them in passing?

A7 Started as a Validation Gap, Not a Monitor

When A7 first appeared, the name was not even clear yet. It was just a future validation agent concept in Em’s wiki, and the reason was simple: every Agent was validating itself.

Dm creates Agents, then Dm uses its own validator subagent to inspect the scaffold. C7 manages the skill catalog, then C7 runs /validate-skill on skills. Em manages the wiki, then Em runs /lint on the wiki. Everybody has validation. But seen through the cross-audit principle, the output and the validation standard still live inside the same context, so the Agent will naturally see its own output as matching its own standard. It was produced with that framework, then checked with that same framework.

This does not mean the Agent is lazy or unreliable. It is a structural limitation of the model. When you ask a model to check something it just reasoned through and generated, it tends to see consistency, not blind spots. The blind spot is exactly what caused the mistake. If the model could see it, it probably would not have generated the mistake in the first place.

So my first idea for A7 was simple: a dedicated L2 Agent for cross-agent validation.

I did not build it immediately. The team was still small. I was still the cross-auditor. GM’s context was also naturally separated from other Agents’ contexts. I did not need a whole Agent for this yet. I wanted to collect patterns and scenarios first, then design from the record.

“Record first, build later” mattered here. If I had created A7 at that moment, it probably would have become a very ordinary QA Agent: read the result, score it, write a report. That would look complete, but it would not solve the real problem.

A7 Took Shape Because of Incidents, Not Requirements

Once the Agent Team ran long enough, the problems became concrete.

The first category was silent failure.

In the dispatch article, I talked about several kinds of silent failure: hook format errors, launching from the wrong directory, stop-hooks that never wrote to the inbox, and so on. The task looks complete. The Agent replies. Files change. The session ends normally. Everything looks fine, except the follow-up event never happens. These errors are painful because they are not a red light. They are a light that never turned on, and you have to know that the light was supposed to turn on before you can notice the problem.

The second category was chain debug ownership.

When a pipeline runs Em -> C7 -> Dm, and Em completes but C7 is never triggered, whose fault is it? Em’s? C7’s? GM’s? The hook’s? The task file schema’s? Each Agent only sees its local slice. GM can investigate afterward, but GM was not designed to stand guard over every work chain. That created a zone nobody truly owned.

The third category was team degradation.

An Agent Team does not only break inside one task. It can decay slowly. Completion reports become thinner. Corrections pile up around one Agent. A local skill copy drifts farther from the catalog master. External service errors appear again and again, but not loudly enough to demand attention. This kind of operational drift does not feel like a bug at first.

Put together, these problems changed A7’s role from “validator” into “auditor.” It went beyond the scope of a normal monitor. A monitor sounds like something that watches processes and logs, then alerts when something turns red. That is part of A7, but not enough. I needed A7 to examine whether the Agent Team’s behavior had drifted away from the design intent. That requires AI judgment: did this dispatch actually complete? Did it complete according to the original intent? Is this deviation an execution defect, or is the spec incomplete? Does one Agent’s output have implications for another Agent? A shell script alone cannot answer those questions.

How I Split A7: From One Line to Three Axes

At first, I split A7 into a few functions the way a normal spec would: Log Audit, Cross-Agent Validation, Health Monitoring. I had recently touched all three, and they were not wrong. They just were not enough.

Going back to my three-layer methodology model, a method is not grounded until it has scenarios and playbooks. A function only answers “what does it look at?” It does not answer “when does it run?”, “who triggers it?”, or “where does the result go?” If those questions stay mixed together, A7 becomes a universal audit creature: it watches everything, reports everything, and dumps everything into one report until GM’s context is stuffed and humans stop reading.

So I split A7 into three axes.

The first axis is capability: what can it detect?

- Log Audit: inspect execution logs, hooks, dispatch, inbox, and outbox for operational problems.

- Cross-Agent Validation: use an independent context to check other Agents’ outputs, especially Dm, C7, and Em, where self-validation is tempting.

- Health Monitoring: watch degradation over time: correction trends, skill drift, completion report quality, dispatch volume spikes.

The second axis is lifecycle: how does it run?

- Long-running daemon: live inside Overmind, watch real-time signals, and catch chain breaks, hook failures, process crashes, and dispatch spikes.

- Periodic auditor: triggered by /team-review or a fixed cadence for deeper statistical and trend audits.

The third axis is operational role, which became three roles:

- Role 1: Continuous Dynamic Detection watches live dispatch, inbox, and hook events, then outputs to a live operations queue.

- Role 2: Periodic / Manual Static Audit checks architecture and methodology artifacts, then outputs reports and wiki knowledge.

- Role 3: Dispatched Event Monitor is dispatched by GM or another Agent to observe one active work unit from the side, then outputs its own work log.
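To make the separation concrete, here is a minimal sketch of the three axes as independent types. The class and field names are illustrative assumptions, not A7's actual schema. The point is only that capability, lifecycle, and role stay separate, and that each role carries its own trigger and output channel.

```python
from dataclasses import dataclass
from enum import Enum


class Capability(Enum):
    """Axis 1: what A7 can detect."""
    LOG_AUDIT = "log_audit"
    CROSS_AGENT_VALIDATION = "cross_agent_validation"
    HEALTH_MONITORING = "health_monitoring"


class Lifecycle(Enum):
    """Axis 2: how A7 runs."""
    LONG_RUNNING_DAEMON = "long_running_daemon"
    PERIODIC_AUDITOR = "periodic_auditor"


@dataclass(frozen=True)
class Role:
    """Axis 3: an operational role with its own trigger and output channel."""
    name: str
    trigger: str
    output_channel: str


# Hypothetical encoding of the three roles described in the list above.
ROLES = (
    Role("continuous_dynamic_detection",
         trigger="live dispatch, inbox, and hook events",
         output_channel="live operations queue"),
    Role("periodic_or_manual_static_audit",
         trigger="/team-review or a fixed cadence",
         output_channel="reports and wiki knowledge"),
    Role("dispatched_event_monitor",
         trigger="dispatch by GM or another Agent",
         output_channel="own work log"),
)
```

Because every role names a distinct output channel, nothing forces all findings into one report.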

These axes mattered because they turned A7 from a vague “auditing Agent” into a system that could be decomposed, built in slices, and validated in slices.

The first methodological move in A7 was separating “what it observes” from “how it operates.” Otherwise capability, lifecycle, trigger, and output channel all collapse into one design. Every function looks reasonable on paper, but the whole thing will not run.

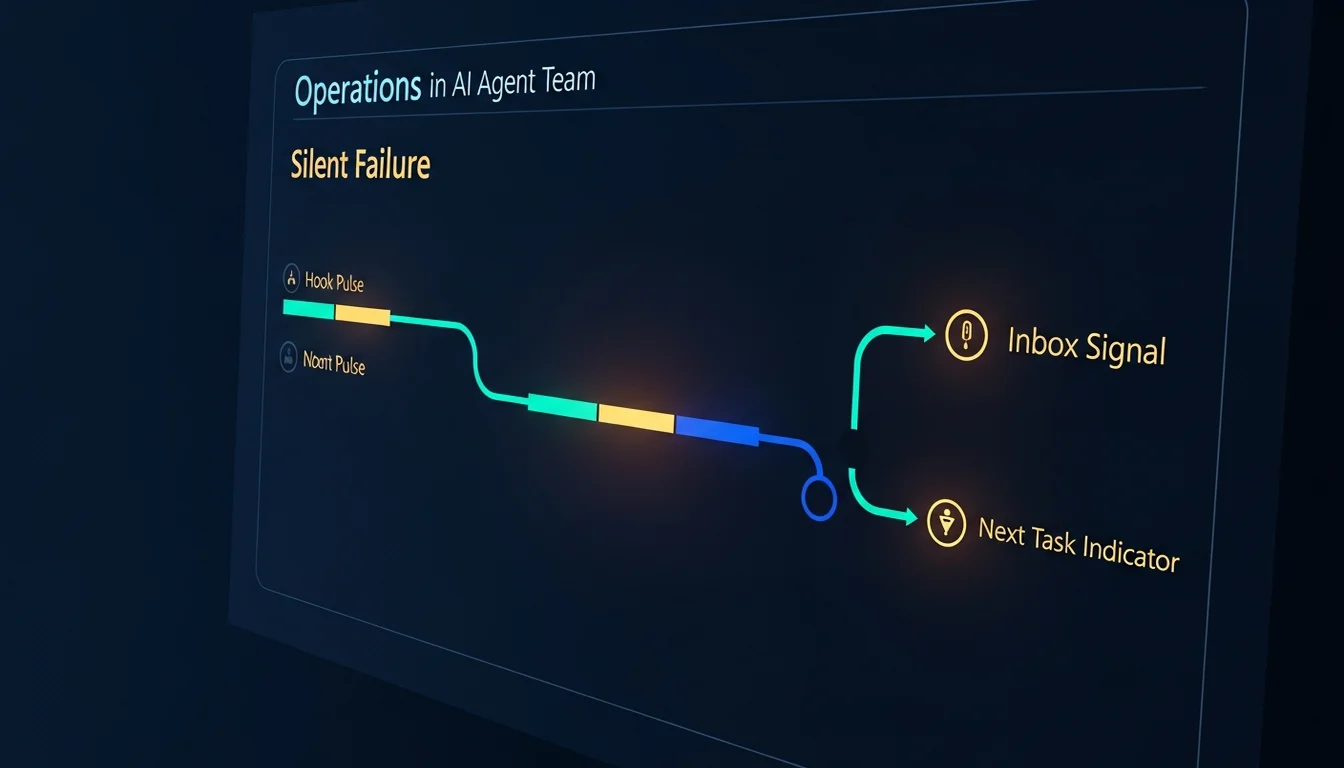

A7 Solves “Who Knows the Error Happened”

A7 is not primarily about preventing errors. It is about shortening the distance from error to knowledge, and from knowledge to mechanism. It also shortens the time from error to repair.

For a hook failure, A7 cannot guarantee hooks never break. But it can notice that a task was archived without a corresponding inbox JSON.
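A check like this could be sketched as a set difference between two directories. The layout is an assumption for illustration: archived tasks as `<task_id>.md` files and stop-hook reports as `<task_id>.json` files. The real naming scheme may differ.

```python
from pathlib import Path


def archived_without_inbox(archive_dir: Path, inbox_dir: Path) -> list[str]:
    """Return IDs of archived tasks that have no matching inbox JSON.

    Assumes (hypothetically) that both directories name files by task ID.
    """
    archived = {p.stem for p in archive_dir.glob("*.md")}
    reported = {p.stem for p in inbox_dir.glob("*.json")}
    # Anything archived but never reported is the "light that never turned on".
    return sorted(archived - reported)
```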

For a chain break, A7 cannot guarantee the next Agent will be triggered. But it can notice that the task specified a chain and no next task file appeared within the expected window.
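As a rough sketch, the chain check is a timeout on an expected follow-up. The `ChainStep` record, the set of observed follow-ups, and the five-minute default window are all assumptions made for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class ChainStep:
    task_id: str
    next_agent: Optional[str]  # None means the task declared no chain
    completed_at: datetime


def find_chain_breaks(
    steps: list[ChainStep],
    next_tasks_seen: set[tuple[str, str]],
    now: datetime,
    window: timedelta = timedelta(minutes=5),  # assumed expected window
) -> list[ChainStep]:
    """Return completed steps whose declared next task never appeared in time."""
    breaks = []
    for step in steps:
        if step.next_agent is None:
            continue  # no chain declared, nothing to wait for
        if (step.task_id, step.next_agent) in next_tasks_seen:
            continue  # the follow-up task file showed up
        if now - step.completed_at > window:
            breaks.append(step)  # overdue: report it, do not repair it
    return breaks
```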

For a skill override anti-pattern, A7 may not stop an Agent from editing SKILL.md. But it can notice an override fence appearing inside a protected read-only master area.
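One way to picture this check is a read-only scan of the master area. The marker syntax below (an HTML comment starting with `override`) is a hypothetical stand-in, since the actual fence format is not shown here.

```python
import re
from pathlib import Path

# Hypothetical fence marker; replace with the team's real override syntax.
OVERRIDE_FENCE = re.compile(r"^<!--\s*override\b", re.IGNORECASE)


def find_override_fences(master_root: Path) -> list[tuple[str, int]]:
    """Return (relative path, line number) for each fence found in a master SKILL.md."""
    hits = []
    for skill_file in sorted(master_root.rglob("SKILL.md")):
        text = skill_file.read_text(encoding="utf-8")
        for line_no, line in enumerate(text.splitlines(), start=1):
            if OVERRIDE_FENCE.match(line.strip()):
                hits.append((str(skill_file.relative_to(master_root)), line_no))
    return hits
```

The scan only reads files, which keeps it inside the observe-only boundary.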

For an external-service footgun, A7 will not fix OAuth, refresh a token, or reconnect a Telegram bot. But it can notice repeated unauthorized, token expired, and refresh failed symptoms across multiple Agents’ stop-hook outputs, then promote that into a candidate issue.
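The promotion step might look like the sketch below: group symptom matches by Agent and promote only what shows up in more than one Agent. The input shape (Agent name mapped to stop-hook output lines) and the `min_agents` threshold are assumptions.

```python
from collections import defaultdict

# Symptom phrases named in the paragraph above, matched case-insensitively.
SYMPTOMS = ("unauthorized", "token expired", "refresh failed")


def candidate_issues(
    outputs: dict[str, list[str]], min_agents: int = 2
) -> dict[str, list[str]]:
    """Map each symptom to the Agents showing it, kept only if enough Agents match."""
    agents_by_symptom: dict[str, set[str]] = defaultdict(set)
    for agent, lines in outputs.items():
        for line in lines:
            lowered = line.lower()
            for symptom in SYMPTOMS:
                if symptom in lowered:
                    agents_by_symptom[symptom].add(agent)
    return {
        symptom: sorted(agents)
        for symptom, agents in agents_by_symptom.items()
        if len(agents) >= min_agents
    }
```

A single Agent hitting `token expired` once stays noise. The same symptom across several Agents becomes a candidate issue.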

If I rely on myself to catch these, I usually find them only after something breaks. Then I go back through logs, commits, timestamps, and guesses. A7 is designed to catch the signal when it happens and leave a trace. Even if I cannot fix it immediately, it can surface during /team-review.

That is why A7’s observe-only scope is simple: observe, report, recommend. It does not dispatch other Agents. It does not repair files. It does not modify other Agents’ outputs.

That sounds conservative, but the boundary is intentional. If A7 audits and fixes in the same context, it becomes another self-validation loop. The auditor and the actor share one context, and the auditor starts rationalizing its own changes. That is exactly the problem A7 was created to solve.
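In code terms, the boundary could be as small as this sketch: the only write path the auditor has is its own append-only log. The `Finding` fields and the JSON Lines format are illustrative assumptions, not A7's real report schema.

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass(frozen=True)
class Finding:
    source_agent: str    # whose output or log was observed
    observation: str     # what was seen
    recommendation: str  # suggested next step for GM or the human


def report(findings: list[Finding], audit_log: Path) -> int:
    """Append findings to the auditor's own log and return how many were written.

    Nothing here dispatches an Agent or edits another Agent's files.
    """
    with audit_log.open("a", encoding="utf-8") as fh:
        for finding in findings:
            fh.write(json.dumps(asdict(finding)) + "\n")
    return len(findings)
```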

A7 is not the designer, the contractor, or the homeowner. It is closer to an external inspection team. It can point to water stains, wiring issues, and cracks in the ceiling. But whether to rewire, tear down the wall, or pause work is still up to GM and the human.

This is where this article stops: A7 begins as a validation gap and becomes an observe-only Auditing Agent. It is not here to make the team stop making mistakes. It is here to make errors visible, classify them, and help GM decide whether the next step is live repair, team-review, or methodology correction.

That leaves the next problem: if the full A7 spec is not ready yet, but Role 3 already has production pressure, should I wait for the complete A7 or build a production precursor first? The next article is about A7sus4.
