
In one line: Persistence is not about keeping an Agent running forever. It is about letting it stop, move across environments, wake up, and still know who it is, where to find state, and how to return its work.

At 11:22 p.m., I gave Claude Code a /schedule command. I asked it to wake up a remote agent at 3:30 a.m. because my 5-hour quota would reset then, read Mitchell Hashimoto’s statement about Ghostty leaving GitHub, search for recent GitHub instability data, collect 10 to 15 sources, write a news draft, then push the research notes and draft to a branch so I could pull it down in the morning and review it.[1][2]

That sounds like an ordinary scheduled task. But it touches the core problem of the next stage of Agent Team: if an Agent is not in front of me, not in the same session, and maybe not even on the same machine, how do I make its work easy to hand off, trace, and verify?

Until now, most of our Agent Team persistence discussion has been about memory persistence. The focus was the three-layer State, Wiki, and mem0 architecture: State records “what are we doing,” Wiki records “what do we know,” and mem0 records “why did we decide this.” That architecture solves the problem of “can an Agent recover context after changing sessions?” It is the first layer of persistence. But once you start scheduling remote agents to work overnight, running SDK-based agents in cloud environments, or letting A7sus4 perform live audits in Overmind, you hit the next problem: persistent memory does not imply persistent execution.[3]

Layer One: Schedule the Task, but Remember It Is Not Local

The first thing to understand about /schedule is that it is not a local cron job. /schedule is an Anthropic cloud routine running in a remote sandbox. It has its own git checkout, tools, and session context. It cannot see my local directory. It cannot see uncommitted or unpushed files on my machine. It also does not inherit the half-finished context from my conversation with GM.[1][2]

Some of that memory gap can be handled by the persistence design we discussed earlier, but recognizing the environment boundary matters. If you treat a remote agent as an extension of the local session, you will probably write broken prompts: “do what we just discussed,” “put it in that XXX folder,” or “follow the usual format.” To a remote agent, those prompts are unreadable magic. When it wakes up, there is no “just now,” no “usual,” and no “that folder.”
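A small lint pass over the prompt can catch this kind of wording before the task is scheduled. The sketch below is only an illustration: the phrase list and the function name `find_context_leaks` are my own assumptions, not part of Claude Code or /schedule.

```python
import re

# Hypothetical phrase list: wording that only makes sense inside the
# local session where the prompt was written. Extend it for your own habits.
CONTEXT_DEPENDENT_PATTERNS = [
    r"\bjust discussed\b",
    r"\bas (?:we|I) said\b",
    r"\bthe usual\b",
    r"\bthat \w+ folder\b",
    r"\bearlier conversation\b",
]


def find_context_leaks(prompt: str) -> list[str]:
    """Return the phrases in `prompt` that assume shared session context."""
    leaks = []
    for pattern in CONTEXT_DEPENDENT_PATTERNS:
        for match in re.finditer(pattern, prompt, flags=re.IGNORECASE):
            leaks.append(match.group(0))
    return leaks
```

An empty result does not prove the prompt is self-contained. It only means none of the known risky phrases appear.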

So the task prompt has to become a complete work specification: who you are, what this repo is, which sources to read, which research directions to search, where the research notes should go, where the draft should go, which documents define the writing style, how to commit, how to push, and what to report if it fails. In other words, remote agent persistence does not rely on session memory. It relies on a self-booting prompt, or a prompt that tells the Agent where to read the artifacts you have committed and pushed, and how to operate from there.

This is the same idea as Context Engineering from earlier articles, but in a different shape. A local Agent can reconstruct state from CLAUDE.md, wiki pages, mem0, and current context. A remote agent has to work through the prompt, git repo, and branch it returns. There is no shared filesystem between my machine and the remote sandbox. The one stable handoff surface is Git.

So the completion condition for a Remote Agent is not “write files locally.” It is “write files inside the remote sandbox, commit them to the right branch, push them back to GitHub, and let me git fetch and inspect the result in the morning.” That sounds like a detour, but the handoff surface is actually clean once we stop pretending remote and local agents are the same thing.
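The morning check can be sketched as a question to the remote: did the branch actually arrive? This is a minimal sketch, assuming the remote is named `origin` and that the branch name was fixed in the task prompt. The helper names are hypothetical. The only external call is `git ls-remote --heads`, which prints one `<sha><TAB><ref>` line per matching branch.

```python
import subprocess


def parse_ls_remote_heads(output: str) -> dict[str, str]:
    """Map branch name -> commit SHA from `git ls-remote --heads` output."""
    heads = {}
    prefix = "refs/heads/"
    for line in output.splitlines():
        if not line.strip():
            continue
        sha, ref = line.split("\t", 1)
        if ref.startswith(prefix):
            heads[ref[len(prefix):]] = sha
    return heads


def branch_was_pushed(branch: str, remote: str = "origin") -> bool:
    """Ask the remote whether `branch` exists, i.e. whether the push landed."""
    result = subprocess.run(
        ["git", "ls-remote", "--heads", remote, branch],
        capture_output=True,
        text=True,
        check=True,  # raise if git itself fails (no network, bad remote)
    )
    # Exact-name check: ls-remote patterns can also match nested branch names.
    return branch in parse_ls_remote_heads(result.stdout)
```

If the branch is there, `git fetch origin` followed by `git diff --name-only main...origin/<branch>` lists what the remote agent changed, assuming `main` is the base branch.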

That is the first principle of execution persistence: when work crosses environments, do not take anything local for granted. Share artifacts.

Layer Two: Persist Identity, Not Just a Longer Prompt

Remote routines solve the problem of “wake up for this task at a future time.” They do not solve the problem of “let this role exist over time.” This is the distinction A7sus4 exposed. Temporary subagents easily understand themselves as waiters because they have no directory of their own, no CLAUDE.md, no state, no work log, and no continuous lifecycle. A7sus4’s fix was not a longer prompt. It was turning the role into a persistent process so identity itself had a place to continue. That gives us the second principle of persistence: if you want an Agent to carry a long-term responsibility, do not give it only a prompt. Give it identity, a working directory, state, and history.[6][7]

Layer Three: Not Everything Deserves Live Monitoring

The third principle also comes from A7sus4: not everything deserves live monitoring. As the previous article argued, low-signal tasks are bad candidates for live supervision. When there are not enough observation points, the Agent becomes a waiter and starts trying to interpret silence as meaning. A long-run system needs the same signal-density preflight. It should decide whether a task deserves live monitoring, post-hoc audit, GM inspection, or just a quick human look. Put simply: persistence is not constant watching. It is leaving the right observation at the right time scale.
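A signal-density preflight could look roughly like the sketch below. Everything specific in it is an assumption made for illustration: the `TaskProfile` fields, the thresholds, and the rule that judgment-heavy work goes to GM inspection. The real criteria would come from the team's own experience.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    expected_events_per_hour: float  # observable checkpoints the task emits
    needs_judgment: bool             # does the result require a design decision?
    duration_hours: float


def choose_observation_mode(task: TaskProfile) -> str:
    """Pick an observation mode. All thresholds are illustrative assumptions."""
    if task.needs_judgment:
        return "gm_inspection"
    if task.expected_events_per_hour >= 6 and task.duration_hours >= 1:
        # Enough observation points that a live observer has something to read.
        return "live_monitoring"
    if task.duration_hours >= 1:
        # Long but quiet: inspect the artifacts afterwards instead of watching.
        return "post_hoc_audit"
    return "quick_human_look"
```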

Layer Four: Low Risk Can Be Automated, High Risk Needs Challenge

The fourth principle is the boundary of automation. It may sound abstract at first, but the idea is simple: decide how far automation should go. Low-risk self-maintenance can be automatic: wiki deduplication, skill drift detection, completion artifact checks, and recurring error-pattern consolidation. If these go wrong, they are usually easy to revert.

High-risk architecture evolution should not be fully automatic, at least not yet. Maybe it can be in six months or a year. But if a Remote Agent wants to change Agent boundaries, dispatch modes, Memory Extraction Rules, or audit standards, that should go through a human challenge or an independent cross-audit agent. You can let an Agent find you when judgment is needed. Do not pretend the judgment itself can already be fully delegated.
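The automation boundary can be written down as an explicit gate. In this sketch the category names come from the two lists above, while the identifiers, the return values, and the rule that unclassified changes fail closed are my own assumptions.

```python
# Low-risk self-maintenance: usually easy to revert if it goes wrong.
LOW_RISK = {
    "wiki_deduplication",
    "skill_drift_detection",
    "completion_artifact_check",
    "error_pattern_consolidation",
}

# High-risk architecture evolution: needs a human or cross-audit challenge.
HIGH_RISK = {
    "agent_boundaries",
    "dispatch_modes",
    "memory_extraction_rules",
    "audit_standards",
}


def gate_change(kind: str) -> tuple[str, str]:
    """Return (action, reason) for a proposed change of the given kind."""
    if kind in LOW_RISK:
        return ("auto_apply", "low risk, easy to revert")
    if kind in HIGH_RISK:
        return ("needs_challenge", "architecture-level change")
    # Assumption: anything unclassified is treated as high risk.
    return ("needs_challenge", "unclassified change, failing closed")
```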

My Persistence Plan

If I collapse the current plan into one picture, it is not one system. It is four connected layers: memory persistence, task persistence, identity persistence, and observation persistence. Earlier Agent Team articles already covered parts of this. Now we can look at them together. A long-run Agent Team does not become strong because one layer suddenly improves. It becomes strong when the four layers connect: when an Agent wakes up, it can find state; while it works, it has clear boundaries; when it finishes, it leaves artifacts; when something goes wrong, someone can see it; and when the next run starts, those signals can be loaded again.

The first layer is memory persistence. This is the State, Wiki, mem0, and QMD design from Article 29. State uses Git to record “where are we now.” Wiki uses Git to record “what do we know.” mem0 uses Qdrant Cloud to record “why did we decide this.” QMD lets the wiki become search-first instead of stuffing every page into context. The point is not making the Agent remember everything. The point is teaching it where to look: State for “now,” Wiki for “what,” mem0 for “why,” and QMD for “which related pages exist.”

But memory alone is not enough for long-run operation. If the Agent can recover information after waking up, that only means it has something to read. It does not mean the task can complete across time. It does not mean a remote execution environment knows how to return results. It also does not mean a long-term role naturally maintains its own identity. Memory persistence solves “what do I know when I come back.” Task persistence solves “what did I hand off when I left.”

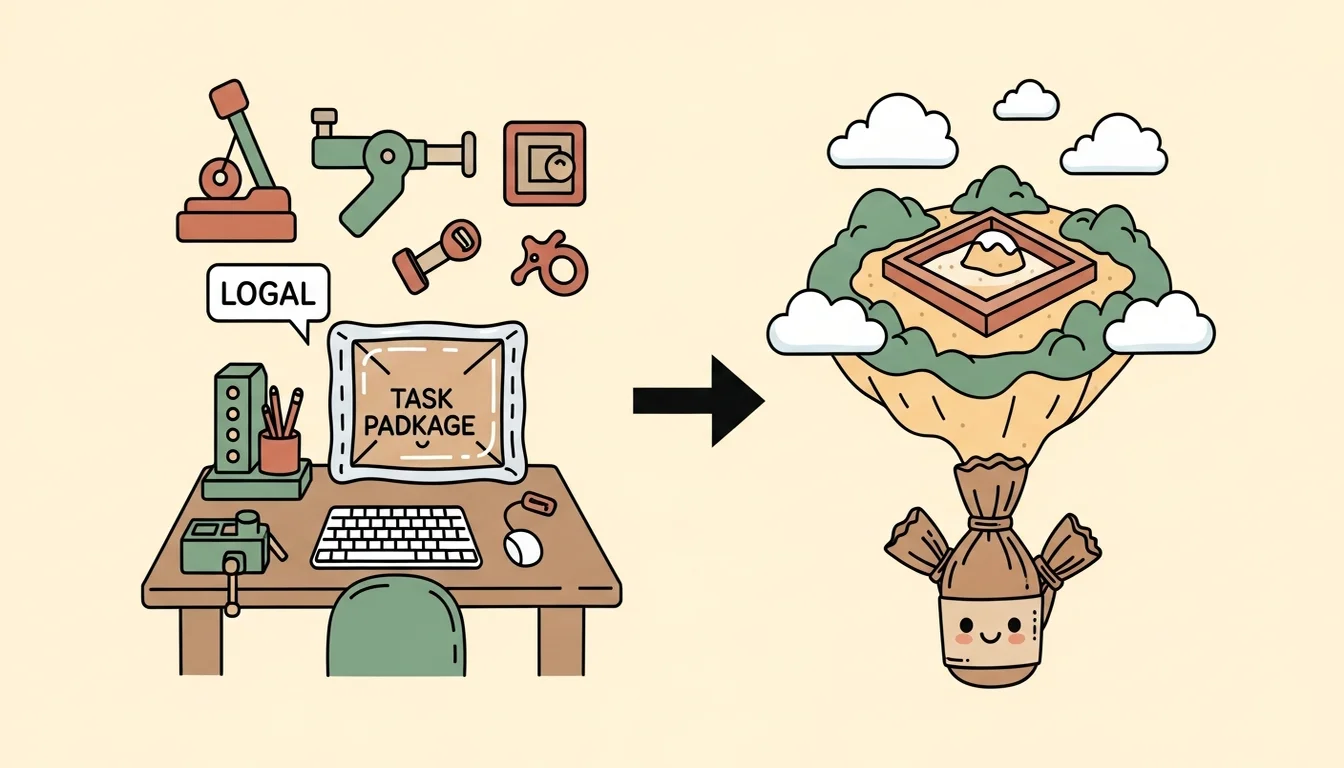

The second layer is task persistence. A local session hands work off through repo artifacts, hooks, inbox/outbox, and git diff. A remote routine hands work off through a self-contained prompt, an independent sandbox, a git branch, and a completion report. These cannot be treated as the same thing. In local work, the filesystem is shared. GM can dispatch Em to edit the wiki, C7 can provision a skill into an agent directory, and A7 can inspect outbox and task archives. A remote routine does not have that state. It starts from the world it can clone from the git repo, then sends results back through git push. When these Agents live in separate cloud environments, that boundary matters even more.

So the remote routine prompt has to be a small HANDOFF.md. It is not just “write me a news article.” It needs task background, goal, sources, output paths, writing style, acceptance criteria, commit/push flow, and failure reporting. That is not prompt-engineering fussiness. It is the basic condition of execution persistence. A remote agent that wakes up at 3:30 a.m. does not have “that conversation from earlier.” It only has the artifact you gave it when you scheduled the task.
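One way to hold myself to that is to treat the prompt as a structured record and refuse to render it while any section is empty. This is a minimal sketch: the `HandoffSpec` class and its field names are hypothetical and simply mirror the list above. It is not a format that /schedule requires.

```python
from dataclasses import dataclass, fields


@dataclass
class HandoffSpec:
    background: str
    goal: str
    sources: str
    output_paths: str
    writing_style: str
    acceptance_criteria: str
    commit_push_flow: str
    failure_reporting: str

    def missing(self) -> list[str]:
        """Names of sections that are empty or whitespace only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Render the spec as one self-contained prompt, or fail loudly."""
        gaps = self.missing()
        if gaps:
            raise ValueError(f"handoff is not self-contained, missing: {gaps}")
        sections = []
        for f in fields(self):
            title = f.name.replace("_", " ").title()
            sections.append(f"## {title}\n{getattr(self, f.name).strip()}")
        return "\n\n".join(sections)
```

The rendered text is what gets pasted into the scheduled task. The check cannot judge whether a section is good, only whether it exists.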

This also changes how I see Git. Earlier, Git was mostly the synchronization mechanism for State and Wiki inside Agent Team. Now it becomes the recovery mechanism for remote execution. A branch is how a remote agent returns work to the local world. Commit history is audit material before human review. Whether push succeeds becomes the signal that the task actually crossed the environment boundary. For long-run systems, Git is not only version control. It is the interface across time and environment. That is why the earlier GitHub instability news produced so much resonance.

The third layer is identity persistence. If an Agent is only scheduled once, it can complete the job with a complete prompt. But if an Agent has to carry a long-term responsibility, it needs more than a prompt. It needs a place where identity lands. This is the A7sus4 lesson: when an Agent needs to monitor continuously, accumulate a work log, retain state, and adjust its audit cycle, it cannot be just a temporary subagent. It needs its own directory, CLAUDE.md, skills, state, logs, and lifecycle.

Right now I see two forms. One is scheduled identity: a remote routine that rebuilds identity from the prompt on every run. This works for one-off research, periodic scans, and fixed-format reports because the task can be fully specified in the prompt. The other is persistent identity: A7sus4-style identity that exists in a local process and repo artifacts. This fits work that needs trend accumulation, live observation, and history writing because the role itself needs to grow across multiple tasks.

Neither form is inherently more advanced. The question is which persistence cost is reasonable. Remote routines are nice because they do not occupy a local process. They run when the time comes. Their weakness is that every run has to rebuild the world from the prompt. Persistent processes keep identity and state continuous, but they force you to manage lifecycle, crash policy, heartbeat, rate limits, watchdogs, and other operational details. I do not choose one side globally. I let each form own the work it is good at.

The fourth layer is observation persistence. Once Agent Team becomes long-run, the most dangerous failure is not a single task failing. It is failure happening without being seen. A finding does not become knowledge. A hook does not fire. A branch does not push. An artifact never appears. Completion reports get thinner. Skill copies drift. None of these explode immediately, but they slowly move the whole Agent Team away from its design. Observation persistence is not about “never be wrong.” It is about “when something goes wrong, make it visible instead of letting it disappear silently.”
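The simplest version of "make it visible" is checking that the artifacts a task promised actually exist. The sketch below assumes each task declares its expected output paths up front. The function name and the rule that an empty file counts as missing are my own choices for illustration.

```python
from pathlib import Path


def missing_artifacts(root: Path, expected: list[str]) -> list[str]:
    """Return the expected artifact paths that are absent or empty under `root`."""
    findings = []
    for rel in expected:
        path = root / rel
        # A zero-byte file is treated like a missing one: the task "finished"
        # but left nothing to review.
        if not path.is_file() or path.stat().st_size == 0:
            findings.append(rel)
    return findings
```

A non-empty result is a finding to report, not something the checker should repair by itself.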

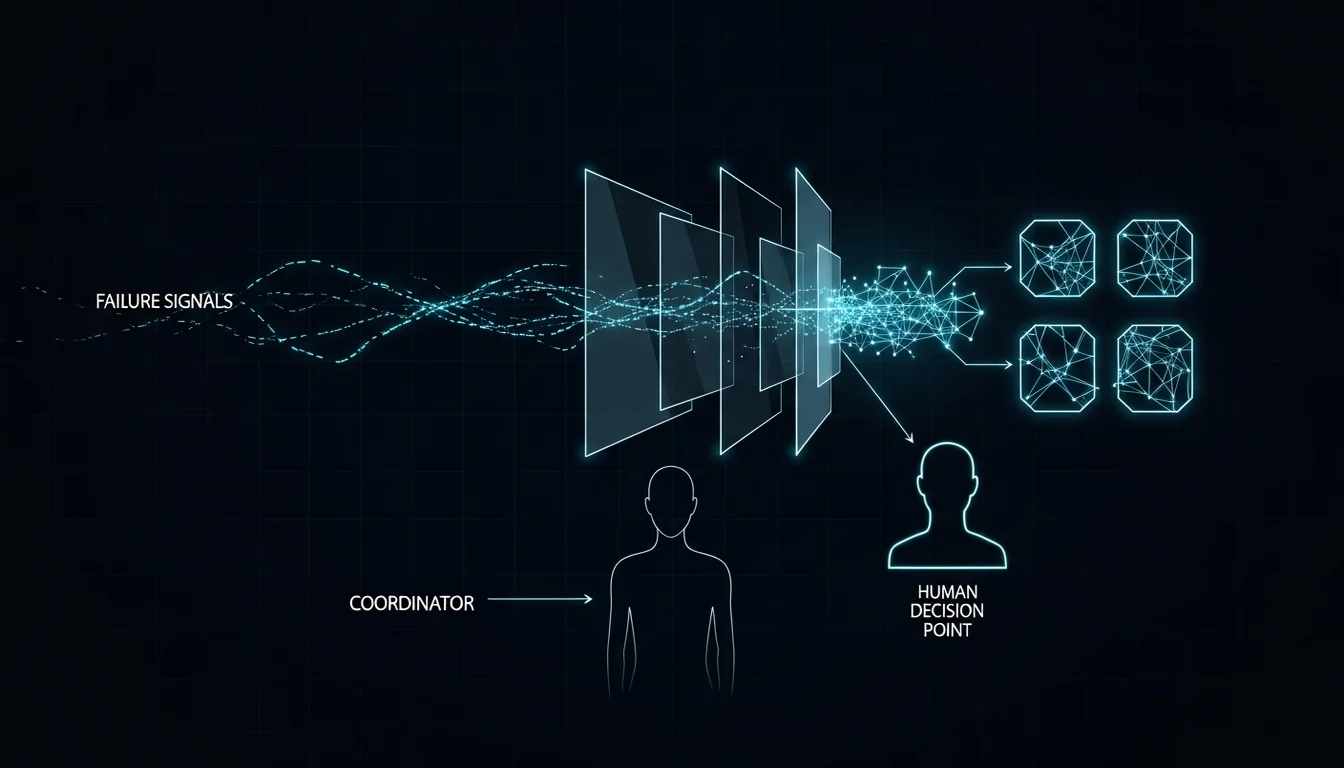

That is why A7’s observe-only scope matters here. It sees silent failures, chain breaks, skill drift, and team degradation, but it does not directly fix them. It reports them so correction returns to GM, Dm, and the human decision chain. A7 filters low-level event noise into findings and sends those findings to the right channel: live bugs to team-status, methodology insights to Em wiki, observations about a specific dispatch to the work log, and architecture-level issues to the next human review.[4][5]
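That routing can be sketched as a plain lookup. The four destinations come from the paragraph above. The finding kinds, the channel identifiers, and the fallback to human review for unknown kinds are assumptions made for illustration.

```python
from dataclasses import dataclass

# Finding kind -> reporting channel (identifiers are hypothetical).
CHANNELS = {
    "live_bug": "team-status",
    "methodology_insight": "em-wiki",
    "dispatch_observation": "work-log",
    "architecture_issue": "human-review",
}


@dataclass(frozen=True)
class Finding:
    kind: str
    summary: str


def route(finding: Finding) -> str:
    """Observe-only: decide where to report a finding. Never applies a fix."""
    # Assumption: a kind nobody has classified yet goes to the next human review.
    return CHANNELS.get(finding.kind, "human-review")
```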

When these four layers connect, Agent Team starts to resemble the long-run shape I want. Before sleep, I can use a remote routine for a clearly packaged research task and recover the work through a branch in the morning. Locally, I can use a persistent process to observe high-signal work units instead of pretending a temporary prompt can supervise everything. Execution results can become findings. Findings can flow back into wiki and design. Places that truly require judgment stop in front of GM and the human instead of hiding inside automation and continuing on their own.

The Point of Persistence Is Not Removing the Human

I know Agent Team persistence is easy to misunderstand as “make AI run forever without people.” That is not what I want. There are plenty of ways to make things run without a person staring at them. AWS, GCP, n8n, and Zeabur already provide all kinds of services for that. You do not need to reinvent the whole industry, ignore decades of operational practice, and imagine that inexperienced you plus AI will beat experienced teams plus AI.

What I want is a framework that keeps working, operating, and delivering even as these cloud services and environments shift. It should mean: when an Agent works outside my sight, its work still has boundaries; when AI continues across sessions, machines, and time, state is still recoverable; when AI fails, error information becomes a visible signal that can be discussed and written back into the system instead of disappearing into a log; and when AI reaches a place that truly requires judgment, it knows to stop and hand the problem back to GM and the human.

So persistence is not about taking the human out of the loop. It is about keeping the human out of the wrong loop.

I do not need to sit at my computer at 3:30 a.m. watching a remote agent research sources. I do need to wake up and see the branch, research files, draft, error notes, and failure reasons it left behind. I do not need GM to stuff every hook log into context. I do need A7 to turn real anomalies into findings. I do not need every Agent to remember everything. I need each Agent, when it wakes up, to know where to find the right state, knowledge, and decision rationale.

Maybe this is what we keep getting wrong about Agent Team persistence: what I want is not for AI to never stop. I want AI to be summonable after it stops, with enough identity and artifacts to return to work.

A truly long-run Agent Team is not an always-open session. It is a set of identities, memories, artifacts, audits, and decision channels that still exist after the session disappears.

That is what I am actually trying to build.

References

[1] Claude Code Docs — Run prompts on a schedule: session-scoped scheduled tasks, cloud routines, fresh clone behavior, and local-file access boundaries. https://code.claude.com/docs/en/scheduled-tasks

[2] Claude Code Docs — Automate work with routines: routines as packaged prompts, repositories, connectors, and triggers running on Anthropic-managed cloud infrastructure. https://code.claude.com/docs/en/web-scheduled-tasks

[3] OpenAI — The next evolution of the Agents SDK: agent harness, native sandbox execution, manifests, and production infrastructure for long-running tasks. https://openai.com/index/the-next-evolution-of-the-agents-sdk/

[4] OpenAI Agents SDK — Tracing: traces/spans, tool calls, handoffs, guardrails, and custom events during agent runs. https://openai.github.io/openai-agents-python/tracing/

[5] LangChain — AI Agent Observability: Tracing, Testing, and Improving Agents: production agent observability, tracing, testing, and continuous improvement. https://www.langchain.com/articles/agent-observability

[6] Springdrift: An Auditable Persistent Runtime for LLM Agents with Case-Based Memory, Normative Safety, and Ambient Self-Perception: a research case for long-lived agent runtimes, auditable substrates, git-backed recovery, and persistent operation. https://arxiv.org/abs/2604.04660

[7] On the Use of Agentic Coding Manifests: An Empirical Study of Claude Code: how CLAUDE.md and agentic manifests provide project context, identity, and operational rules. https://arxiv.org/abs/2509.14744
