A few days ago I was chatting with a friend who has no engineering background. He was buzzing with excitement telling me about an automation tool he’d built with AI — end to end, from requirements to deployment, all done with AI. “I know how to build things with AI now,” he said. His eyes were lit up when he said it. And my first instinct was: are you sure about that?

Then I immediately realized that this instinct had walked straight into something we’ve always tried hard to avoid: “professional arrogance.”

Professional Arrogance: When Experience Becomes a Framework

There’s something unique about being an engineer. We’ve spent years understanding things most people never need to understand — data structures, system architecture, edge cases, error handling. You don’t get this from reading an article, or having AI summarize something for you. It comes from countless nights debugging until 3 AM, from being woken up by production incidents, from slowly building up intuition over time. That intuition makes us very good at sensing when something “isn’t quite right.” But it also makes us quick to reject things that “fall outside our own framework.”

This isn’t new. Long before AI came along, we already had the term “professional arrogance.” Engineers thought PMs didn’t understand the tech, so their requirements were impractical. Designers didn’t understand performance, so their designs were too idealistic. Executives didn’t understand architecture, so their decisions were too hasty. Sometimes those judgments were right, but more often they cost us the chance to see problems from a different angle.

In the AI era, this arrogance has taken on a new form: engineers don’t trust AI.

One survey found that 96% of engineers don’t fully trust AI output, but only 48% actually verify it [1]. That gap is interesting. Distrusting the output without bothering to verify it suggests that many engineers’ attitude is “I know you’re unreliable, so I’ll just skip you or cherry-pick,” rather than “let me use it and actually check whether you’re right.”

METR (an organization focused on evaluating AI capabilities) published a study in July 2025 [2] where they recruited experienced open-source developers and had them work on real development tasks with and without AI assistance. The results surprised a lot of people: when these developers used AI tools, their tasks took 19% longer to complete.

19%. Not faster. Slower.

The researchers’ explanation: these experienced developers spent so much time reviewing, revising, and second-guessing AI suggestions that it completely negated the efficiency gains. Their expertise let them spot problems in AI recommendations (which is good), but it also made them spend time re-verifying things even when the AI had already gotten it right (which is arrogance — though I know engineers are thinking: how else would I know it was right if I don’t verify?).

A joint study by BCG and Harvard [3] found a similar pattern: in creative tasks, consultants using GPT-4 performed 40% better than those who didn’t. But experts were slower to adopt AI suggestions, and more likely to stick with their own judgment even when the AI was correct.

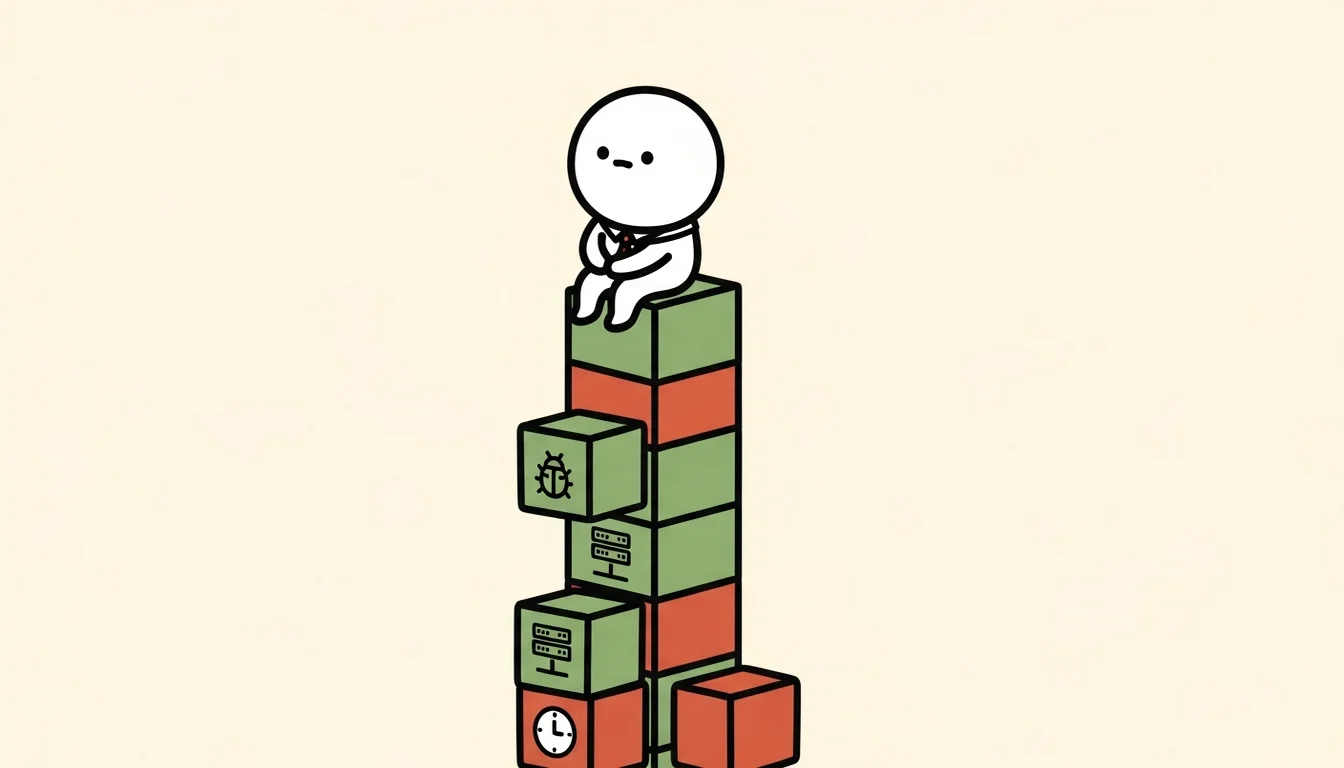

There’s a subtle point here. In earlier articles I’ve written about how Agents make assumptions in places you can’t see, how Agents miss details when they read by summarizing, and how the “Agent already knows” trap leads them to omit critical information. These are real problems. They tell us that engineering wariness has a legitimate foundation.

So the problem isn’t wariness itself — it’s when wariness turns into a default refusal.

I’ve made this mistake myself. When using an AI Agent for architecture decisions, I’d habitually form a solution, framework, or theory in my head first, then ask the AI — but in a way that was really just validating and confirming what I’d already decided, not genuinely opening things up for it to survey all the possibilities. It’s like a doctor who’s already settled on a diagnosis before the appointment, and runs tests only as a formality.

So sometimes I wonder: what if I’d let the AI freely search, research, and propose approaches I hadn’t considered? The answer is: it would have been different. In later projects, I tried letting AI explore my ideas and proposals without imposing my preconceptions, and it did surface interesting approaches, though they still needed validation and refinement. Because AI isn’t constrained by my framework of experience, it found paths I’d overlooked precisely because I was too familiar with certain domains. That opened up more possibilities than I would have found on my own.

Derek Neighbors used a pointed phrase in a 2025 article [4]: “skill issue.” He argued that engineers who refuse to use AI aren’t “too senior” — they’re missing a new skill: the skill of collaborating with AI. That skill requires practice just like writing code does, and refusing to practice is itself a form of arrogance.

But I want to offer an important counterbalance here, and some reassurance to engineers: as of now, the expert-plus-AI combination is still the best-performing one.

Kasparov’s “Centaur” concept from chess still holds. Expert plus AI beats pure AI, and beats novice plus AI. Some studies report that radiologists working with AI achieve diagnostic accuracy that exceeds either alone. So engineering expertise is not the problem in the AI era. The problem is when that expertise becomes a wall that blocks out AI’s possibilities.

Maybe the hardest part isn’t admitting that AI is faster than you at certain things. It’s admitting that you may never have given it the chance to show you.

In the next article, let’s look at the other side of that wall: what happens when non-professionals mistake AI’s capabilities for their own.

References

[1] “96% Engineers Don’t Fully Trust AI Output, Yet Only 48% Verify It” — Engineering Leadership Newsletter, 2025. https://newsletter.eng-leadership.com/p/96-engineers-dont-fully-trust-ai

[2] “Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity” — METR, 2025/07. https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/

[3] “Navigating the Jagged Technological Frontier” — BCG x Harvard Business School, 2023. https://www.hbs.edu/ris/Publication%20Files/24-013_d9b45b68-9e74-42d6-a1c6-c72fb70c7571.pdf

[4] “The Skill Issue: Why Engineers Who Dismiss AI Won’t Make It” — Derek Neighbors, 2025/06. https://www.derekneighbors.com/2025/06/11/the-skill-issue-why-engineers-who-dismiss-ai-wont-make-it
