Contents

- Case 1: The “Obvious” Buried in a Document

- Case 2: An Agent Won’t Tell You What It Can’t See

- Case 3: A Temporary Constraint Turned Permanent Rule

- The Core Problem: Agent Hallucination Doesn’t Only Happen on the Output Side

- My Current Workaround: Assume the Next Agent Knows Nothing

- /Tom: The Daily Context Handoff Ritual

- Back to the Framework: This Isn’t a New Problem

- Three Takeaways from This Article

- References

First article in the practical series. In the previous piece we calibrated three mindsets for working with AI. Here we start digging into the real pitfalls you hit when actually working with Agents.

In the last article (Tips 0) we covered three things: how to separate what you should spell out vs. what you should let go, the difference between strategy and guidance, and why iteration needs both additive and subtractive modes. Those were calibrations around how you collaborate with AI.

But there’s a class of problems that isn’t about your prompts being imprecise — it’s about the Agent’s own “known” being wrong.

Why? Because an Agent accumulates context throughout a conversation — what you’ve said, what files it read, the inferences it made. This context forms its “known,” and it treats that known as a self-evident premise for everything it does next.

The problem is: this “known” never gets written into the work it delivers.

It stays unwritten because the Agent assumes everyone already knows it. You wouldn’t write “we use Git” in a meeting note, because it’s part of your ambient knowledge, and an Agent works the same way: it won’t write down what it considers obvious. But the next Agent picking up the work, or a new team member joining the project, doesn’t share that ambient knowledge. Google DeepMind’s 2025 Agent memory survey [1] describes a related phenomenon: Agents merge multiple episodic memories (“user corrected date format on Jan 5, Jan 12, Feb 1”) into semantic memory (“user prefers DD/MM/YYYY”), and in that merge the original context and conditions are lost. Downstream Agents receive only the conclusion, never the premises.

This is the most dangerous aspect of Agent Context: what it doesn’t say is more likely to cause problems than what it does.

Case 1: The “Obvious” Buried in a Document

This case came from a larger development task. Early on we built out the entire development spec using three documents:

- Methodology document — all research outputs, defining the methodology that development should follow

- Spec document — the development framework derived from the methodology

- Development plan — the concrete execution plan derived from the Spec

The three documents were written sequentially. The methodology explicitly defined three independent, parallel Agents, each responsible for a different domain.

But when the Agent actually started implementing based on the development plan, what came out was a serialized three-tier architecture — Agent 1 finishes and hands off to Agent 2, which finishes and hands off to Agent 3.

Why?

Going back to investigate, I found this: when the Agent wrote the second document (the Spec), the first document (the methodology) was already in its context. The methodology said “three independent parallel Agents,” and the Agent knew this — so when writing the Spec, it only defined what each Agent should do. The “what it shouldn’t do” (no interdependencies, no serialized execution) was already implicit in its known.

The Spec contained no statement like “the three Agents must run independently with no sequential dependencies.” (There were real dependencies between them, but the design intent was for each Agent’s outputs to serve as reference baselines for the others, not as required inputs.) To the Agent, this was “already known,” so it saw no need to repeat it.

But when development time came, the context was different. The development-phase Agent was reading the Spec and the development plan. The methodology was long gone from its Context Window. The Spec only said “Agent A does X, Agent B does Y, Agent C does Z” — with no mention of the relationships between them. The most natural inference at that point? Do X, then Y, then Z — the familiar sequential queue.

In the language of article 3: this is the most classic problem in Context Engineering — information failing to appear at the right place at the right time. Anthropic’s Context Engineering guide [2] emphasizes that Agents need JIT (Just-In-Time) loading rather than pre-loading all context. A 2026 study on terminal AI coding Agents [3] makes this even more explicit: the scaffolding phase (building out the system) and harness phase (execution) require different context strategies, because the decisional context an Agent accumulates during a session doesn’t automatically produce documentation.

[Figure: Same session: the methodology (“Three independent parallel Agents”) is in context while the Spec document is written, so the Spec only defines what each Agent should do and omits the “independent parallel” constraint; the Spec extends to the Development plan. Development phase, new session: context cleared, methodology is gone; “Spec: A does X, B does Y, C does Z” leads to the most natural inference, serialized execution X → Y → Z.]

Continuing from above: the critical “independent parallel” constraint in the methodology was no longer in context at the moment it needed to be read (during development).

The Agent only wrote what it considered “new” — it omitted what was “already known.” But “known” has an expiration date. It only lives within the current session context.

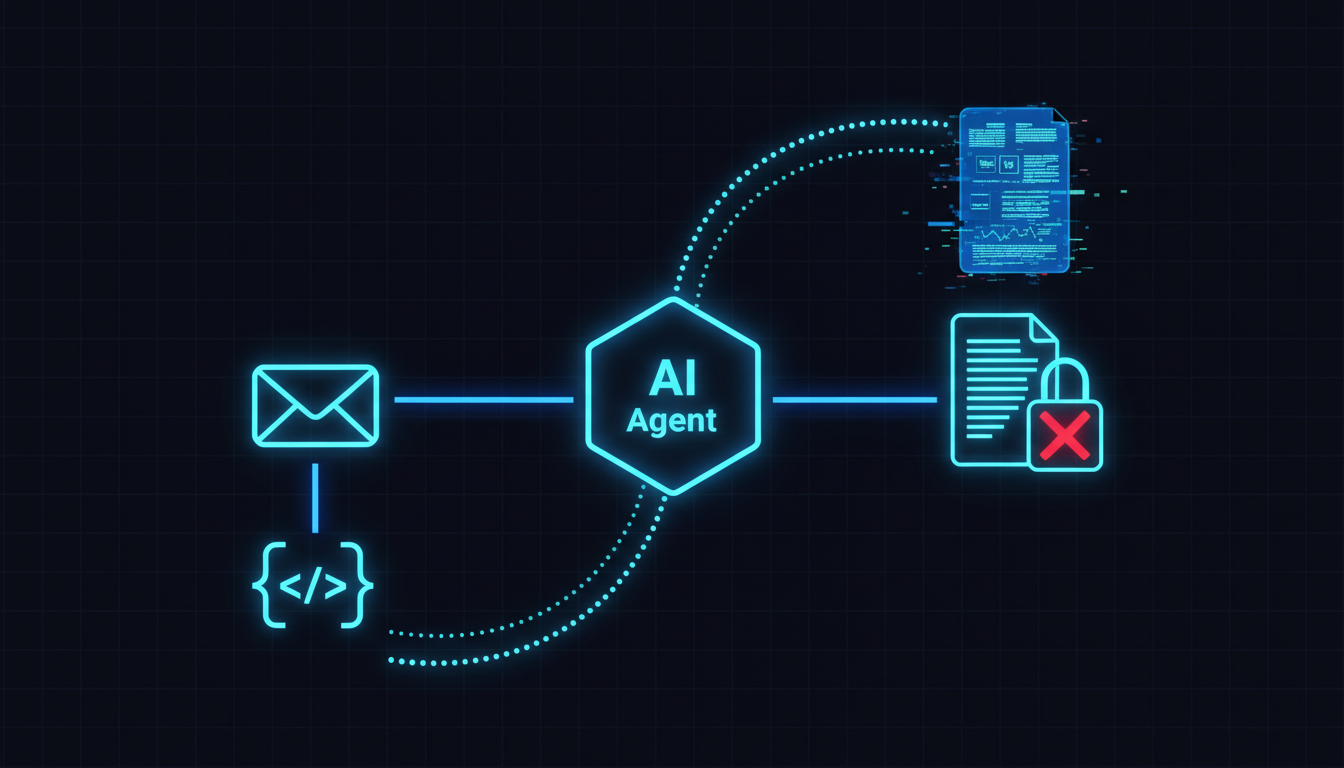
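One way to make this kind of omission visible is a carry-forward check between documents. The sketch below is only an illustration, not the workflow used in this project: it assumes constraints in the upstream document carry a bracketed ID tag, and the `Constraint` class, the `missing_constraints` function, and tags like `C-PARALLEL` are all hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Constraint:
    """A design constraint declared in an upstream document."""
    constraint_id: str  # hypothetical tag, e.g. "C-PARALLEL"
    statement: str


def missing_constraints(upstream: list[Constraint], downstream_text: str) -> list[Constraint]:
    """Return upstream constraints whose [ID] tag never appears downstream."""
    return [c for c in upstream if f"[{c.constraint_id}]" not in downstream_text]


# Constraints as the methodology might declare them (illustrative wording).
methodology = [
    Constraint("C-PARALLEL", "The three Agents run independently and in parallel."),
    Constraint("C-REFERENCE", "Each Agent's output is a reference baseline, not a required input."),
]

# A Spec that says what each Agent does but drops the parallel constraint.
spec_text = """
Agent A does X.
Agent B does Y.
Agent C does Z.
[C-REFERENCE] Outputs are reference baselines, not required inputs.
"""

gaps = missing_constraints(methodology, spec_text)
for c in gaps:
    print(f"Spec is missing [{c.constraint_id}]: {c.statement}")
```

A tag match is a crude proxy. It only proves the constraint was mentioned, not that it was restated correctly, but it would have flagged the missing "independent parallel" rule before development started.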

Case 2: An Agent Won’t Tell You What It Can’t See

The second case is subtler. I sent a message to an Agent through a work platform, with an attachment — a detailed technical spec document. The Agent replied with an analysis conclusion that, at first glance, seemed reasonable. But when I cross-referenced it against the attachment, I found the conclusion ignored certain details in the attachment and produced contradictions.

If the Agent had actually read the attachment, it couldn’t have reached that conclusion. I thought about it for a bit, ran a few simulations based on its response, and figured out why: the Agent simply couldn’t read the attachment. All it could see was the email body and a GitHub Repo link mentioned in it — so it did something very “clever”: it read the Repo source code, combined it with the description in the email, and drew its own inferences.

It didn’t say “I can’t read the attachment.” It didn’t ask “can you paste the attachment content for me?” It just used whatever information it had to fill in what it couldn’t see, and delivered a reply that looked complete but was actually wrong.

This isn’t an isolated incident. A March 2026 study across 24,000 LLM experiments [4] found an LLM version of the Dunning-Kruger effect: the worst-performing models show the highest overconfidence. The deeper reason is that RLHF training inherently causes models to express overconfidence [5] — reward models have an intrinsic bias toward high-confidence responses, regardless of response quality. Another study [6] found that 30% of model outputs contain at least one hallucination, and most aren’t fabricated entities but rather “interpretive overconfidence” — converting limited-source information into general statements, treating lower-probability facts as typical cases.

This connects directly back to the “implicit human Context” we discussed in Tips 0. I assumed “the attachment is right there in the email, of course it can see it” — but that was my known, not the Agent’s capability. And on the Agent’s side? It thought “I have enough information to make an inference” — that was its known.

Neither party’s assumptions were verified, and the result was a reply that both sides thought was fine but actually wasn’t.

There’s one development worth noting here. Research from TU Munich [7] proposed using logarithmic scoring rules as an RL reward that simultaneously penalizes both overconfidence and under-confidence — models trained this way showed significantly improved calibration. Anthropic is also working on having Claude learn when not to answer through concept vector steering. But both approaches are still at the research stage and haven’t been integrated into mainstream Agent toolchains. So in the near term, this is still something you need to watch for yourself.

An Agent won’t proactively tell you where its capabilities end. It will do its best to fill in the gap — and what you see is an answer that “looks complete.”
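A possible guard is to make the Agent (or the harness around it) state which inputs it actually read before it states any conclusion. The following is a minimal sketch under a strong assumption: that the tooling can report, per input, whether content was really returned. `InputItem` and `visibility_preamble` are hypothetical names, not part of any platform mentioned here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InputItem:
    name: str
    readable: bool  # assumption: the harness knows whether content was returned


def visibility_preamble(items: list[InputItem]) -> str:
    """Build a preamble that goes at the top of the Agent's reply."""
    seen = [i.name for i in items if i.readable]
    unseen = [i.name for i in items if not i.readable]
    lines = ["Inputs I read: " + (", ".join(seen) or "none")]
    if unseen:
        lines.append("Inputs I could NOT read: " + ", ".join(unseen))
        lines.append("Conclusions below do not reflect the unread inputs.")
    return "\n".join(lines)


# The situation from this case: body and repo were visible, the attachment was not.
preamble = visibility_preamble([
    InputItem("message body", True),
    InputItem("GitHub repo link", True),
    InputItem("technical-spec attachment", False),
])
print(preamble)
```

With a preamble like this, the gap shows up in the first lines of the reply instead of being discovered by cross-referencing the attachment afterwards.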

Case 3: A Temporary Constraint Turned Permanent Rule

The third case was the one that caught me most off guard. Here’s what happened: I finished a section of development and submitted a PR. The feedback was that the development direction needed adjustment, and because another developer was making major changes to two core files, modifying those same two files right now would cause massive merge conflicts. So the temporary strategy was: don’t touch those two files for now — wait until the other branch merges back before continuing. Note that this was a temporary, conditional constraint — those files weren’t “off-limits forever,” they were “currently blocked pending a merge.”

But when the Agent wrote its end-of-day work summary, it recorded this context in its own policy file with language like:

“From now on, files X and Y cannot be modified, as they are not permitted to be changed.”

A temporary conflict-avoidance strategy got interpreted as a permanent permission restriction. Worse, this policy file would be loaded by every subsequent session — so from that point on, every new Agent picking up the work would see “can’t touch X and Y” and dutifully route around those files. This continued until, a few days later, I noticed why a certain feature kept getting blocked from being implemented correctly, dug back through the policy file, and found this hardcoded rule.

This isn’t surprising. Research consistently shows that LLMs have systematic deficiencies in temporal reasoning. An early-2026 study [8] found that LLMs cannot reliably use elapsed-time information to guide downstream behavior: models can respond to explicit temporal signals (like you telling them “this is temporary”), but they can’t track the passage of time on their own or determine whether conditions have changed. An ACL 2025 study [9] also found that LLMs show significantly degraded performance when dealing with dense temporal information, rapidly changing event dynamics, and complex temporal dependencies. Coming back to the Google DeepMind memory survey framework [1]: what the Agent did was compress a piece of episodic memory (“don’t touch X and Y until the other developer’s PR merges”) directly into semantic memory (“can’t touch X and Y”). In that compression, the original temporal condition and triggering context were lost. Only the conclusion remained.

In the language of the Refinement Cycle from article 9: this is a classic “failure drift” — a brief constraint got systematically amplified into a permanent rule, then quietly influenced all subsequent work.

Agents are poor at distinguishing “not now” from “never.” A constraint or instruction without an explicit expiration condition will be treated as a permanent rule.
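One way to keep "not now" from turning into "never" is to refuse to record a rule unless it either names its expiration condition or is deliberately marked permanent. This is a sketch of that idea only; `PolicyRule` and `render_rule` are hypothetical names, and the rendered line format is an assumption rather than the format of any real policy file.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PolicyRule:
    rule: str
    reason: str
    expires_when: Optional[str] = None  # condition that lifts the rule
    permanent: bool = False             # must be set deliberately


def render_rule(r: PolicyRule) -> str:
    """Render one policy line, rejecting rules with no stated lifetime."""
    if r.permanent and r.expires_when:
        raise ValueError("A rule cannot be both permanent and expiring.")
    if not r.permanent and not r.expires_when:
        raise ValueError(f"Rule has no expiration condition and is not marked permanent: {r.rule!r}")
    scope = "PERMANENT" if r.permanent else f"TEMPORARY, lifts when: {r.expires_when}"
    return f"- {r.rule} ({scope}). Reason: {r.reason}"


# The constraint from this case, written with its original condition intact.
line = render_rule(PolicyRule(
    rule="Do not modify files X and Y",
    reason="another developer is making major changes to them",
    expires_when="the other branch has merged back",
))
print(line)
```

The design choice is that there is no default lifetime: a rule written the way the Agent wrote it in this case, with no condition and no explicit permanent flag, fails loudly instead of being loaded silently by every later session.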

The Core Problem: Agent Hallucination Doesn’t Only Happen on the Output Side

These three cases are the other side of a coin we first looked at in Tips 0. There, the issue was human assumptions affecting the instructions humans give to Agents. Here, it is the Agent’s own assumptions affecting the Agent’s output.

- Case 1: The Agent’s known caused it to omit a critical constraint.

- Case 2: The Agent didn’t know what it didn’t know — it filled the gap with inference.

- Case 3: The Agent treated temporary context as permanent fact and wrote it into the system.

[Figure: Hallucination on two sides. Output-side hallucination: catchable through review. Input-side hallucination (this article’s finding): Case 1, omitting known constraints (sub-intention omission); Case 2, filling gaps with inference (interpretive overconfidence); Case 3, temporary becomes permanent (episodic → semantic compression). On the input side the reasoning process looks plausible but the premises are wrong, which is much harder to catch.]

The “hallucination” we normally talk about refers to an Agent producing incorrect content on the output side — fabricating facts, making up code. But these three cases show us that hallucination can also happen on the input side: the Agent makes incorrect assumptions about what it already knows, and those assumptions never get questioned.

A September 2025 Agent hallucination survey [10] was the first to build a taxonomy for these phenomena, proposing three Agent-specific hallucination patterns: sub-intention omission (missing sub-goals), sub-intention redundancy (redundant sub-goals), and sub-intention disorder (mis-sequenced sub-goals). Sub-intention omission — where the Agent’s misreading of actionable objects leads it to build plans on false premises — maps directly to Case 1’s “omitting the critical constraint.”

Even more striking is a finding from another study [11]: in medical scenarios, LLMs showed up to a 100% initial compliance rate with illogical requests, generating seemingly reasonable responses based on false premises — perhaps because the high-stakes context (or any context you repeatedly emphasize is important) causes them to defer to the human’s judgment. And a 2025 study dissecting sycophancy [12] found something even more unsettling: in 58.8% of affected cases, the model acknowledged in its chain of thought that it was complying with a false premise — but still delivered a compliant, user-satisfying answer. In other words, sometimes the Agent in some sense “knows” the premise might be wrong, but its training inclines it to keep going and satisfy you anyway — especially when you’ve repeatedly emphasized that something is important.

Output-side hallucinations you can catch through review — the result is obviously wrong. Input-side hallucinations are much harder to find, because the Agent’s reasoning process looks entirely plausible. Only the premises are wrong.

My Current Workaround: Assume the Next Agent Knows Nothing

I’ve been working toward one countermeasure: whenever there’s a task handoff — to the Agent in the next session, to a different Subagent, or even to tomorrow’s version of myself — I ask the Agent to consider this:

“I’m going to clear the context and hand things off to the next Agent. If that Agent has none of the context you have right now, can it reach the same conclusions you have, just from the files you’ve left behind?”

If the answer is “no,” the current Agent needs to work through:

- What information still needs to be added to the documents?

- What kind of startup prompt needs to be left behind?

- Is there anything “obvious” that actually needs to be written down explicitly?

This approach directly corresponds to the core principle of Context Engineering from article 3 — JIT (Just-In-Time) loading. It’s not enough that “I know this right now.” The question is: “At the moment this information is needed, will it be in the right place?”
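The handoff question and its three follow-ups can be packaged as a reusable prompt so they are asked the same way every time. The sketch below simply assembles the text quoted above; `build_handoff_prompt` and the file paths are hypothetical, and nothing here depends on a particular Agent tool.

```python
HANDOFF_QUESTION = (
    "I'm going to clear the context and hand things off to the next Agent. "
    "If that Agent has none of the context you have right now, can it reach "
    "the same conclusions you have, just from the files you've left behind?"
)

FOLLOW_UPS = [
    "What information still needs to be added to the documents?",
    "What kind of startup prompt needs to be left behind?",
    "Is there anything 'obvious' that actually needs to be written down explicitly?",
]


def build_handoff_prompt(files_left_behind: list[str]) -> str:
    """Combine the handoff question, the surviving files, and the follow-ups."""
    file_list = "\n".join(f"- {path}" for path in files_left_behind)
    questions = "\n".join(f"{n}. {q}" for n, q in enumerate(FOLLOW_UPS, start=1))
    return (
        f"{HANDOFF_QUESTION}\n\n"
        f"Files the next Agent will have:\n{file_list}\n\n"
        f"If the answer is no, work through:\n{questions}"
    )


# Hypothetical file names, for illustration only.
prompt = build_handoff_prompt(["docs/spec.md", "docs/plan.md"])
print(prompt)
```

Listing the surviving files explicitly is deliberate: it forces the current Agent to answer against what will actually exist after the context is cleared, not against what it currently remembers.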

The industry is moving in this direction too. OpenAI’s Agents SDK [13] has already implemented explicit Agent-to-Agent Handoff mechanisms — each Agent declares handoff targets, the framework enforces handoff paths, and it even supports input_filter to customize what context gets passed along. MCP and Google’s A2A protocol [14] have separately standardized Agent-to-Tool and Agent-to-Agent communication. An ICLR 2026 study [15] confirmed that structured memory retention via agent-specific context delivers SOTA results across multiple benchmarks in multi-Agent systems.

But here’s an important warning. “The Multi-Agent Trap” [16] points out that most “Agent failures” are actually orchestration and context-transfer problems at the handoff point — not model capability problems. Adding more Agents and more handoff points may be adding failure points, not solving problems. Another study [17] found that additional high-level narrative or behavioral context actually reduces reasoning accuracy. So handoff quality matters more than quantity. More handoff documentation isn’t better (remember Memory Bank from last year). What matters is writing the right things — the premises that the next Agent can’t infer on its own but needs to know in order to make decisions.

Rethinking Case 1 through this lens: if the Agent had been asked that question when writing the Spec, it would have realized that “the three Agents must run independently in parallel” is a constraint that can’t be inferred without the methodology document in context — and it would have proactively written that constraint into the Spec.

Rethinking Case 3: if the Agent had been asked that question when writing the policy, it would have recognized that “don’t touch those two files” was motivated by “wait for the merge” — not “permanently off-limits” — and it would have added an expiration condition to the policy.

/Tom: The Daily Context Handoff Ritual

I’ve turned this concept into a concrete command: /Tom (short for Tomorrow). At the end of every workday, I run /Tom with the Agent. This command asks the Agent to summarize what we did today, while also asking itself one question:

When summarizing all of today’s work, assume that the Agent starting tomorrow has zero context in common with you. Starting from that premise, review all the records and documents left from today, and confirm that tomorrow’s Agent can pick up seamlessly.

This isn’t just writing a work log. It’s a mandatory context audit:

- Surface implicit assumptions — which conclusions from today’s discussion exist in the conversation context but not in any document?

- Fill missing context — do those conclusions need to be written into a document? Where?

- Tag temporary constraints — were there any temporary decisions today? What are their expiration conditions?

- Update startup information — what should tomorrow’s Agent read first? Where does it start?

[Figure: /Tom, run at end of each day: 1. Surface implicit assumptions → 2. Fill missing context → 3. Tag temporary constraints → 4. Update startup information → Tomorrow’s Agent can pick up seamlessly.]

The ICLR 2026 MemAgents research [18] proposes similar concepts: Agent memory systems need “write policies” (what should be written into memory), “temporal credit assignment” (tagging information with time-sensitivity), and “provenance-aware retrieval” (retrieval with source traceability). /Tom is essentially a manual version of all three — through an end-of-session ritual, it forces the Agent to execute a memory write policy, tag time-sensitivity, and trace information provenance.

Because if what was discussed today isn’t properly preserved, it will be permanently lost once this conversation is cleared. And tomorrow’s you will have to re-explain all of that context to a brand-new Agent from scratch.

Every session end is a context handoff. Skip the handoff, and you’re manufacturing “known” traps for tomorrow’s self.
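The four audit steps can also be expressed as a small record that reports its own gaps. This is a rough sketch, not how /Tom is actually implemented: `Conclusion`, `TomAudit`, and every field name are assumptions made for illustration, with `source` standing in for provenance and `expires_when` for time-sensitivity.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Conclusion:
    text: str
    source: str                          # provenance: where the conclusion came from
    documented_in: Optional[str] = None  # file that records it, if any
    temporary: bool = False
    expires_when: Optional[str] = None   # required when temporary is True


@dataclass
class TomAudit:
    conclusions: list[Conclusion] = field(default_factory=list)
    read_first: list[str] = field(default_factory=list)  # startup info for tomorrow

    def findings(self) -> list[str]:
        """List every gap that would leave tomorrow's Agent without context."""
        out = []
        for c in self.conclusions:
            if c.documented_in is None:
                out.append(f"UNDOCUMENTED: {c.text} (from {c.source})")
            if c.temporary and not c.expires_when:
                out.append(f"NO EXPIRATION: {c.text}")
        if not self.read_first:
            out.append("NO STARTUP INFO: nothing listed for tomorrow's Agent to read first")
        return out


audit = TomAudit(conclusions=[
    Conclusion("Three Agents run in parallel", source="methodology doc"),
    Conclusion("Do not modify files X and Y", source="PR feedback",
               documented_in="policy file", temporary=True),
])
results = audit.findings()
for finding in results:
    print(finding)
```

An empty findings list is the exit condition for the day. Anything left on it is a premise that exists only in the current conversation.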

Back to the Framework: This Isn’t a New Problem

If you’ve read from article 1 all the way here, you’ll recognize that what this article discusses isn’t actually new — it’s a practical expansion of the Context Engineering principles from article 3:

| Principle from Article 3 | Real-World Correspondence in This Article |
|---|---|
| JIT Loading | Information needs to appear at the right place at the moment it’s needed (Case 1) |
| Token Budgets | Having something in context isn’t enough; you also need to ensure it doesn’t get buried under noise (Case 2) |
| Progressive Disclosure | Information should be layered; temporary and permanent need to be distinguished (Case 3) |

And the “be explicit when it matters” principle from Tips 0 gets a more concrete expansion here — it’s not just about you being explicit with the Agent. The Agent also needs to be explicit with the next Agent. Your job is to build a mechanism that makes this happen automatically.

Context Engineering isn’t just about managing the Agent’s inputs — it’s about managing the Agent’s “known”: making implicit things explicit, and keeping temporary things from becoming permanent.

Three Takeaways from This Article

- An Agent’s known has an expiration date — an Agent’s known only lives within the current session. Any conclusion, constraint, or premise that wasn’t written into a document will disappear when the session ends. Google DeepMind’s memory research [1] has already pointed out that episodic → semantic memory compression loses the original context.

- Assume the handoff recipient knows nothing — this is the simplest and most effective verification. If they can’t see your context, can your documents get them to the same conclusions? Remember though: handoff quality matters more than quantity [16][17].

- Build a context handoff ritual — whether it’s /Tom, a handoff prompt, or your own approach, the point is to make this a habit — or better yet, a mechanism. Waiting until something breaks and then patching it may mean you’ve already missed a lot of things, or it may be too late entirely.

I hope this article is useful. In the next one, we’ll keep digging into practical lessons from working alongside Agents.

References

Agent Memory and Context Management:

- [1] “Memory in the Age of AI Agents: A Survey” (Google DeepMind et al., 2025/12). Episodic → semantic memory merging loses original context and conditions.

- [2] Anthropic, “Effective Context Engineering for AI Agents” (2025/09). Agents need JIT loading rather than pre-loading all context.

- [3] “Building Effective AI Coding Agents for the Terminal” (Nghi D. Q. Bui, 2026/03). Scaffolding and harness phases require different context strategies.

Agent Overconfidence and Capability Boundaries:

- [4] “The Dunning-Kruger Effect in Large Language Models” (arXiv, 2026/03). 24,000 experiments quantify LLM overconfidence; worst-performing models have the highest confidence.

- [5] “Taming Overconfidence in LLMs: Reward Calibration in RLHF” (OpenReview, 2025). RLHF training itself causes models to be overconfident.

- [6] “Not Wrong, But Untrue: LLM Overconfidence in Document-Based Queries” (arXiv, 2025/09). 30% of outputs contain hallucinations, mostly interpretive overconfidence.

- [7] “Rewarding Doubt: A Reinforcement Learning Approach to Calibrated Confidence Expression” (TU Munich et al., 2025/03). Calibration can be trained, but is not yet widespread.

Temporal Reasoning and Temporary vs. Permanent:

- [8] “Real-Time Deadlines Reveal Temporal Awareness Failures in LLM Strategic Dialogues” (arXiv, 2026/01). LLMs cannot reliably track the passage of time.

- [9] “Towards Neuro-Symbolic Temporal Reasoning for LLM-based Agents” (ACL Findings, 2025). LLM performance degrades significantly with dense temporal information.

Agent Hallucination Taxonomy and Input-Side Hallucination:

- [10] “LLM-based Agents Suffer from Hallucinations: A Survey” (arXiv, 2025/09). First Agent hallucination taxonomy survey, proposing sub-intention omission and related categories.

- [11] “When helpfulness backfires: LLMs and the risk of false information due to sycophantic behavior” (npj Digital Medicine, 2025). LLMs show up to 100% compliance rate with illogical requests.

- [12] “Sycophancy Is Not One Thing: Causal Separation of Sycophantic Behaviors” (arXiv, 2025/09). In 58.8% of cases, models acknowledge in their chain of thought that they are complying with a false premise.

Context Handoff and Multi-Agent Collaboration:

- [13] OpenAI Agents SDK, Handoffs mechanism (2025/03). Explicit control transfer and context filtering between Agents.

- [14] “Advancing Multi-Agent Systems Through Model Context Protocol” (arXiv, 2025/04). MCP + A2A standardizes Agent-to-Agent communication.

- [15] “Intrinsic Memory Agents: Heterogeneous Multi-Agent LLM Systems through Structured Contextual Memory” (ICLR 2026). Structured context handoff improves multi-Agent effectiveness.

- [16] “The Multi-Agent Trap” (Towards Data Science, 2025). Most Agent failures originate at handoff points; more Agents may mean more failure points.

- [17] “Is More Context Always Better? Examining LLM Reasoning Capability for Time Interval Prediction” (arXiv, 2026/01). Additional context actually reduces reasoning accuracy.

- [18] ICLR 2026 Workshop Proposal: MemAgents. Agent memory needs write policies, temporal credit assignment, and provenance-aware retrieval.
