In the previous article we talked about an Agent’s timezone blind spot [3] — the Agent let a 25-hour error compound for several days without noticing it at all. At the end of that piece, I said: if you really want an Agent to operate autonomously, what you need isn’t an Agent that nervously asks you about everything — it’s a methodology.

But “methodology” has become one of those overused words, almost like “framework.” Attach it to anything and it suddenly sounds serious. In most cases, though, when you open the file, it is only an SOP.

This article is not about how to write SOPs. It is about how to build a real methodology, which is a complete growth chain: from theory to methodology, from methodology to Playbook, from Playbook to execution plan. Each layer down is a transition from “general” to “specific,” from “possible” to “certain.” The lower the layer, the less flexibility it has, but the more executable it becomes.

This chain matters because most people, when working with an Agent, habitually jump straight to the bottom layer — handing the Agent a pile of instructions and saying “do it!” The Agent follows through, but the moment it hits a situation the instructions don’t cover, things fall apart. It doesn’t know “why it should do this,” so it easily makes the wrong judgment in a new situation. Or more accurately: it guesses wrong.

So how do you build this chain? Let’s walk through a real case and build it step by step.

First, What’s the Actual Difference Between Theory and Methodology?

Before the case study, let’s clarify a few terms and concepts that are constantly mixed up.

Theory answers “why.” It’s a systematic set of statements that explain phenomena [1], organizing observed relationships — the interactions between things — through concepts and logic. It tells you why the world works this way. But theory doesn’t tell you how to solve a problem with your hands. You may know the theory of gravity, but that still doesn’t tell you how to build a rocket that escapes Earth’s gravity.

Methodology answers “how to approach something” and “why choose this approach.” It provides principles and rationale for choosing tools, processes, and steps: a framework that guides action. It’s closer to the ground than theory, but it’s not the ground itself. Agile tells you how to organize software development and iteration cycles, but it won’t write your Sprint Backlog for you.

Method is the grounded, concrete steps and tools — the thing you can read and then follow when you actually start working.

So the hierarchy is:

Theory → Methodology → Method → Execution [2]

Theory explains why, methodology guides how, method is the concrete steps, and execution is doing the work. If you want to “ship right now,” what you need is method-level material, because methodology only helps you choose methods, and theory helps you understand why a method works.

Theory (explains why) → Methodology (guides which approach) → Method (concrete steps) → Execution (hands-on work)

Why bother distinguishing these three layers? Because most of what people give Agents tends to fall into either theory-level or method-level material. Theory-level material is too abstract, so the Agent doesn’t know how to execute. Method-level material is too specific, so the Agent gets lost when it hits an undefined new situation. What is rarely handed to the Agent is the middle layer: methodology. And methodology is exactly what enables an Agent to make reasonable judgments when facing situations it has never seen before.

A Monitoring System That Reported “All Clean”

A while back, I was assigned a task at work: build a monitoring system for an internal AI Agent platform.

This platform had many different Agents, each handling different responsibilities, all sharing the same Source of Truth (SOT). So we built a monitoring tool to check the platform’s security and governance compliance. After the first round of building and tuning, we had 22 checks running on a daily schedule, and every day the report came back: All Clean.

Sounds perfect? Remember what we’ve said before: when an Agent is quiet and everything looks perfect, that’s when you should worry most.

So I had a separate Agent audit it, guided by a methodology, and discovered that the platform had 51 known issues spanning 14 categories. Those 22 checks were catching only a small fraction: the actual detection rate was around 22%. In other words, nearly 80% of the problems were completely invisible to the monitoring system.

Worse still, some categories of issues (like cross-Agent data write conflicts, SQL injection risks, and transaction atomicity problems) didn’t even have corresponding checks in the monitoring system. It wasn’t that the checks were poorly written — there were simply no checks designed for that type of issue at all.

That’s the truth behind “All Clean”: your checks can only see what you designed them to see. If you didn’t design for it, you won’t see it, so the system says “no problem.”
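To make this concrete, here is a minimal sketch of the kind of audit that exposes the gap. The issue ids, category names, and check names are hypothetical toy data, not the platform's real inventory, and `audit_coverage` is an illustrative helper rather than the actual tool.

```python
from collections import defaultdict

# Hypothetical toy data: issue id -> category (not the real inventory).
KNOWN_ISSUES = {
    "ISS-1": "permissions",
    "ISS-2": "permissions",
    "ISS-3": "write_conflict",
    "ISS-4": "sql_injection",
    "ISS-5": "atomicity",
}

# Hypothetical checks: check name -> ids of the known issues it can detect.
CHECKS = {
    "check_role_scope": {"ISS-1"},
    "check_token_expiry": set(),  # runs daily, but matches no known issue
}


def audit_coverage(known_issues, checks):
    """Return (detection rate, categories in which no issue is detected)."""
    detected = set().union(*checks.values()) & set(known_issues)
    per_category = defaultdict(lambda: [0, 0])  # category -> [detected, total]
    for issue_id, category in known_issues.items():
        per_category[category][1] += 1
        if issue_id in detected:
            per_category[category][0] += 1
    blind = sorted(c for c, (hit, _total) in per_category.items() if hit == 0)
    return len(detected) / len(known_issues), blind


rate, blind_categories = audit_coverage(KNOWN_ISSUES, CHECKS)
print(f"detection rate: {rate:.0%}")
print("categories with no effective check:", blind_categories)
```

In this toy setup both checks would happily report "clean" every day, yet three of the four categories have no check that could ever fire. The report only becomes meaningful once it is compared against an independent inventory of issues.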

The Agent’s First Instinct: Hand You a Research Plan

When I handed this problem to the Agent and asked it to analyze and propose improvements, it did something AI often does. After a round of “thinking,” it gave me a four-step research plan: first inventory all known issues (Ground Truth), then run a full validation pass, then classify the detection gaps, and finally define improvement targets.

At the method level, this plan was fine — the steps were clear and executable. But there was a fundamental problem:

Your research plan is built on coverage rate. Its upper bound comes from “the total number of known issue categories.” Once that number is capped, the Agent stops growing.

This is the ceiling problem — the improvement framework itself is constrained by its own target. Whether you push coverage from 22% to 80% or even 100%, once all known issues are detected, the system stops. But in the real world, new problems don’t stop appearing just because the monitoring report says 100%.
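The ceiling can be shown with a few lines of arithmetic. This is only a sketch with made-up sets: `known` stands for the issue inventory the plan measures against, and `actual` for everything that really exists on the platform, which by definition nobody can enumerate in practice.

```python
def coverage(detected: set, universe: set) -> float:
    """Share of `universe` that the checks detect."""
    return len(detected & universe) / len(universe)


known = {"A", "B", "C", "D"}   # hypothetical: issues already in the inventory
actual = known | {"E", "F"}    # hypothetical: includes issues not yet discovered
detected = set(known)          # every known issue is now caught

reported = coverage(detected, known)    # what the improvement plan measures
true_rate = coverage(detected, actual)  # what it cannot measure

print(f"reported coverage: {reported:.0%}")
print(f"coverage against all real issues: {true_rate:.0%}")
```

Once `reported` reaches 100%, a plan that optimizes only this number has nothing left to improve, even though `actual` keeps growing.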

So I told the Agent:

We also need a corresponding mechanism — a separate loop to explore and discover new problems. This research plan should enable the entire system to achieve a self-growing closed loop, with continuous output, continuous correction, and continuous self-validation.

The Agent accepted this direction and started formalizing the approach, but before it continued, I told it: don’t rush into execution — first organize this thinking into a methodology.

“Your direction is good, but I want to first organize it into a closed-loop methodology. This methodology should be multi-layered and multi-dimensional. We’ll use it as the summary framework, then continue developing the closed loops from there.”

This “stop and organize” moment is the pivot from the method level back to the methodology level, because if we had charged straight into executing the Agent’s research plan, we’d probably hit the ceiling in three months and have to start all over again.

Three Interlocking Loops — The Architecture That Breaks Through the Ceiling

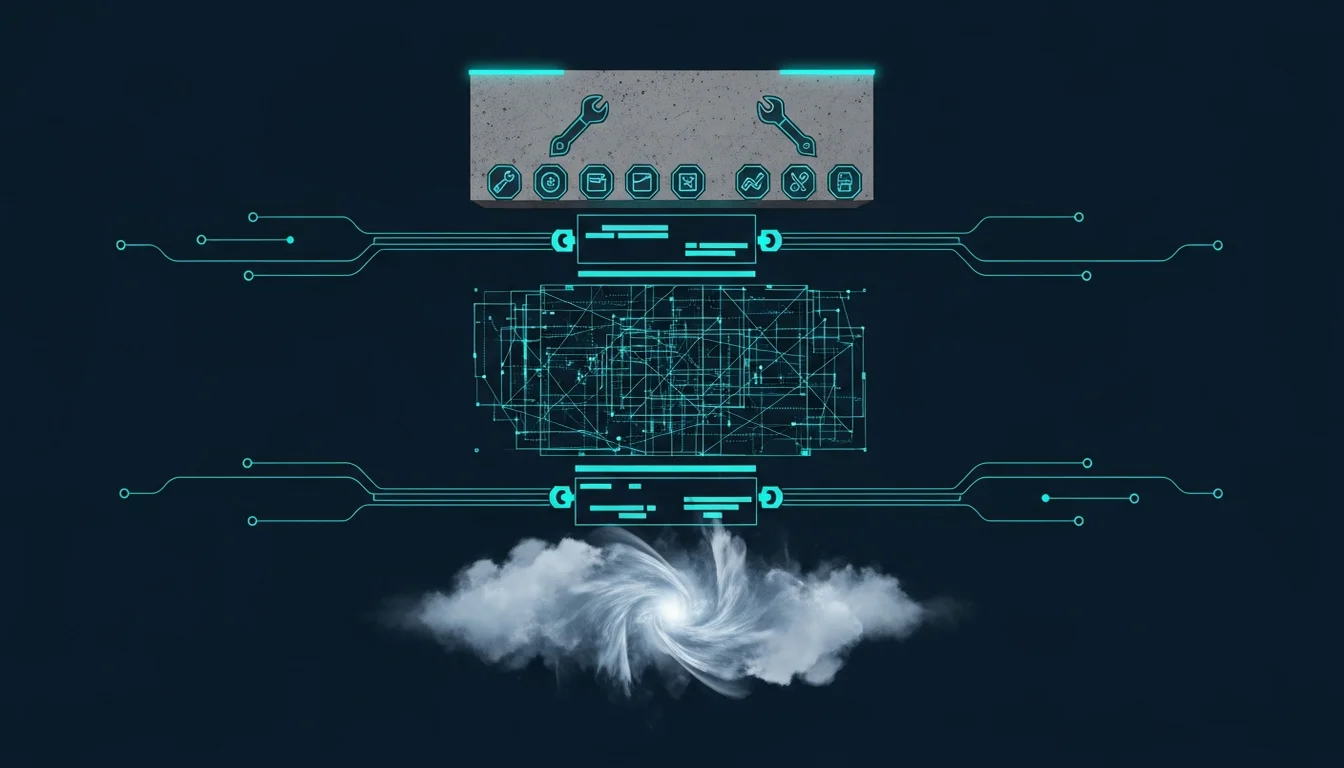

The Agent then formalized my direction into three interlocking feedback loops:

Detection Loop: Improves the quality and coverage of checks for known issues. This is what most people would do. Despite its name, it actually handles both detection and remediation in a closed loop.

Discovery Loop: Proactively explores unknown problems — running mutation tests on known issues, tracing root causes, making cross-domain inferences — so the scope of known issues keeps expanding, and through that, breaks through the ceiling.

Validation Loop: Verifies that the monitoring system itself has no bugs. A buggy monitoring system is more dangerous than no monitoring at all, because it gives you a false sense of security.

The three loops are “interlocking” because each loop’s output is fuel for the next, and none can modify its own baseline.

- The Detection Loop produces new checks, which become targets for the Discovery Loop’s mutation testing and regression test cases for the Validation Loop.

- The Discovery Loop produces newly identified issues, which become improvement targets for the Detection Loop and test instruments for the Validation Loop.

- The Validation Loop finds bugs in the monitoring system itself, which become fix items for the Detection Loop and blind-spot exploration seeds for the Discovery Loop.

The three loops feed each other, so the system keeps moving forward rather than finishing one cycle and stopping to wait for input.
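The routing described in the three bullets above can be sketched as a small table plus a `publish` function. The `Loop` class, the `ROUTES` table, and the example payloads are all hypothetical names for illustration. The roles on the right-hand side are taken from the bullets, but how the real system passes work between loops is not specified here.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Loop:
    name: str
    inbox: deque = field(default_factory=deque)


# producer -> (artifact it emits, {consumer: role the artifact plays there})
ROUTES = {
    "detection": ("new check", {
        "discovery": "mutation-test target",
        "validation": "regression test case",
    }),
    "discovery": ("new issue", {
        "detection": "improvement target",
        "validation": "test instrument",
    }),
    "validation": ("monitor bug", {
        "detection": "fix item",
        "discovery": "blind-spot seed",
    }),
}


def publish(loops, producer, payload):
    """Deliver one loop's output to the other two loops, never to itself."""
    artifact, consumers = ROUTES[producer]
    for consumer, role in consumers.items():
        loops[consumer].inbox.append((role, artifact, payload))


loops = {name: Loop(name) for name in ROUTES}
publish(loops, "detection", "check_write_conflict")
publish(loops, "discovery", "issue: stale cache write")
publish(loops, "validation", "bug: check skips empty tables")

for loop in loops.values():
    print(loop.name, [role for role, _artifact, _payload in loop.inbox])
```

Because no producer appears among its own consumers, each loop's inbox is filled only by the other two. That is one simple way to encode the rule that no loop gets to redefine its own baseline.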

Interestingly, the core logic of the Detection Loop directly borrows from a well-known existing framework: Karpathy’s autoresearch [4]. Its core concept is simple — modify code → evaluate with fixed metrics → if metrics improve, keep it (git commit), if not, discard it (git reset) → continue to the next iteration. It’s quite straightforward. So we only need to swap “modify code” for “modify check logic” and “metrics” for “detection rate,” and we can reuse this iteration framework directly, saving the cost of designing from scratch. (We didn’t adopt it wholesale because the metric evaluation approach differs; after modification, it would lose autoresearch’s automatic iteration advantage.)
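As a rough sketch of that keep-or-discard iteration applied to check logic: the version below holds candidates in memory instead of using git, and models a "check" as a set of tags, which is a simplification of my own. The issue data, the tag names, and the `iterate` helper are hypothetical, and this is not autoresearch's actual code.

```python
def detection_rate(rules: frozenset, issues: dict) -> float:
    """Fixed metric: share of known issues matched by at least one rule."""
    hits = sum(1 for tags in issues.values() if rules & tags)
    return hits / len(issues)


def iterate(rules: frozenset, proposals: list, issues: dict):
    """Try each proposed rule; keep it only if the fixed metric improves."""
    best = detection_rate(rules, issues)
    log = []
    for proposal in proposals:
        candidate = rules | {proposal}             # "modify check logic"
        score = detection_rate(candidate, issues)  # evaluate with the fixed metric
        if score > best:
            rules, best = candidate, score         # keep (analogous to git commit)
            log.append((proposal, "kept", score))
        else:
            log.append((proposal, "discarded", score))  # analogous to git reset
    return rules, best, log


# Hypothetical known issues: issue id -> tags describing the issue.
ISSUES = {
    "ISS-1": frozenset({"sql"}),
    "ISS-2": frozenset({"write_conflict"}),
    "ISS-3": frozenset({"sql", "atomicity"}),
    "ISS-4": frozenset({"atomicity"}),
}

rules, best, log = iterate(frozenset(), ["sql", "timezone", "atomicity"], ISSUES)
for proposal, outcome, score in log:
    print(f"{proposal}: {outcome} (detection rate {score:.0%})")
```

Note that the metric is computed against a fixed set of known issues, so this loop inherits the ceiling described earlier. It improves detection of what is already known and nothing more.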

But the most important thing in this entire section is that “wait, stop and organize first” moment. Agents are too used to working directly inside a small frame instead of stepping back to see the whole picture. If we had moved straight into execution, as described above, we would quickly hit the ceiling of the frame and have to start again.

By this point, the foundation of the methodology is largely in place, but I wanted to make sure it had no holes, so there is still some development ahead. Still, in this case, the thing most worth remembering is not the three-loop closure itself. It’s the act of stepping back to reorganize the big picture. With that step, we get a more complete methodology, because methodology is not a document you patch in after the work is done. It is something you should think through before you start working.

In the next article, we’ll look at what the three cores of this methodology are, what structural vulnerabilities it has, and how to turn methodology into a Playbook the Agent can act on.

References:

[1] Method vs Theory: Meaning And Differences — theory vs method distinction https://thecontentauthority.com/blog/method-vs-theory

[2] Methodology, Method, and Theory — Helen Kara — three-tier hierarchy https://helenkara.com/2018/02/15/methodology-method-and-theory/

[3] Tips 4: Agent’s Timezone Blind Spot — case from the previous article /en/articles/16-tips-agent-timezone-blind-spot

[4] Karpathy autoresearch — autonomous ML research framework https://github.com/karpathy/autoresearch
