Mindset: Evolving to an Agent Team, and Discovering Human Value

In the previous two articles we walked through the first three stages of the workflow mindset: chaining workflows, designing Context handoffs, and the refinement and platform-thinking cycle. This article covers the second half of that journey: as a workflow grows, what does it evolve into? And throughout that process, what is the human role?

Stage Four: Evolving to an Agent Team

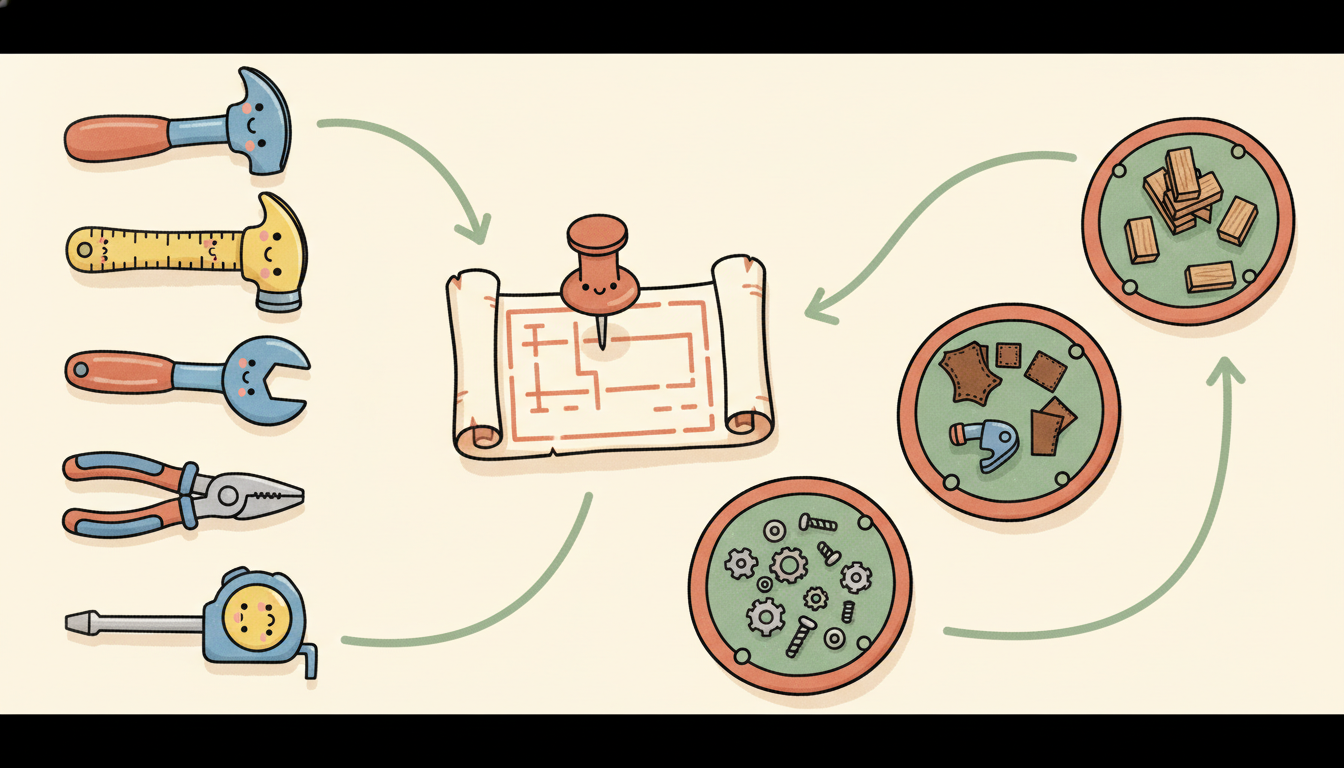

Through the stage-three cycle — diagnose, refine, classify — running over and over again, you will gradually improve your workflow. But at some point, the workflow will start evolving toward an Agent Team. You will notice that some Skills have become too complex and need their own Agent, some steps require a different set of tools, and some tasks need to run in parallel. This is exactly the scenario Article 7 described — moving from hub-and-spoke into a phase that requires collaboration.

At this point, there are two things to do.

First, revisit the workflow through the lens of the Agent Team concept. Go back to Article 7: which steps are a good fit for a Subagent (an independent task, finish and report back)? Which genuinely need an Agent Team (interdependent steps that require communication)? Don’t spin up an Agent Team just because it “feels more powerful.” Remember, the cost is 15x. The New Stack’s 2026 trend analysis [14] also notes that the industry expects most teams to adopt a three-stage model — human-prompted → agent-executed → human-reviewed — and that not everything requires an Agent Team. Most work can run perfectly well inside that three-stage model.
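
The Subagent-versus-Agent-Team question above can be written down as a small triage function. This is only a sketch: the `Step` fields and the `Execution` names are hypothetical labels invented for illustration, not part of any tool, and the rules simply restate the two criteria from the paragraph (independent tasks that finish and report back versus interdependent steps that must communicate).

```python
from dataclasses import dataclass
from enum import Enum


class Execution(Enum):
    SINGLE_AGENT = "keep it in the existing agent"
    SUBAGENT = "subagent"
    AGENT_TEAM = "agent team"


@dataclass(frozen=True)
class Step:
    # All fields are hypothetical labels you would fill in by hand.
    name: str
    independent: bool               # can finish alone and report back
    needs_peer_communication: bool  # must exchange intermediate results
    needs_own_tools: bool           # requires a different tool set or context


def choose_execution(step: Step) -> Execution:
    """Pick the lightest option that satisfies the step's needs."""
    if step.needs_peer_communication:
        # Interdependent steps are the only case that justifies a team.
        return Execution.AGENT_TEAM
    if step.independent and step.needs_own_tools:
        return Execution.SUBAGENT
    return Execution.SINGLE_AGENT
```

Running every step of an existing workflow through a function like this makes it visible how few steps actually land in the `AGENT_TEAM` bucket.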

Second, separate working directories. I recommend putting different Agents in different working directories, each with its own CLAUDE.md, while also having a global shared CLAUDE.md. The benefits are: each Agent’s Context stays clean (irrelevant rules are never loaded), each Agent’s Skills and Tools can be managed independently, and the globally shared parts ensure consistency. The morphllm CLAUDE.md examples analysis [29] validates this pattern: CLAUDE.md supports a layered architecture — root level (project-wide), ~/.claude/CLAUDE.md (global), and parent directories (monorepo). This naturally supports the hierarchical governance structure of multiple Agents, which is essentially the layered strategy from Article 3’s Context Engineering, elevated from the token level to the workflow architecture level.
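
To make the layering concrete, here is a minimal sketch that lists which CLAUDE.md files sit on the path from the global directory down to one Agent's working directory. The directory names (`agents/reviewer`, `agents/writer`) and both function names are assumptions made up for this example, and the script does not claim to reproduce how Claude Code itself loads these files. It is only a way to audit which rule files each Agent would see.

```python
from pathlib import Path


def claude_md_chain(agent_dir: Path, project_root: Path, global_dir: Path) -> list[Path]:
    """Return existing CLAUDE.md files for agent_dir, broadest scope first.

    Raises ValueError if agent_dir is not inside project_root.
    """
    agent_dir = agent_dir.resolve()
    project_root = project_root.resolve()
    candidates = [global_dir / "CLAUDE.md", project_root / "CLAUDE.md"]
    # Walk from the project root down to the agent's own directory.
    current = project_root
    for part in agent_dir.relative_to(project_root).parts:
        current = current / part
        candidates.append(current / "CLAUDE.md")
    return [path for path in candidates if path.is_file()]


def build_demo_layout(base: Path) -> tuple[Path, Path]:
    """Create a hypothetical layout: one global file, one shared file, two agents."""
    global_dir = base / "home" / ".claude"
    project_root = base / "project"
    reviewer = project_root / "agents" / "reviewer"
    writer = project_root / "agents" / "writer"
    for directory in (global_dir, reviewer, writer):
        directory.mkdir(parents=True)
    for directory in (global_dir, project_root, reviewer, writer):
        (directory / "CLAUDE.md").write_text("# rules\n")
    return global_dir, project_root
```

In the demo layout, the reviewer Agent picks up the global file, the shared project file, and its own file, but never the writer's rules, which is the "clean Context" benefit described above.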

Stage Five: Expanding, and Discovering Human Value

After cycling through the first four stages repeatedly, you will notice something: what humans need to do is changing.

You used to spend large amounts of time writing code, writing tests, writing documentation. Now AI handles those things. But new work has appeared — you are doing workflow design, Context architecture, quality review, and anomaly judgment. MIT Technology Review’s analysis [13] describes this shift precisely: humans have been elevated to the role of supervisor, designer, and strategic decision-maker — setting goals, defining ethical boundaries, and handling genuinely novel, high-stakes challenges. Anthropic’s 2026 report [10] observes the same trend: human oversight is shifting from “review everything” to “review what matters.”

These newly emerging tasks can themselves be examined through the entire process described above: can this new task be taken over by AI? If yes, go back to stage one and wire it into the workflow. If not, you have found genuine human value within this chain of work. This iterative process is essentially doing two things simultaneously: refining the workflow — letting AI handle more and handle it better — and discovering human value — finding the judgment calls, creativity, and decisions that AI cannot replace.

But there is a very easy trap to fall into here: when you discover that some new task “can be taken over by AI,” the instinctive reaction is often to build a new Agent to handle it. Hold on.

Anthropic states this very clearly in the official guide “Building Effective Agents” [31]:

“Find the simplest workable solution, and only add complexity when necessary. This may mean not building an agentic system at all.”

A joint study by Google DeepMind and MIT [32] uses data to back this up: when a single Agent can already complete a task with >45% accuracy, adding more Agents produces diminishing — or even negative — returns, because the coordination overhead of managing the team outweighs the marginal gains. A Towards Data Science analysis based on 1,642 execution records [33] also found that coordination gains plateau after 4 Agents; beyond that count, overhead eats all the benefits.

So before you decide to “build a new Agent,” ask yourself one question first:

Is there a way to accomplish this by adjusting the existing architecture?

Concretely, you can check these lighter-weight options in order:

- Adjust Rules — if adding one rule lets the existing Agent handle it, you don’t need a new Agent

- Trim CLAUDE.md — maybe the problem isn’t lack of capability but a cluttered Context; trimming it may be enough

- Adjust a Skill — pack the new knowledge or methodology into an existing Skill rather than starting from scratch

- Adjust a Command / Skill entry — add a new entry point to trigger different behavior from the existing Agent (March 31, 2026 Update: Commands are being merged into Skills)

- Rezone Context areas — use layering or partitioning to let the existing Agent work in different Context states
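
The "in order" part of this checklist can be sketched as a simple ladder: try each lighter option first and only fall through to a new Agent when none is enough. The `is_sufficient` callback is a hypothetical stand-in for your own judgment or experiment, and the option strings just mirror the list above.

```python
from typing import Callable

# Lighter-weight options, cheapest first, mirroring the checklist above.
LADDER = [
    "Adjust Rules",
    "Trim CLAUDE.md",
    "Adjust a Skill",
    "Adjust a Command / Skill entry",
    "Rezone Context areas",
]

LAST_RESORT = "Build a new Agent"


def first_sufficient_option(is_sufficient: Callable[[str], bool]) -> str:
    """Return the first option that solves the problem, else the last resort."""
    for option in LADDER:
        if is_sufficient(option):
            return option
    return LAST_RESORT
```

For example, if both a Skill adjustment and a Context rezoning would work, the function returns "Adjust a Skill" because it comes earlier on the ladder.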

LangChain’s multi-agent guide [34] makes the same point: before adding an Agent, get the context engineering right first. Even Anthropic’s own multi-agent research system has its final synthesis step “deliberately handled by a single main Agent in one unified call.”

Only when you have confirmed that none of the above adjustments can solve the problem should you build a new Agent. This isn’t being conservative — it’s being pragmatic. Every additional Agent adds another coordination tax, another failure point, another line-item in token spending. It’s the YAGNI principle from software engineering (You Aren’t Gonna Need It): don’t build something you’re not sure you need yet, just to satisfy a hypothetical future requirement.

A Fortune 2026 analysis [21] defines three human-AI collaboration modes: Cyborg (humans and AI deeply merged, collaborating at every step), Centaur (humans selectively delegate specific sub-tasks to AI), and Self-Automator (automate as completely as possible). Most people will move between these three modes — some work fits Centaur, some fits Cyborg. The key is knowing clearly which mode you are in at any given moment, and why.

This is not a one-time conclusion. It is a continuously evolving process. As AI capabilities advance, this boundary will keep shifting. Something you feel only a human can do today may be delegatable to AI in six months — and six months from now, you will be doing new work you can’t even imagine today.

A Note on the “Illusion of Completeness”

The above is about “don’t rush to add Agents.” But there is an even more hidden trap: even without over-adding Agents, the workflow itself can produce the illusion that everything is fine.

RAD Security introduced the concept of “Cosmetic AI” [27]: tools look modern, summaries sound intelligent, but the underlying work has not actually advanced. This risk exists at two levels simultaneously. One is the output illusion: your workflow may “appear” to have automated many things, but in reality it has only buried the problems deeper. The other is the architecture illusion: your system has multiple Agents, a clean-looking topology, something that appears enterprise-grade, when in fact two Agents with better Skills would accomplish exactly the same thing.

The designative analysis [28] goes further: AI-generated high-fidelity output creates an “illusion of completeness” that causes reviews to focus on surface-level critique rather than deep strategic discussion.

The countermeasure: run a full system “stress test” on a regular basis. Not just checking whether each step is functioning correctly, but asking more fundamental questions:

- If one Agent returns a completely wrong result, can the downstream workflow detect it?

- If a Context source disappears, does the whole system degrade gracefully or crash immediately?

- Are there any Agents in this system that could actually be replaced with a simpler Rule, Skill, or Context adjustment?

- When was the last time someone manually reviewed the entire workflow from end to end?
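
The first question on this list can be turned into a small fault-injection test. The sketch below is a toy pipeline, not a real agent framework: `research`, `summarize`, and `has_sources` are hypothetical stages and checks invented for illustration. It corrupts one stage's output and reports whether any downstream check notices.

```python
from typing import Callable, Optional

Context = dict
Stage = Callable[[Context], Context]
Check = Callable[[Context], bool]
Pipeline = list[tuple[Stage, Optional[Check]]]


def research(ctx: Context) -> Context:
    return {**ctx, "sources": ["doc-a", "doc-b"]}


def summarize(ctx: Context) -> Context:
    # Tolerant of bad input, so a broken upstream stage still "looks" fine.
    sources = ctx.get("sources") or []
    return {**ctx, "summary": f"Summary of {len(sources)} sources"}


def has_sources(ctx: Context) -> bool:
    sources = ctx.get("sources")
    return isinstance(sources, list) and len(sources) > 0


def run(pipeline: Pipeline, ctx: Context) -> tuple[Context, list[str]]:
    """Run stages in order and collect names of stages whose check failed."""
    failures = []
    for stage, check in pipeline:
        ctx = stage(ctx)
        if check is not None and not check(ctx):
            failures.append(stage.__name__)
    return ctx, failures


def fault_is_detected(pipeline: Pipeline, index: int) -> bool:
    """Corrupt the output of one stage and report whether any check fires."""
    original, check = pipeline[index]

    def broken(ctx: Context) -> Context:
        # Simulate an agent returning a completely wrong result.
        return {**original(ctx), "sources": None}

    injected = pipeline[:index] + [(broken, check)] + pipeline[index + 1:]
    _, failures = run(injected, {})
    return bool(failures)
```

With `[(research, has_sources), (summarize, None)]` the injected fault is caught. With both checks set to `None`, the pipeline still produces a plausible-looking summary of zero sources and nothing is flagged, which is exactly the output illusion described above.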

At this point we have walked through all five stages. But if you start building a workflow right now, you will quickly hit two specific pitfalls, and academic research has confirmed that these are nearly impossible to avoid. MIT Press research [2] says LLMs cannot reliably self-correct; the ICSE 2025 paper [4] reports that every LLM it tested recommended deprecated APIs. Not “this might happen,” but “this will happen.”

So what do you do? In the next article, we cover the countermeasures for both pitfalls, the core principles running through this entire mindset, and the limits of this spiral you need to know about.

References

[2] Huang et al., “When Can LLMs Actually Correct Their Own Mistakes?” (MIT Press/TACL) https://direct.mit.edu/tacl/article/doi/10.1162/tacl_a_00713/125177

[4] “LLMs Meet Library Evolution” (ICSE 2025) — all tested LLMs recommended deprecated APIs https://dl.acm.org/doi/10.1109/ICSE55347.2025.00245

[10] Anthropic, “2026 Agentic Coding Trends Report” — 60% of work uses AI, 0–20% fully delegatable https://resources.anthropic.com/2026-agentic-coding-trends-report

[13] MIT Technology Review, “Human-AI Collaboration Roadmap” — human role shifts to supervisor and strategic decision-maker https://www.technologyreview.com/2025/12/05/1128730/harnessing-human-ai-collaboration-for-an-ai-roadmap-that-moves-beyond-pilots

[14] The New Stack, “5 Key Trends Shaping Agentic Development 2026” — human-prompted → agent-executed → human-reviewed https://thenewstack.io/5-key-trends-shaping-agentic-development-in-2026/

[21] Fortune, “Cyborg, Centaur, or Self-Automator” (2026) — three human-AI collaboration modes https://fortune.com/2026/01/30/ai-business-humans-in-the-loop-cyborg-centaur-or-self-automator/

[27] RAD Security, “The Cost of Cosmetic AI” — Cosmetic AI and the illusion of completeness https://www.radsecurity.ai/blog/the-cost-of-cosmetic-ai-why-gpt-wrappers-drain-more-than-they-deliver

[28] designative, “Rethinking Design Critiques in the Age of AI” — high-fidelity output masks deeper problems https://www.designative.info/2025/11/10/rethinking-design-critiques-in-the-age-of-ai-prototyping/

[29] morphllm, “CLAUDE.md Examples 2026” — CLAUDE.md layered architecture examples https://www.morphllm.com/claude-md-examples

[31] Anthropic, “Building Effective Agents” (2024) — exhaust simpler options first, add complexity only when necessary https://www.anthropic.com/research/building-effective-agents

[32] Google DeepMind + MIT, “Towards a Science of Scaling Agent Systems” (arXiv, 2025) — quantifying the coordination tax and diminishing returns of adding Agents https://arxiv.org/abs/2512.08296

[33] Towards Data Science, “Why Your Multi-Agent System is Failing: The 17x Error Trap” (2026) — Agent sprawl risk and the 4-Agent performance ceiling https://towardsdatascience.com/why-your-multi-agent-system-is-failing-escaping-the-17x-error-trap-of-the-bag-of-agents/

[34] LangChain, “How and When to Build Multi-Agent Systems” (2025) — when not to use multi-agent, context engineering first https://blog.langchain.com/how-and-when-to-build-multi-agent-systems/
